Hi all! As part of my coursework, I explored the capabilities of the Russian cloud platform Yandex.Cloud. The platform offers various services for solving practical problems. Sometimes, however, you need to build your own cloud application with a fairly extensive infrastructure on top of these services. In this article, I want to share my experience of deploying such an application.

What do we want to get?

Grafana is a powerful tool for analytics and for monitoring all kinds of systems. In its basic configuration, it is a virtual machine running the Grafana web server, plus a database (ClickHouse, InfluxDB, etc.) holding the dataset on which the analytics will be built.

After starting a virtual machine with a web server, you can go to its host and get a beautiful UI, specify databases as sources for further work, create dashboards and graphs.

The basic version has one significant drawback: it is not fault-tolerant at all. The availability of the whole application depends on a single virtual machine. If it fails, or if ten people open the UI at the same time, problems will arise.

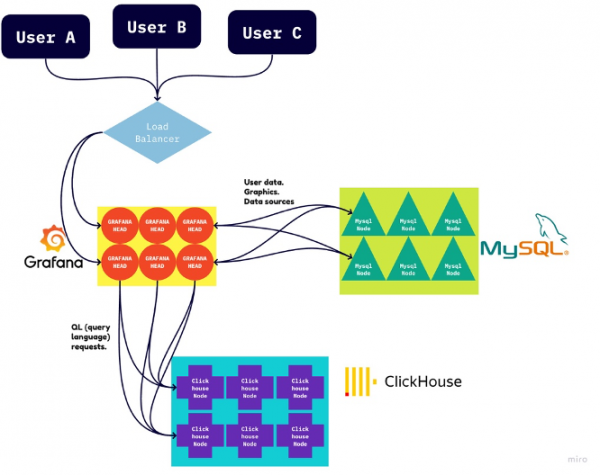

The fix sounds simple: deploy many identical virtual machines with the web server and put them behind an L3 balancer. But it is not that straightforward. Grafana stores user settings (paths to databases, dashboards, graphs, etc.) directly on the disk of its virtual machine. So if you change some settings in the UI, the changes appear only on the virtual machine the balancer happened to send you to. The result is inconsistent settings across the application and problems with launching and using it.

Another database comes to the rescue here, for example MySQL or an equivalent. We tell Grafana to store user settings in this "spare" database. After that, it is enough to specify the path to this database once on each machine; all other user settings can be edited on any of the virtual machines, and they will propagate to the rest.

Here is a diagram of the final application infrastructure:

Learning to deploy by hand

MySQL and ClickHouse

Before deploying such an application at the click of a button, I had to learn how to bring up each of its components by hand and integrate them with each other.

Yandex.Cloud will help us here, which provides L3 balancers, ClickHouse and MySQL as managed services. The user only needs to specify the parameters and wait until the platform brings everything into a working state.

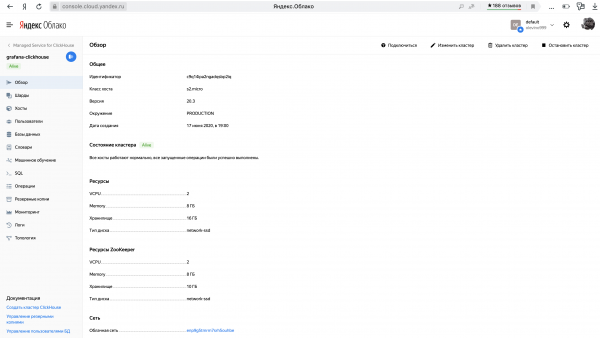

I registered and created a cloud and a billing account. After that, I went into the cloud and brought up MySQL and ClickHouse clusters with minimal settings, then waited until they became active.

You also need to remember to create a database in each cluster and configure access to it by login and password. I will not go into details here - everything is quite obvious in the interface.

A non-obvious detail is that these databases have many hosts, which is what makes them fault-tolerant. Grafana, however, requires exactly one host for each database it works with. A long read of the cloud documentation led me to a solution. It turns out that a host of the form c-<cluster_id>.rw.mdb.yandexcloud.net maps to the current active master host of the cluster with the corresponding ID. That is what we will give to Grafana.
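To make the naming rule concrete, here is a minimal shell sketch that builds the host name from a cluster ID. The function name master_host and the sample ID abc123 are placeholders made up for illustration; nothing here is provided by Yandex.Cloud itself.

```bash
#!/bin/bash
# Hypothetical helper: build the FQDN that, per the rule above, always
# points at the current master of a managed database cluster.
master_host() {
  local cluster_id="$1"
  printf 'c-%s.rw.mdb.yandexcloud.net\n' "$cluster_id"
}

# "abc123" is a made-up cluster ID; substitute the ID shown in the console.
master_host "abc123"
```

The same string is what later goes into Grafana's database URL and into the ClickHouse data source URL.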

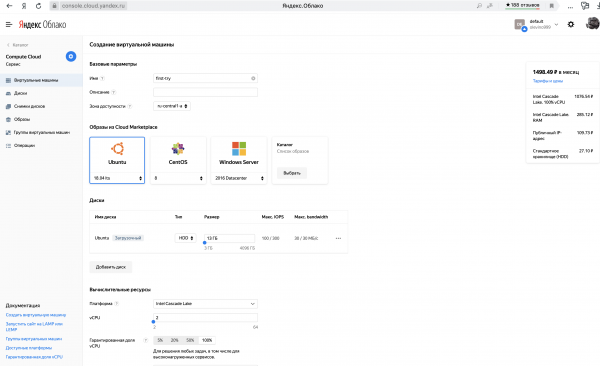

Web server

Now for the web server. Let's bring up a regular Linux virtual machine and set up Grafana on it by hand.

Let's connect via ssh and install the necessary packages.

sudo apt-get install -y apt-transport-https software-properties-common wget

wget -q -O - https://packages.grafana.com/gpg.key | sudo apt-key add -

sudo add-apt-repository "deb https://packages.grafana.com/enterprise/deb stable main"

sudo apt-get update

sudo apt-get install -y grafana-enterprise

After that, we will start Grafana under systemctl and install the plugin for working with ClickHouse (yes, it is not supplied in the basic package).

sudo systemctl start grafana-server

sudo systemctl enable grafana-server

sudo grafana-cli plugins install vertamedia-clickhouse-datasourceEverything after that with a simple command

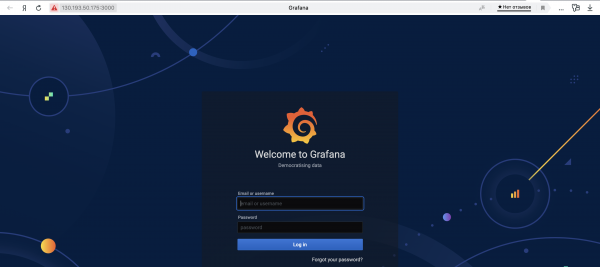

sudo service grafana-server startwe will start the web server. Now it will be possible to drive in the external IP address of the virtual machine in the browser, specify port 3000 and see the beautiful UI grafana.

But do not rush, before setting up Grafana, you must not forget to specify the path to MySQL for it to store the settings there.

The entire configuration of the Grafana web server is in the file /etc/grafana/grafana.ini. The required line looks like this:

;url =

We set it to the host of the MySQL cluster. The same file contains the login and password for accessing Grafana, shown in the picture above; by default both are admin.

You can make these changes with sed commands:

sudo sed -i "s#.*;url =.*#url = mysql://${MYSQL_USERNAME}:${MYSQL_PASSWORD}@${MYSQL_CLUSTER_URI}#" /etc/grafana/grafana.ini

sudo sed -i "s#.*;admin_user =.*#admin_user = ${GRAFANA_USERNAME}#" /etc/grafana/grafana.ini

sudo sed -i "s#.*;admin_password =.*#admin_password = ${GRAFANA_PASSWORD}#" /etc/grafana/grafana.ini
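To see what these substitutions do without touching a real server, the sketch below applies the same sed expression to a small sample file. The helper name set_ini_key, the sample file contents, and all values are assumptions made for the demonstration.

```bash
#!/bin/bash
set -euo pipefail

# Hypothetical helper: uncomment ";key =" in an ini file and set its value.
# '#' is the sed delimiter, so the value must not contain '#', '&' or backslashes.
# Every line matching ";key =" is rewritten, not only the one in a given section.
set_ini_key() {
  local file="$1" key="$2" value="$3"
  sed -i "s#.*;${key} =.*#${key} = ${value}#" "$file"
}

# A tiny stand-in for /etc/grafana/grafana.ini
sample="$(mktemp)"
printf '%s\n' '[database]' ';url =' '[security]' \
  ';admin_user = admin' ';admin_password = admin' > "$sample"

set_ini_key "$sample" url "mysql://user:secret@c-abc123.rw.mdb.yandexcloud.net"
set_ini_key "$sample" admin_user "user"
set_ini_key "$sample" admin_password "secret"
cat "$sample"
```

Because the value is pasted straight into the sed expression, a password containing one of the special characters above would need escaping or a different editing tool.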

It's time to restart the web server!

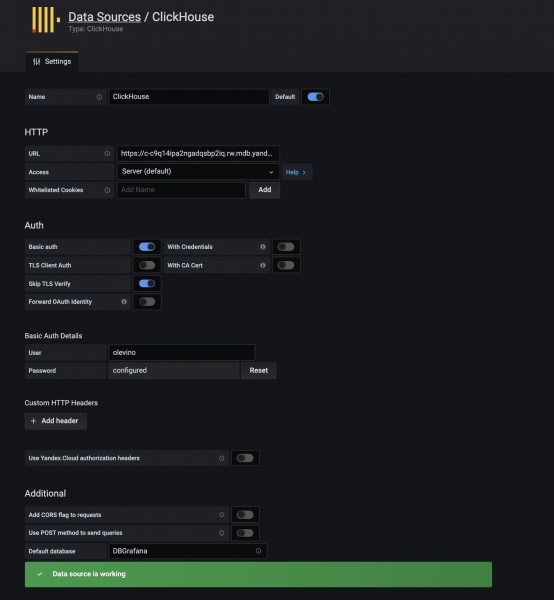

sudo service grafana-server restart

Now, in the Grafana UI, we will specify ClickHouse as a data source.

I managed to achieve a working configuration with the following settings:

As the URL, I specified https://c-<cluster_id>.rw.mdb.yandexcloud.net:8443

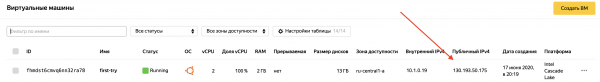

That's it! We have one working virtual machine with a web server connected to ClickHouse (CH) and MySQL. You can already upload a dataset to ClickHouse and build dashboards. However, we have not yet reached our goal of deploying a full-fledged infrastructure.

Packer

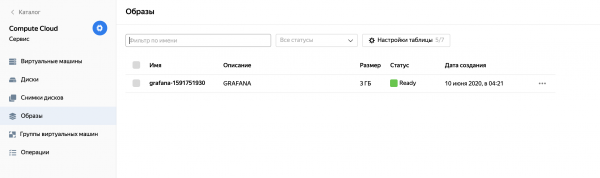

Yandex.Cloud lets you create a disk image of an existing virtual machine and then create as many identical machines from it as you like. This is exactly what we will use. To build the image conveniently, we take Packer, a tool from HashiCorp. It takes as input a JSON file with instructions for building the image.

Our JSON file will consist of two blocks: builders and provisioners. The first block describes the parameters of the image itself as an entity, and the second describes how to fill it with the necessary content.

Builders

{

"builders": [

{

"type": "yandex",

"endpoint": "{{user `endpoint`}}",

"folder_id": "<folder_id>",

"subnet_id": "{{user `subnet_id`}}",

"zone": "{{user `zone`}}",

"labels": {},

"use_ipv4_nat": true,

"use_internal_ip": false,

"service_account_key_file": "<service_account_key_file>",

"image_name": "grafana-{{timestamp}}",

"image_family": "grafana",

"image_labels": {},

"image_description": "GRAFANA",

"source_image_family": "ubuntu-1804-lts",

"disk_size_gb": 3,

"disk_type": "network-hdd",

"ssh_username": "ubuntu"

}

],

...

}

In this template, you need to set the identifier of the folder in the cloud where you want to create the image, as well as the path to the file with the keys of a service account previously created in this folder. You can read more about creating service accounts and key files in the corresponding section of the documentation.

This configuration says that the disk image will be built on top of ubuntu-1804-lts and placed in the given folder, in the image family grafana, under the name grafana-{{timestamp}}.

Provisioners

Now for the more interesting part of the configuration. It will describe the sequence of actions that will need to be performed on a virtual machine before freezing its state into a disk image.

{

...,

"provisioners": [

{

"type": "shell",

"pause_before": "5s",

"scripts": [

"prepare-ctg.sh"

]

},

{

"type": "file",

"source": "setup.sh",

"destination": "/opt/grafana/setup.sh"

},

{

"type": "shell",

"execute_command": "sudo {{ .Vars }} bash '{{ .Path }}'",

"pause_before": "5s",

"scripts": [

"install-packages.sh",

"grafana-setup.sh",

"run-setup-at-reboot.sh"

]

}

]

}

Here, all actions are divided into three stages. In the first stage, a simple script creates an auxiliary directory.

prepare-ctg.sh:

#!/bin/bash

sudo mkdir -p /opt/grafana

sudo chown -R ubuntu:ubuntu /opt/grafana

In the next stage, we place a script in this directory that must run right after the virtual machine starts. The script writes the user-defined values into Grafana's config and restarts the web server.

setup.sh:

#!/bin/bash

CLUSTER_ID="<cluster_id>"

USERNAME="<username>"

PASSWORD="<password>"

sudo sed -i "s#.*;url =.*#url = mysql://${USERNAME}:${PASSWORD}@c-${CLUSTER_ID}.rw.mdb.yandexcloud.net#" /etc/grafana/grafana.ini

sudo sed -i "s#.*;admin_user =.*#admin_user = ${USERNAME}#" /etc/grafana/grafana.ini

sudo sed -i "s#.*;admin_password =.*#admin_password = ${PASSWORD}#" /etc/grafana/grafana.ini

sudo service grafana-server restart

After that, there are three things left to do:

1) install packages

2) start Grafana under systemctl and install the ClickHouse plugin

3) put the setup.sh script in the queue to run immediately after turning on the virtual machine.
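A job scheduled to fire at boot will fire on every boot, not only the first one. If the setup should run exactly once, one option is a marker-file guard, sketched below. The helper name run_once and the marker path are assumptions for illustration and are not part of the scripts in this article.

```bash
#!/bin/bash
set -euo pipefail

# Hypothetical guard: run a command only if the marker file does not exist yet,
# and create the marker once the command has succeeded.
run_once() {
  local marker="$1"; shift
  if [ -e "$marker" ]; then
    return 0
  fi
  "$@"
  touch "$marker"
}

# Demo in a temporary directory; on a real machine the marker could live
# somewhere like /opt/grafana/.setup-done (an assumed path).
workdir="$(mktemp -d)"
run_once "$workdir/.setup-done" sh -c "echo configured >> '$workdir/log'"
run_once "$workdir/.setup-done" sh -c "echo configured >> '$workdir/log'"
cat "$workdir/log"   # the command ran only once
```

With the sed-based setup the guard is mostly a safety net, since the second run finds nothing left to uncomment, but it also avoids an unnecessary Grafana restart on each reboot.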

install-packages.sh:

#!/bin/bash

sudo systemd-run --property='After=apt-daily.service apt-daily-upgrade.service' --wait /bin/true

sudo apt-get install -y apt-transport-https

sudo apt-get install -y software-properties-common wget

wget -q -O - https://packages.grafana.com/gpg.key | sudo apt-key add -

sudo add-apt-repository "deb https://packages.grafana.com/enterprise/deb stable main"

sudo apt-get update

sudo apt-get install -y grafana-enterprise

grafana-setup.sh:

#!/bin/bash

sudo systemctl start grafana-server

sudo systemctl enable grafana-server

sudo grafana-cli plugins install vertamedia-clickhouse-datasource

run-setup-at-reboot.sh:

#!/bin/bash

chmod +x /opt/grafana/setup.sh

cat > /etc/cron.d/first-boot <<EOF

PATH=/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin:/usr/games:/usr/local/games

@reboot root /bin/bash /opt/grafana/setup.sh > /var/log/yc-setup.log 2>&1

EOF

chmod +x /etc/cron.d/first-boot

Now it remains to run Packer and get the output image placed in the specified folder. When creating a virtual machine, you can select it as the boot disk and, after startup, get a ready-made Grafana web server.
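Running Packer might look roughly like the sketch below. It only prints the commands, so it can be tried without Packer installed. The template file name grafana.json and the variable values are placeholders, and the template's remaining user variable (endpoint) is assumed to have a default in the elided part of the file.

```bash
#!/bin/bash
set -euo pipefail

# Print (rather than execute) the Packer commands for a given template.
# Values passed with -var fill the {{user `...`}} placeholders in the builder.
packer_commands() {
  local template="$1" subnet_id="$2" zone="$3"
  echo "packer validate -var subnet_id=${subnet_id} -var zone=${zone} ${template}"
  echo "packer build -var subnet_id=${subnet_id} -var zone=${zone} ${template}"
}

# All three arguments are placeholders.
packer_commands grafana.json my-subnet-id ru-central1-a
```

Validating first catches JSON and variable mistakes before a temporary virtual machine is started for the build.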

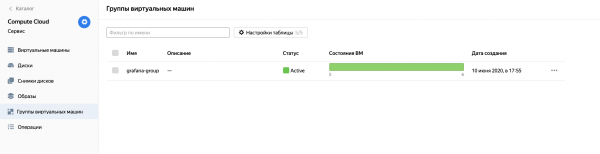

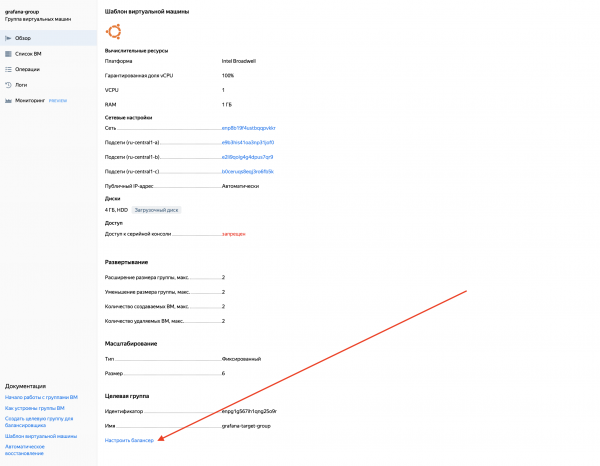

Instance group and balancer

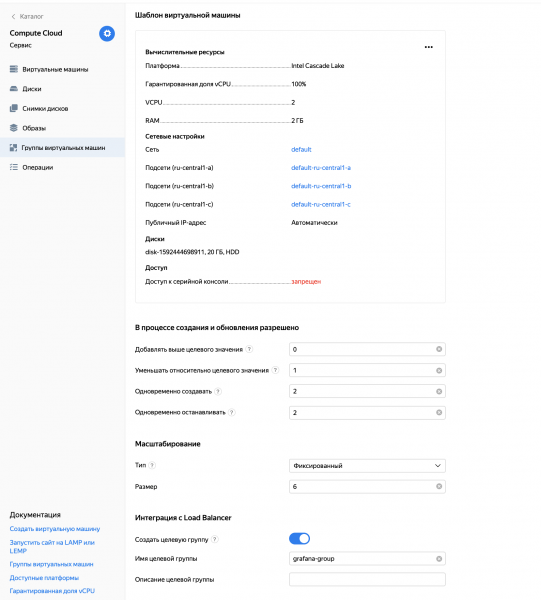

Now that there is a disk image from which many identical Grafana web servers can be created, we can create an instance group. On the Yandex.Cloud platform, this term refers to a set of virtual machines with the same characteristics. When creating an instance group, you configure the prototype for all machines in the group and then the characteristics of the group itself (for example, the minimum and maximum number of active machines). If the current number falls outside this range, the instance group itself removes unneeded machines or creates new ones from the same prototype.
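The scaling rule can be pictured with a toy model. This is only an illustration of the idea, not how the platform implements it; the function name reconcile is made up.

```bash
#!/bin/bash
# Toy model of the scaling rule: compare the current machine count with
# the allowed range and report the corrective action.
reconcile() {
  local current="$1" min="$2" max="$3"
  if [ "$current" -lt "$min" ]; then
    echo "create $((min - current))"
  elif [ "$current" -gt "$max" ]; then
    echo "delete $((current - max))"
  else
    echo "ok"
  fi
}

reconcile 4 6 6   # prints "create 2": two machines short of the minimum
reconcile 8 6 6   # prints "delete 2": two machines above the maximum
reconcile 6 6 6   # prints "ok": within range
```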

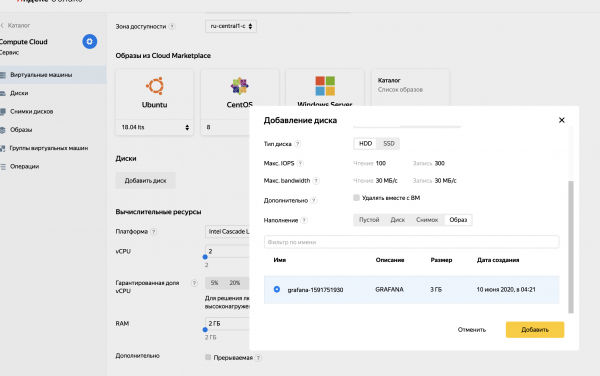

For our task, we will create an instance group of web servers spawned from the previously created disk image.

The last instance group setting is particularly notable. The target group integration with Load Balancer lets you set up an L3 balancer on top of the group's virtual machines with a couple of clicks.

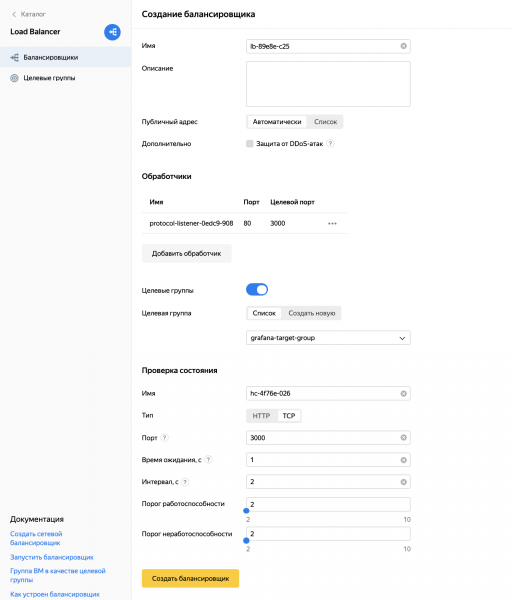

When setting up the balancer, I took care of two important points:

- The balancer accepts user traffic on port 80 and forwards it to port 3000 of the virtual machines, which is where Grafana lives.

- Machine health checks are configured to probe port 3000.
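A TCP health check essentially asks whether a connection to the port can be opened. The sketch below does the same by hand using bash's /dev/tcp redirection. The helper name tcp_check is made up, and the demo targets localhost only so that it is safe to run anywhere; on a real setup you would pass the address of a web server machine.

```bash
#!/bin/bash
# Hypothetical helper: succeed if a TCP connection to host:port can be opened
# within two seconds, roughly what a TCP health check verifies.
tcp_check() {
  local host="$1" port="$2"
  timeout 2 bash -c "exec 3<>/dev/tcp/${host}/${port}" 2>/dev/null
}

# Demo against localhost; the result depends on whether anything listens on 3000.
if tcp_check 127.0.0.1 3000; then
  echo "healthy"
else
  echo "unhealthy"
fi
```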

Interim summary

Finally, we were able to deploy the desired application infrastructure by hand, and we now have a highly resilient Grafana service. All you need to know is the IP address of the balancer, as the entry point to the application, and the host of the ClickHouse cluster, in order to load the dataset into it.

A victory, it would seem? Yes, a victory. But something still bothers me. The whole process above requires many manual steps and does not scale at all; I want to automate it as far as possible. That is the focus of the next section.

Integration with Terraform

We will again use a tool from HashiCorp, this time Terraform. It will help deploy the entire application infrastructure at the click of a button, based on a few variables passed by the user. Let's write a recipe that can be run repeatedly in different folders of different users.

All work with Terraform comes down to writing a configuration file (*.tf) and creating infrastructure based on it.

Variables

At the very beginning of the file, we declare the variables that determine where and how the future infrastructure will be deployed.

variable "oauth_token" {

type = string

default = "<oauth-token>"

}

variable "cloud_id" {

type = string

default = "<cloud-id>"

}

variable "folder_id" {

type = string

default = "<folder_id>"

}

variable "service_account_id" {

type = string

default = "<service_account_id>"

}

variable "image_id" {

type = string

default = "<image_id>"

}

variable "username" {

type = string

default = "<username>"

}

variable "password" {

type = string

default = "<password>"

}

variable "dbname" {

type = string

default = "<dbname>"

}

variable "public_key_path" {

type = string

default = "<path to ssh public key>"

}

The whole process of deploying the application then comes down to building a disk image and setting these variables. Let me explain what each is responsible for:

- oauth_token: a token for access to the cloud. It can be obtained as described in the Yandex.Cloud documentation.

- cloud_id: identifier of the cloud where we will deploy the application.

- folder_id: identifier of the folder where we will deploy the application.

- service_account_id: identifier of the service account in the corresponding folder of the cloud.

- image_id: identifier of the disk image built with Packer.

- username and password: user name and password for access to both databases and to the Grafana web server.

- dbname: name of the database inside the CH and MySQL clusters.

- public_key_path: path to the file with your public ssh key, with which you can connect to the web server virtual machines as the user ubuntu.
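Instead of writing secrets into the default values, the variables can also be supplied from the environment: Terraform treats an environment variable named TF_VAR_<name> as the value of the input variable <name>. The sketch below is one way to do that; the user name and the way the password is generated are assumptions for illustration.

```bash
#!/bin/bash
set -euo pipefail

# Terraform reads TF_VAR_<name> as the value of the input variable <name>,
# so secrets need not be written into the .tf file.
export TF_VAR_username="grafana"   # placeholder user name

# 8 random bytes printed as 16 hex characters: safe inside a URL and a sed expression.
TF_VAR_password="$(od -An -N8 -tx1 /dev/urandom | tr -d ' \n')"
export TF_VAR_password

echo "username=${TF_VAR_username} password_length=${#TF_VAR_password}"
```

A password generated this way should be created once and kept somewhere safe, because a new value on a later run would be seen by Terraform as a change to apply.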

Provider setting

Now you need to configure the Terraform provider - in our case, Yandex:

provider "yandex" {

token = var.oauth_token

cloud_id = var.cloud_id

folder_id = var.folder_id

zone = "ru-central1-a"

}

You can see that here we are using the variables defined above.

Network and clusters

Now let's create a network in which the elements of our infrastructure will communicate, three subnets (one in each availability zone), and bring up the CH and MySQL clusters.

resource "yandex_vpc_network" "grafana_network" {}

resource "yandex_vpc_subnet" "subnet_a" {

zone = "ru-central1-a"

network_id = yandex_vpc_network.grafana_network.id

v4_cidr_blocks = ["10.1.0.0/24"]

}

resource "yandex_vpc_subnet" "subnet_b" {

zone = "ru-central1-b"

network_id = yandex_vpc_network.grafana_network.id

v4_cidr_blocks = ["10.2.0.0/24"]

}

resource "yandex_vpc_subnet" "subnet_c" {

zone = "ru-central1-c"

network_id = yandex_vpc_network.grafana_network.id

v4_cidr_blocks = ["10.3.0.0/24"]

}

resource "yandex_mdb_clickhouse_cluster" "ch_cluster" {

name = "grafana-clickhouse"

environment = "PRODUCTION"

network_id = yandex_vpc_network.grafana_network.id

clickhouse {

resources {

resource_preset_id = "s2.micro"

disk_type_id = "network-ssd"

disk_size = 16

}

}

zookeeper {

resources {

resource_preset_id = "s2.micro"

disk_type_id = "network-ssd"

disk_size = 10

}

}

database {

name = var.dbname

}

user {

name = var.username

password = var.password

permission {

database_name = var.dbname

}

}

host {

type = "CLICKHOUSE"

zone = "ru-central1-a"

subnet_id = yandex_vpc_subnet.subnet_a.id

}

host {

type = "CLICKHOUSE"

zone = "ru-central1-b"

subnet_id = yandex_vpc_subnet.subnet_b.id

}

host {

type = "CLICKHOUSE"

zone = "ru-central1-c"

subnet_id = yandex_vpc_subnet.subnet_c.id

}

host {

type = "ZOOKEEPER"

zone = "ru-central1-a"

subnet_id = yandex_vpc_subnet.subnet_a.id

}

host {

type = "ZOOKEEPER"

zone = "ru-central1-b"

subnet_id = yandex_vpc_subnet.subnet_b.id

}

host {

type = "ZOOKEEPER"

zone = "ru-central1-c"

subnet_id = yandex_vpc_subnet.subnet_c.id

}

}

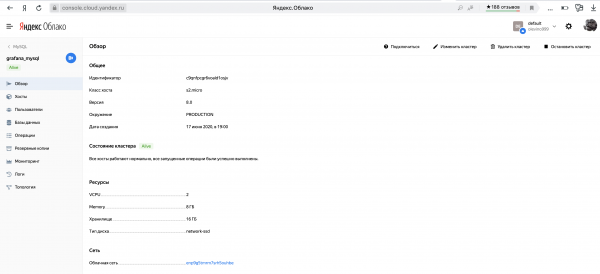

resource "yandex_mdb_mysql_cluster" "mysql_cluster" {

name = "grafana_mysql"

environment = "PRODUCTION"

network_id = yandex_vpc_network.grafana_network.id

version = "8.0"

resources {

resource_preset_id = "s2.micro"

disk_type_id = "network-ssd"

disk_size = 16

}

database {

name = var.dbname

}

user {

name = var.username

password = var.password

permission {

database_name = var.dbname

roles = ["ALL"]

}

}

host {

zone = "ru-central1-a"

subnet_id = yandex_vpc_subnet.subnet_a.id

}

host {

zone = "ru-central1-b"

subnet_id = yandex_vpc_subnet.subnet_b.id

}

host {

zone = "ru-central1-c"

subnet_id = yandex_vpc_subnet.subnet_c.id

}

}

As you can see, each of the two clusters is made fairly fault-tolerant by placing its hosts in three availability zones.

Web servers

It would seem that we could continue in the same spirit, but I ran into a difficulty. Previously, I first brought up the MySQL cluster and only then, knowing its ID, built a disk image with the desired configuration, in which I specified the cluster host. But now we do not know the cluster ID before Terraform runs, and therefore not at image build time either. So I had to resort to the following trick.

Using the virtual machine metadata service, similar to the one from Amazon, we will pass some parameters to the virtual machine, which it will read and process (the script below queries the service through its Google-style endpoint). After startup, the machine needs to fetch from the metadata the MySQL cluster host and the user name and password that the user specified in the Terraform file. Let's slightly change the contents of setup.sh, which runs when the virtual machine is turned on.

setup.sh:

#!/bin/bash

CLUSTER_URI="$(curl -H 'Metadata-Flavor:Google' http://169.254.169.254/computeMetadata/v1/instance/attributes/mysql_cluster_uri)"

USERNAME="$(curl -H 'Metadata-Flavor:Google' http://169.254.169.254/computeMetadata/v1/instance/attributes/username)"

PASSWORD="$(curl -H 'Metadata-Flavor:Google' http://169.254.169.254/computeMetadata/v1/instance/attributes/password)"

sudo sed -i "s#.*;url =.*#url = mysql://${USERNAME}:${PASSWORD}@${CLUSTER_URI}#" /etc/grafana/grafana.ini

sudo sed -i "s#.*;admin_user =.*#admin_user = ${USERNAME}#" /etc/grafana/grafana.ini

sudo sed -i "s#.*;admin_password =.*#admin_password = ${PASSWORD}#" /etc/grafana/grafana.ini

sudo service grafana-server restart

Instance group and balancer

Having rebuilt the disk image, we can finally complete our Terraform file.

We indicate that we want to use an existing disk image:

data "yandex_compute_image" "grafana_image" {

image_id = var.image_id

}

Now let's create an instance group:

resource "yandex_compute_instance_group" "grafana_group" {

name = "grafana-group"

folder_id = var.folder_id

service_account_id = var.service_account_id

instance_template {

platform_id = "standard-v1"

resources {

memory = 1

cores = 1

}

boot_disk {

mode = "READ_WRITE"

initialize_params {

image_id = data.yandex_compute_image.grafana_image.id

size = 4

}

}

network_interface {

network_id = yandex_vpc_network.grafana_network.id

subnet_ids = [yandex_vpc_subnet.subnet_a.id, yandex_vpc_subnet.subnet_b.id, yandex_vpc_subnet.subnet_c.id]

nat = "true"

}

metadata = {

mysql_cluster_uri = "c-${yandex_mdb_mysql_cluster.mysql_cluster.id}.rw.mdb.yandexcloud.net:3306/${var.dbname}"

username = var.username

password = var.password

ssh-keys = "ubuntu:${file("${var.public_key_path}")}"

}

network_settings {

type = "STANDARD"

}

}

scale_policy {

fixed_scale {

size = 6

}

}

allocation_policy {

zones = ["ru-central1-a", "ru-central1-b", "ru-central1-c"]

}

deploy_policy {

max_unavailable = 2

max_creating = 2

max_expansion = 2

max_deleting = 2

}

load_balancer {

target_group_name = "grafana-target-group"

}

}

Note how we passed mysql_cluster_uri, username and password through the metadata. The virtual machine reads them at startup and puts them into the Grafana config.
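How a machine could read these keys somewhat more defensively is sketched below: curl is told to fail on HTTP errors and to retry, since the network may not be ready right after boot. The function names attr_url and fetch_attr and the --run switch are assumptions; the base URL and header are the ones used in setup.sh.

```bash
#!/bin/bash
set -euo pipefail

METADATA_BASE="http://169.254.169.254/computeMetadata/v1/instance/attributes"

# Build the URL of one metadata attribute.
attr_url() {
  printf '%s/%s\n' "$METADATA_BASE" "$1"
}

# Fetch one attribute; --fail turns HTTP errors into a non-zero exit code,
# --retry repeats the request on transient failures.
fetch_attr() {
  curl --silent --fail --retry 5 --retry-delay 2 \
    -H 'Metadata-Flavor: Google' "$(attr_url "$1")"
}

# Only touch the network when asked to, e.g. ./fetch.sh --run on the VM.
if [ "${1:-}" = "--run" ]; then
  CLUSTER_URI="$(fetch_attr mysql_cluster_uri)"
  USERNAME="$(fetch_attr username)"
  PASSWORD="$(fetch_attr password)"
  [ -n "$CLUSTER_URI" ] && [ -n "$USERNAME" ] && [ -n "$PASSWORD" ] || {
    echo "metadata incomplete" >&2; exit 1; }
  echo "fetched settings for ${USERNAME} at ${CLUSTER_URI}"
else
  attr_url mysql_cluster_uri
fi
```

Stopping on an empty value matters here, because an empty string substituted into grafana.ini would leave Grafana with a broken database URL.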

Now for the balancer.

resource "yandex_lb_network_load_balancer" "grafana_balancer" {

name = "grafana-balancer"

listener {

name = "grafana-listener"

port = 80

target_port = 3000

external_address_spec {

ip_version = "ipv4"

}

}

attached_target_group {

target_group_id = yandex_compute_instance_group.grafana_group.load_balancer.0.target_group_id

healthcheck {

name = "healthcheck"

tcp_options {

port = 3000

}

}

}

}

A little sugar

Very little is left. After the infrastructure is deployed, you would have to go to the Grafana UI and manually add the CH cluster (whose ID you still need to find out) as a data source. But Terraform knows the cluster ID. Let's have it finish the job.

Let's add a new provider, Grafana, and give it the balancer IP as the host. All changes Terraform makes on whichever machine the balancer picks will land in MySQL, and therefore on all the other machines.

provider "grafana" {

url = "http://${[for s in yandex_lb_network_load_balancer.grafana_balancer.listener: s.external_address_spec.0.address].0}"

auth = "${var.username}:${var.password}"

}

resource "grafana_data_source" "ch_data_source" {

type = "vertamedia-clickhouse-datasource"

name = "grafana"

url = "https://c-${yandex_mdb_clickhouse_cluster.ch_cluster.id}.rw.mdb.yandexcloud.net:8443"

basic_auth_enabled = "true"

basic_auth_username = var.username

basic_auth_password = var.password

is_default = "true"

access_mode = "proxy"

}

Tidying up

Let's output the balancer IP and the ClickHouse cluster host:

output "grafana_balancer_ip_address" {

value = [for s in yandex_lb_network_load_balancer.grafana_balancer.listener: s.external_address_spec.0.address].0

}

output "clickhouse_cluster_host" {

value = "https://c-${yandex_mdb_clickhouse_cluster.ch_cluster.id}.rw.mdb.yandexcloud.net:8443"

}

Ready to run

That's it! Our configuration file is ready, and after setting the variables we can tell Terraform to bring up everything described above. The whole process took me about 15 minutes.
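A typical sequence of Terraform commands for such a run might look like the sketch below. By default it only prints the commands (DRY_RUN=1), so it can be tried without Terraform installed. The wrapper functions run and deploy and the plan file name grafana.tfplan are assumptions; the output name comes from the configuration above.

```bash
#!/bin/bash
set -euo pipefail

# DRY_RUN=1 (the default here) only prints the commands; set DRY_RUN=0 to execute them.
DRY_RUN="${DRY_RUN:-1}"

run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

deploy() {
  run terraform init                               # download the providers
  run terraform plan -out=grafana.tfplan           # preview and save the plan
  run terraform apply grafana.tfplan               # create the resources
  run terraform output grafana_balancer_ip_address # entry point of the application
}

deploy
```

Saving the plan to a file and applying that file means that what gets created is exactly what was previewed.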

At the end, you can see a beautiful message:

Apply complete! Resources: 9 added, 0 changed, 0 destroyed.

Outputs:

clickhouse_cluster_host = https://c-c9q14ipa2ngadqsbp2iq.rw.mdb.yandexcloud.net:8443

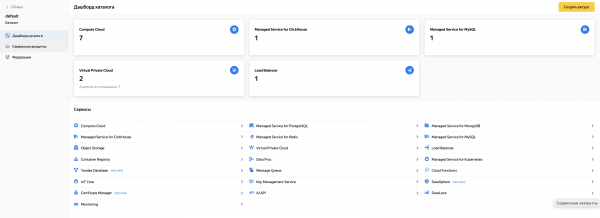

grafana_balancer_ip_address = 130.193.50.25And in the cloud, elements of the raised infrastructure will be visible:

To summarize

Now, following the Grafana example, each of you can deploy applications with a sprawling cloud architecture on the Yandex.Cloud platform. Useful tools from HashiCorp such as Packer and Terraform can help you with this. I hope someone finds this article useful 🙂

PS Below I will attach a link to the repository, where you can find ready-made recipes for Packer and Terraform, fragments of which I cited in this article.

Source: habr.com