Hello, and welcome! This is the third post in our series on deploying modern web applications on Red Hat OpenShift.

In the previous two posts, we showed how to deploy modern web applications in just a few steps, and how to use a new S2I image together with an off-the-shelf HTTP server image such as NGINX, using chained builds, for production deployment.

Today we will show how to run a development server for your application on the OpenShift platform and keep it synchronized with the local file system. We will also explain what OpenShift Pipelines is and how it can be used as an alternative to chained builds.

OpenShift as a development environment

Development workflow

As mentioned in an earlier post, a typical development workflow for modern web applications relies on a "development server" that watches local files for changes. When a change occurs, the application is rebuilt and the browser is refreshed with the updated version.

In most modern frameworks, such a "development server" is built into the framework's command-line tools.

Local example

First, let's see how this works when running an application locally. We'll use the React application from the previous posts as an example, although almost the same workflow concepts apply to all other modern frameworks.

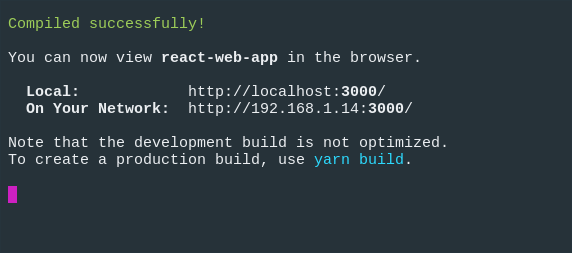

So, to start the "development server" in our React example, we'll type the following command:

$ npm run start

Then in the terminal window we will see something like this:

And our application will open in the default browser:

Now, if we make changes to the source files, the application should refresh in the browser.

OK, local development is straightforward, but how do we achieve the same thing on OpenShift?

Development server on OpenShift

If you remember, in a previous post we examined the so-called run phase of the S2I image and saw that, by default, our web application is served by the serve module.

However, if you take a closer look at the run script from that example, you'll see that it supports the $NPM_RUN environment variable, which lets you run a command of your own.

For example, we can use the nodeshift module to deploy our application:

$ npx nodeshift --deploy.env NPM_RUN="yarn start" --dockerImage=nodeshift/ubi8-s2i-web-app

Note: The example above is abbreviated to illustrate the general idea.

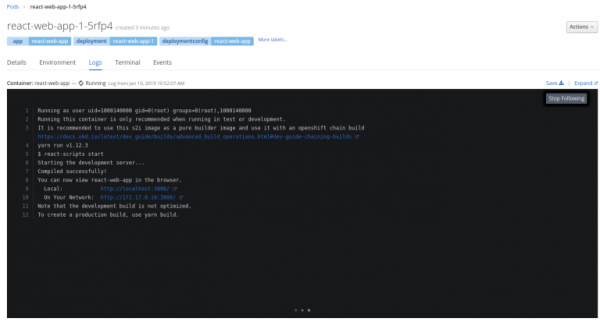

Here, we've added the NPM_RUN environment variable to our deployment. It tells the runtime to run the yarn start command, which starts the React development server inside our OpenShift pod.
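To make the effect of these flags more concrete, here is a rough sketch of the relevant part of a pod template once NPM_RUN is set and port 3000 is exposed (the port passed with --deploy.port in the full command later in this post). It is an illustration, not nodeshift's literal output: the container name is a hypothetical placeholder, and the image field is omitted.

```yaml
# Sketch only: the part of the pod template that matters for the dev server.
# "web-app" is a hypothetical container name, not necessarily what nodeshift generates.
spec:
  template:
    spec:
      containers:
        - name: web-app
          env:
            - name: NPM_RUN          # read by the S2I image's run phase
              value: "yarn start"    # start the React dev server instead of serve
          ports:
            - containerPort: 3000    # port the React dev server listens on by default
```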

If you look at the log of the running pod, you'll see something like this:

Of course, none of this is useful until we can synchronize our local code with the code on the remote server, which the development server is also watching for changes.

Synchronization of remote and local code

Fortunately, nodeshift makes synchronization easy: its watch command tracks changes for us.

So, once we've run the command that deploys the development server for our application, we can run:

$ npx nodeshift watch

This command connects to the running pod we created earlier, starts synchronizing our local files with the remote cluster, and begins watching the files on our local system for changes.

So, if we now update the src/App.js file, the system will detect the change and copy it to the remote cluster, where the development server picks it up and updates our application in the browser.

To complete the picture, let's show what these commands look like in their entirety:

$ npx nodeshift --strictSSL=false --dockerImage=nodeshift/ubi8-s2i-web-app --build.env YARN_ENABLED=true --expose --deploy.env NPM_RUN="yarn start" --deploy.port 3000

$ npx nodeshift watch --strictSSL=false

The watch command is an abstraction on top of the oc rsync command; see the oc rsync documentation to learn more about how it works.

This was an example for React, but exactly the same approach works with other frameworks: just set the NPM_RUN environment variable accordingly.

OpenShift Pipelines

Next, we'll talk about a tool like OpenShift Pipelines and how it can be used as an alternative to chained builds.

What is OpenShift Pipelines

OpenShift Pipelines is a cloud-native continuous integration and continuous delivery (CI/CD) solution for building pipelines with Tekton. Tekton is a flexible, open source, Kubernetes-native CI/CD framework that automates deployment across multiple platforms (Kubernetes, serverless, virtual machines, and so on) by abstracting away the underlying details.

Understanding this article requires some knowledge of Pipelines, so we strongly advise you to first read an introduction to OpenShift Pipelines.

Setting up the working environment

To play around with the examples in this article, you first need to set up a working environment:

- Install and configure an OpenShift 4 cluster. Our examples use CodeReady Containers (CRC) for this; see the CodeReady Containers documentation for installation instructions.

- Once the cluster is ready, install the OpenShift Pipelines Operator on it. Don't worry, it's easy; just follow the Operator's installation instructions.

- Download the Tekton CLI (tkn).

- Use the create-react-app command-line tool to create the application you will deploy (a simple React app).

- (Optional) Clone the repository to run the sample app locally with npm install and then npm start.

The application repository also contains a k8s folder with the Kubernetes/OpenShift YAML files used to deploy the application. The Tasks, ClusterTasks, Resources, and Pipelines that we will create live in a separate repository, nodeshift/webapp-pipeline-tutorial.
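As an illustration of what such a folder can contain, the Route might look something like the sketch below. The Route name matches the one queried with oc get route at the end of this post; the Service name and the target port (8080) are assumptions, so check them against the actual files in the repository.

```yaml
# Hypothetical sketch of a Route like the one kept in the k8s folder.
apiVersion: route.openshift.io/v1
kind: Route
metadata:
  name: react-pipeline-example      # the route name queried later with oc get route
spec:
  to:
    kind: Service
    name: react-pipeline-example    # assumed Service name
  port:
    targetPort: 8080                # assumed port served by the NGINX container
```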

Let's start

The first step for our example is to create a new project in the OpenShift cluster. Let's name this project webapp-pipeline and create it with the following command:

$ oc new-project webapp-pipeline

This project name will appear later in the code, so if you choose a different name, remember to edit the example code accordingly. From here on, we'll work bottom-up rather than top-down: first we'll create all the components of the pipeline, and only then the pipeline itself.

So, first of all…

Tasks

Let's create a couple of tasks that will then help deploy the application within our pipeline. The first task, apply_manifests_task, is responsible for applying the YAML for the Kubernetes resources (service, deployment, and route) located in the k8s folder of our application. The second task, update_deployment_task, is responsible for updating an already deployed image to the one created by our pipeline.

Don't worry if this isn't entirely clear yet. These tasks are essentially utilities, and we'll look at them in more detail a little later. For now, let's just create them:
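To give a feel for what these utility tasks look like, here is a minimal sketch of an apply-manifests-style Task in the same tekton.dev/v1alpha1 format used throughout this post. The CLI image and the manifest_dir parameter are assumptions for illustration; the real apply_manifests_task.yaml may be organized differently.

```yaml
# Sketch of an apply-manifests-style Task (tekton.dev/v1alpha1).
# The image and the manifest_dir parameter are assumptions, not taken from the tutorial repo.
apiVersion: tekton.dev/v1alpha1
kind: Task
metadata:
  name: apply-manifests
spec:
  inputs:
    resources:
      - name: source                # the Git repository that contains the k8s folder
        type: git
    params:
      - name: manifest_dir
        description: Directory in the source repository that holds the YAML manifests
        type: string
        default: k8s
  steps:
    - name: apply
      image: quay.io/openshift/origin-cli:latest   # assumed: any image that ships the oc client
      workingDir: /workspace/source                 # where Tekton checks out a git input named "source"
      command: ["/bin/bash", "-c"]
      args:
        - oc apply -f $(inputs.params.manifest_dir)
```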

$ oc create -f https://raw.githubusercontent.com/nodeshift/webapp-pipeline-tutorial/master/tasks/update_deployment_task.yaml

$ oc create -f https://raw.githubusercontent.com/nodeshift/webapp-pipeline-tutorial/master/tasks/apply_manifests_task.yaml

Then, using the tkn CLI command, check that the tasks have been created:

$ tkn task ls
NAME                AGE
apply-manifests     1 minute ago
update-deployment   1 minute ago

Note: These are local tasks for your current project.

Cluster tasks

Cluster tasks are basically the same as regular tasks: a reusable collection of steps that are combined in some way when a particular task is run. The difference is that a cluster task is available everywhere in the cluster. To list the cluster tasks that are created automatically when the Pipelines Operator is installed, use the tkn CLI again:

$ tkn clustertask ls
NAME                      AGE
buildah                   1 day ago
buildah-v0-10-0           1 day ago
jib-maven                 1 day ago
kn                        1 day ago
maven                     1 day ago
openshift-client          1 day ago
openshift-client-v0-10-0  1 day ago
s2i                       1 day ago
s2i-go                    1 day ago
s2i-go-v0-10-0            1 day ago
s2i-java-11               1 day ago
s2i-java-11-v0-10-0       1 day ago
s2i-java-8                1 day ago
s2i-java-8-v0-10-0        1 day ago
s2i-nodejs                1 day ago
s2i-nodejs-v0-10-0        1 day ago
s2i-perl                  1 day ago
s2i-perl-v0-10-0          1 day ago
s2i-php                   1 day ago
s2i-php-v0-10-0           1 day ago
s2i-python-3              1 day ago
s2i-python-3-v0-10-0      1 day ago
s2i-ruby                  1 day ago
s2i-ruby-v0-10-0          1 day ago
s2i-v0-10-0               1 day ago

Now let's create two cluster tasks. The first will build an S2I image and push it to the internal OpenShift registry; the second will build our NGINX-based image, using the application we have already built as its content.

Building and pushing the image

When creating the first task, we will repeat what we did in the previous post about chained builds. Recall that we used the S2I image (ubi8-s2i-web-app) to "build" our application and ended up with an image stored in the internal OpenShift registry. We will now use this S2I web application image to generate a Dockerfile for our application, and then use Buildah to actually build the resulting image and push it to the internal OpenShift registry, since this is exactly what OpenShift does when you deploy your applications using nodeshift.

How do we know all this, you ask? We took an existing S2I task definition, copied it, and adapted it for our needs.
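The generate, build, and push flow described above can be sketched as a ClusterTask along these lines. This is a condensed illustration under several assumptions (the s2i and Buildah images, the volume names, the hypothetical s2i-web-app-sketch name, and passing OUTPUT_DIR through the s2i --env flag), not the contents of s2i-web-app-task.yaml.

```yaml
# Sketch of the generate/build/push pattern (tekton.dev/v1alpha1).
# Images, volume names, and the task name are assumptions; the real task may differ.
apiVersion: tekton.dev/v1alpha1
kind: ClusterTask
metadata:
  name: s2i-web-app-sketch            # hypothetical name, so it does not clash with s2i-web-app
spec:
  inputs:
    resources:
      - name: source
        type: git
    params:
      - name: OUTPUT_DIR
        description: The location of the build output directory
        default: build
      - name: TLSVERIFY
        description: Verify the TLS certificate of the registry
        default: "true"
  outputs:
    resources:
      - name: image
        type: image
  steps:
    # 1. Let the S2I builder image generate a Dockerfile instead of building directly.
    - name: generate
      image: quay.io/openshift-pipeline/s2i       # assumed image that provides the s2i CLI
      workingDir: /workspace/source
      command: ["s2i", "build", ".", "nodeshift/ubi8-s2i-web-app",
                "--env", "OUTPUT_DIR=$(inputs.params.OUTPUT_DIR)",
                "--as-dockerfile", "/gen-source/Dockerfile.gen"]
      volumeMounts:
        - name: gen-source
          mountPath: /gen-source
    # 2. Build an image from the generated Dockerfile with Buildah.
    - name: build
      image: quay.io/buildah/stable
      workingDir: /gen-source
      command: ["buildah", "bud", "--tls-verify=$(inputs.params.TLSVERIFY)",
                "-f", "/gen-source/Dockerfile.gen", "-t", "$(outputs.resources.image.url)", "."]
      securityContext:
        privileged: true
      volumeMounts:
        - name: varlibcontainers
          mountPath: /var/lib/containers
        - name: gen-source
          mountPath: /gen-source
    # 3. Push the image to the registry URL held by the output image resource.
    - name: push
      image: quay.io/buildah/stable
      command: ["buildah", "push", "--tls-verify=$(inputs.params.TLSVERIFY)",
                "$(outputs.resources.image.url)", "docker://$(outputs.resources.image.url)"]
      securityContext:
        privileged: true
      volumeMounts:
        - name: varlibcontainers
          mountPath: /var/lib/containers
  volumes:
    - name: varlibcontainers
      emptyDir: {}
    - name: gen-source
      emptyDir: {}
```

Sharing /var/lib/containers between the build and push steps is what lets the push step find the image that the build step just created.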

So, now we create the s2i-web-app cluster task:

$ oc create -f https://raw.githubusercontent.com/nodeshift/webapp-pipeline-tutorial/master/clustertasks/s2i-web-app-task.yaml

We won't go through this definition in detail; let's just look at the OUTPUT_DIR parameter:

params:
  - name: OUTPUT_DIR
    description: The location of the build output directory
    default: build

By default, this parameter is set to build, which is where React places the built content. Other frameworks use different paths; Ember, for example, uses dist. The output of our first cluster task will be an image containing the built HTML, JavaScript, and CSS.
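Because OUTPUT_DIR is a parameter, a pipeline can override it without touching the cluster task. A hypothetical task entry for an Ember application, which writes its build output to dist, might look like this:

```yaml
# Hypothetical pipeline task entry: same cluster task, Ember's output directory.
- name: build-web-application
  taskRef:
    name: s2i-web-app
    kind: ClusterTask
  params:
    - name: OUTPUT_DIR
      value: dist        # overrides the default "build"
```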

Building an NGINX-based image

Our second cluster task should build an NGINX-based image for us, using the content of the application we have already built. In effect, this is the part of the previous post in which we looked at chained builds.

To do this, just as above, we create a webapp-build-runtime cluster task:

$ oc create -f https://raw.githubusercontent.com/nodeshift/webapp-pipeline-tutorial/master/clustertasks/webapp-build-runtime-task.yaml

If you look at the code for these cluster tasks, you'll see that they don't specify the Git repository we're working with or the names of the images we're creating. They only declare what kind of input we pass in (a Git repository or an image) and the image to which the final result should be written. That is why these cluster tasks can be reused with other applications.

Which brings us neatly to the next point...

Resources

Since, as we just said, cluster tasks should be as generic as possible, we need to create resources that will serve as inputs (the Git repository) and outputs (the final images). The first resource we need is the Git repository where our application lives:

# This resource is the location of the git repo with the web application source
apiVersion: tekton.dev/v1alpha1
kind: PipelineResource
metadata:
  name: web-application-repo
spec:
  type: git
  params:
    - name: url
      value: https://github.com/nodeshift-starters/react-pipeline-example
    - name: revision
      value: master

Here, the PipelineResource is of type git. The url key in the params section points to a specific repository, and the revision key selects the master branch (this is optional, but we include it for completeness).

Next, we need an image resource in which the results of the s2i-web-app task will be stored:

# This resource is the result of running "npm run build", the resulting built files will be located in /opt/app-root/output
apiVersion: tekton.dev/v1alpha1
kind: PipelineResource
metadata:
  name: built-web-application-image
spec:
  type: image
  params:
    - name: url
      value: image-registry.openshift-image-registry.svc:5000/webapp-pipeline/built-web-application:latest

Here, the PipelineResource is of type image, and the value of the url parameter points to the internal OpenShift Image Registry, specifically the one in the webapp-pipeline namespace. Remember to change this setting if you are using a different namespace.

And finally, the last resource we need will also be of type image, and this will be the final NGINX image, which will then be used during deployment:

# This resource is the image that will be just the static html, css, js files being run with nginx
apiVersion: tekton.dev/v1alpha1
kind: PipelineResource
metadata:
  name: runtime-web-application-image
spec:
  type: image
  params:
    - name: url
      value: image-registry.openshift-image-registry.svc:5000/webapp-pipeline/runtime-web-application:latest

Again, note that this resource stores the image in the internal OpenShift registry in the webapp-pipeline namespace.

To create all these resources at once, we use the create command:

$ oc create -f https://raw.githubusercontent.com/nodeshift/webapp-pipeline-tutorial/master/resources/resource.yaml

To verify that the resources have been created, run:

$ tkn resource ls

Pipeline

Now that we have all the necessary components, we will assemble a pipeline from them by creating it with the following command:

$ oc create -f https://raw.githubusercontent.com/nodeshift/webapp-pipeline-tutorial/master/pipelines/build-and-deploy-react.yaml

Before running this command, let's look at what the pipeline definition contains. First comes the name:

apiVersion: tekton.dev/v1alpha1
kind: Pipeline
metadata:
  name: build-and-deploy-react

Then, in the spec section, we see an indication of the resources that we created earlier:

spec:
  resources:
    - name: web-application-repo
      type: git
    - name: built-web-application-image
      type: image
    - name: runtime-web-application-image
      type: image

Next, we define the tasks our pipeline will run. First, it must execute the s2i-web-app cluster task we created earlier:

tasks:
  - name: build-web-application
    taskRef:
      name: s2i-web-app
      kind: ClusterTask

This task takes an input (the git resource) and an output (the built-web-application-image resource). We also pass it a parameter that disables TLS verification, since we are using self-signed certificates:

resources:
  inputs:
    - name: source
      resource: web-application-repo
  outputs:
    - name: image
      resource: built-web-application-image
params:
  - name: TLSVERIFY
    value: "false"

The next task is almost the same, except that it calls the webapp-build-runtime cluster task we created earlier:

- name: build-runtime-image
  taskRef:
    name: webapp-build-runtime
    kind: ClusterTask

As with the previous task, we pass in a resource, but this time it is built-web-application-image (the output of our previous task). As the output, we again specify an image. Since this task must run after the previous one, we add the runAfter field:

resources:
  inputs:
    - name: image
      resource: built-web-application-image
  outputs:
    - name: image
      resource: runtime-web-application-image
params:
  - name: TLSVERIFY
    value: "false"
runAfter:
  - build-web-application

The next two tasks are responsible for applying the service, route, and deployment YAML files that live in the k8s directory of our web application, and for updating that deployment when new images are created. These are the two tasks we created at the beginning of the article.
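For completeness, the two remaining entries in the tasks list could look roughly like the sketch below. The task names match the tasks created earlier; the input resource names, the deployment parameter, and its value are assumptions about how those tasks are defined. Note that taskRef omits kind here, which makes Tekton look up a namespaced Task rather than a ClusterTask.

```yaml
# Sketch of the last two pipeline tasks; input names and the "deployment" param are assumptions.
- name: apply-manifests
  taskRef:
    name: apply-manifests                 # namespaced Task, so no "kind: ClusterTask"
  resources:
    inputs:
      - name: source
        resource: web-application-repo   # the k8s folder comes from the app repository
  runAfter:
    - build-runtime-image
- name: update-deployment
  taskRef:
    name: update-deployment
  resources:
    inputs:
      - name: image
        resource: runtime-web-application-image
  params:
    - name: deployment
      value: react-pipeline-example       # assumed Deployment name
  runAfter:
    - apply-manifests
```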

Running the pipeline

Now that all the parts of our pipeline have been created, let's start it with the following command:

$ tkn pipeline start build-and-deploy-react

At this point, the command runs interactively, and you need to choose the appropriate resource at each prompt: for the git resource, select web-application-repo; for the first image resource, built-web-application-image; and finally, for the second image resource, runtime-web-application-image:

? Choose the git resource to use for web-application-repo: web-application-repo (https://github.com/nodeshift-starters/react-pipeline-example)
? Choose the image resource to use for built-web-application-image: built-web-application-image (image-registry.openshift-image-registry.svc:5000/webapp-pipeline/built-web-application:latest)
? Choose the image resource to use for runtime-web-application-image: runtime-web-application-image (image-registry.openshift-image-registry.svc:5000/webapp-pipeline/runtime-web-application:latest)
Pipelinerun started: build-and-deploy-react-run-4xwsr
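If you prefer to skip the interactive prompts, the same resource bindings can be written declaratively as a PipelineRun. This is a sketch in the v1alpha1 format used in this post; because it uses generateName, create it with oc create -f rather than oc apply.

```yaml
# PipelineRun sketch that binds the three PipelineResources without prompts.
apiVersion: tekton.dev/v1alpha1
kind: PipelineRun
metadata:
  generateName: build-and-deploy-react-run-   # each run gets a unique suffix
spec:
  pipelineRef:
    name: build-and-deploy-react
  resources:
    - name: web-application-repo              # resource name declared in the Pipeline
      resourceRef:
        name: web-application-repo            # PipelineResource created earlier
    - name: built-web-application-image
      resourceRef:
        name: built-web-application-image
    - name: runtime-web-application-image
      resourceRef:
        name: runtime-web-application-image
```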

Now check the status of the pipeline with the following command:

$ tkn pipeline logs -f

Once the pipeline has finished and the application is deployed, get the published route with the following command:

$ oc get route react-pipeline-example --template='http://{{.spec.host}}'

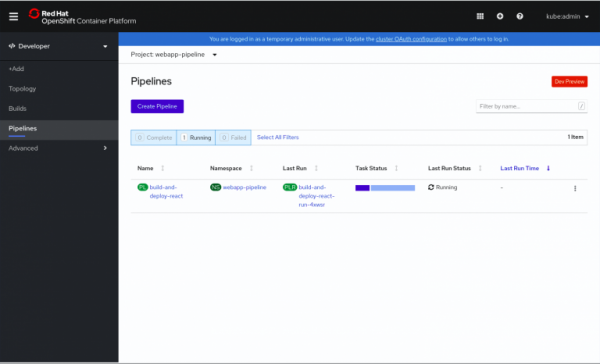

For a better overview, you can view our pipeline in the Developer perspective of the web console, in the Pipelines section, as shown in Fig. 1.

Fig. 1. Overview of running pipelines.

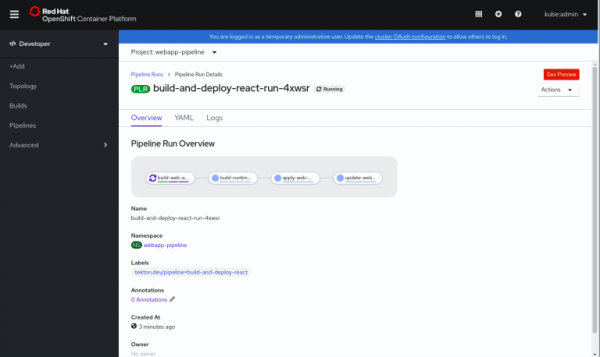

Clicking on a running pipeline displays additional details, as shown in Figure 2.

Fig. 2. Additional information about the pipeline.

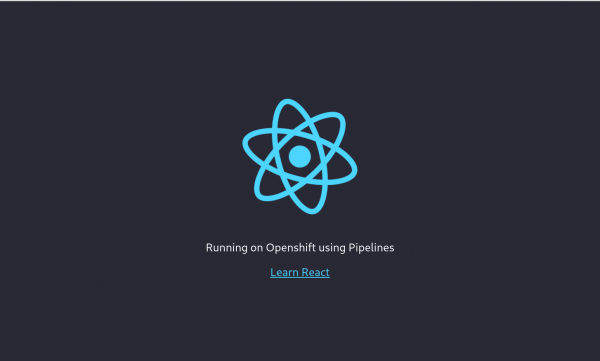

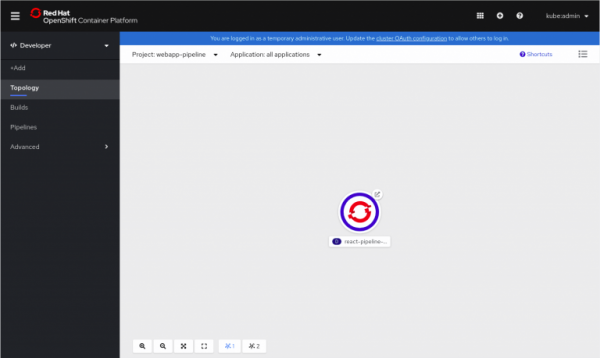

After viewing the details, you can see the running application in the Topology view, as shown in Fig. 3.

Fig. 3. Running pod.

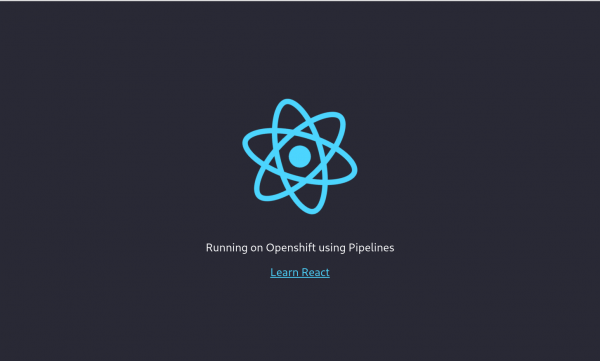

Clicking on the circle in the upper right corner of the icon opens our application, as shown in Figure 4.

Fig. 4. A running React application.

Conclusion

So, we have shown how to run a development server for your application on OpenShift and keep it synchronized with the local file system. We also looked at how to emulate the chained-build pattern using OpenShift Pipelines. All the example code from this article can be found in the accompanying repository.


Source: habr.com