These days, hardly anyone asks why service metrics need to be collected. The next logical step is to set up alerting on the collected metrics, so that any deviation in the data is reported to a channel convenient for you (email, Slack, Telegram). At our online hotel booking service, all service metrics are written to InfluxDB and displayed in Grafana, where basic alerting is also configured. For tasks like “calculate something and compare against it”, we use Kapacitor.

Kapacitor is the part of the TICK stack that processes metrics from InfluxDB. It can join several measurements, calculate something useful from the resulting data, write the result back to InfluxDB, and send an alert to Slack, Telegram, or email.

The whole stack has good, detailed documentation, but there are always useful things that the manuals do not state explicitly. In this article, I have collected a number of such non-obvious tips (the basic TICKscript syntax is covered in the documentation) and show how they can be applied, using one of our own problems as an example.

Let's go!

float & int, calculation errors

A completely standard problem, solved with a cast:

var alert_float = 5.0

var alert_int = 10

data|eval(lambda: float("value") > alert_float OR float("value") < float(alert_int))

Using default()

If a tag or field is missing, calculations will fail with errors; default() sets a fallback value:

|default()

.tag('status', 'empty')

.field('value', 0)

fill in join (inner vs outer)

By default, join will discard points where there is no data (inner).

With fill('null'), an outer join is performed, after which you need to apply default() to fill in the empty values:

var data = res1

|join(res2)

.as('res1', 'res2')

.fill('null')

|default()

.field('res1.value', 0.0)

.field('res2.value', 100.0)

There is one more nuance here. If one of the series in the example above (res1 or res2) is empty, the resulting series (data) will also be empty. There are several GitHub issues on this topic; we are waiting for fixes and suffering a little in the meantime.
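To make the difference between the two join modes concrete, here is a plain-Python sketch. It is not Kapacitor code: series are modelled as dicts from timestamp to value, and the function names and sample numbers are invented for illustration. It also does not reproduce the empty-series nuance described above.

```python
def inner_join(res1, res2):
    """Keep only timestamps present in both series (the default join behaviour)."""
    return {t: (res1[t], res2[t]) for t in sorted(res1.keys() & res2.keys())}


def outer_join_with_defaults(res1, res2, default1=0.0, default2=100.0):
    """Keep every timestamp and substitute defaults for missing values,
    mimicking fill('null') followed by default()."""
    joined = {}
    for t in sorted(res1.keys() | res2.keys()):
        joined[t] = (res1.get(t, default1), res2.get(t, default2))
    return joined


# Hypothetical sample data: timestamp -> value
res1 = {1: 5.0, 2: 7.0}
res2 = {2: 90.0, 3: 80.0}

print(inner_join(res1, res2))                # {2: (7.0, 90.0)}
print(outer_join_with_defaults(res1, res2))  # {1: (5.0, 100.0), 2: (7.0, 90.0), 3: (0.0, 80.0)}
```

With the inner join, timestamps 1 and 3 disappear; with the outer join plus defaults, every timestamp survives and the gaps get the same 0.0 and 100.0 used in the TICKscript example.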

Using conditions in calculations (if in lambda)

|eval(lambda: if("value" > 0, true, false))

Taking the last five minutes from a longer period in the pipeline

For example, you need to compare the values of the last five minutes with the previous week. You can fetch the data in two separate batches, or extract part of the data from a larger period:

|where(lambda: duration((unixNano(now()) - unixNano("time"))/1000, 1u) < 5m)

An alternative for the last five minutes is BarrierNode, which cuts off the data before the specified time:

|barrier()

.period(5m)
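For readers who find the where() expression hard to parse, the same filter can be sketched in plain Python. This is only an illustration of the logic (keep points whose age is strictly less than five minutes); the function name and the sample timestamps are invented.

```python
from datetime import datetime, timedelta, timezone


def last_window(points, now, window=timedelta(minutes=5)):
    """Return the (timestamp, value) pairs that are less than `window` older than `now`."""
    return [(ts, value) for ts, value in points if now - ts < window]


# Hypothetical sample data, oldest first
now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
points = [
    (now - timedelta(minutes=30), 1.0),
    (now - timedelta(minutes=4), 2.0),
    (now - timedelta(minutes=1), 3.0),
]

recent = last_window(points, now)
print([value for _, value in recent])  # [2.0, 3.0]
```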

Examples of using Go templates in message

Templates follow the format of Go's text/template package. Below are a few common tasks.

if else

Keep things tidy and avoid alarming people with unnecessary text:

|alert()

...

.message(

'{{ if eq .Level "OK" }}It is ok now{{ else }}Chief, everything is broken{{end}}'

)

Two digits after the decimal point in message

Improving the readability of the message:

|alert()

...

.message(

'now value is {{ index .Fields "value" | printf "%0.2f" }}'

)

Expanding variables in message

We put more information into the message to answer the question “Why is it yelling?”

var warnAlert = 10

|alert()

...

.message(

'Today value less than '+string(warnAlert)+'%'

)

Alert unique ID

A necessary thing when there is more than one group in the data, otherwise only one alert will be generated:

|alert()

...

.id('{{ index .Tags "myname" }}/{{ index .Tags "myfield" }}')

Custom handlers

The long list of handlers includes exec, which runs your own script and passes it the alert data on stdin. This leaves plenty of room for creativity.

One of our custom handlers is a small Python script that sends notifications to Slack.

At first, we wanted to include an image from an authorization-protected Grafana in the message. Later, we wanted OK to be posted in the thread of the previous alert from the same group rather than as a separate message. Later still, we added the most common error of the last X minutes to the message.

A separate topic is communication with other services and any actions initiated by an alert (only if your monitoring works well enough).

An example of a handler description, where slack_handler.py is our self-written script:

topic: slack_graph

id: slack_graph.alert

match: level() != INFO AND changed() == TRUE

kind: exec

options:

  prog: /sbin/slack_handler.py

  args: ["-c", "CHANNELID", "--graph", "--search"]
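For orientation, here is a minimal sketch of what a script of this kind might look like. It is not our slack_handler.py: the meaning given to -c, --graph and --search is guessed from their names, and the script only formats a text line instead of calling the Slack API. It assumes that the alert arrives on stdin as a JSON object with fields such as id, level and message.

```python
import argparse
import io
import json
import sys


def build_parser():
    # The flags mirror the handler's args; their semantics here are assumptions.
    parser = argparse.ArgumentParser(description="Sketch of an exec alert handler")
    parser.add_argument("-c", "--channel", required=True, help="target channel ID")
    parser.add_argument("--graph", action="store_true", help="attach a graph (not implemented)")
    parser.add_argument("--search", action="store_true", help="find the previous alert (not implemented)")
    return parser


def format_alert(alert, channel):
    """Turn the alert JSON object into a one-line text for the given channel."""
    level = alert.get("level", "UNKNOWN")
    alert_id = alert.get("id", "")
    message = alert.get("message", "")
    return f"[{channel}] {level} {alert_id}: {message}"


def main(argv=None, stdin=sys.stdin):
    args = build_parser().parse_args(argv)
    alert = json.load(stdin)  # the alert data is read from stdin
    print(format_alert(alert, args.channel))
    return 0


# Demonstration with a hypothetical alert instead of real stdin
sample = io.StringIO('{"id": "supplier/channel", "level": "WARNING", "message": "rate dropped"}')
main(["-c", "CHANNELID"], stdin=sample)
```

When deployed as a real handler, the demonstration at the bottom would be replaced by a plain call such as sys.exit(main()), so that the script reads the actual stdin and command-line arguments.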

How to debug?

Logging option

|log()

.level('error')

.prefix('something')

Watch (cli): kapacitor -url :9092 logs lvl=error

Variant with httpOut

Shows the data in the current pipeline:

|httpOut('something')

Watch (get): :9092/kapacitor/v1/tasks/task_name/something

Execution scheme

- Each task returns an execution tree with useful numbers in DOT (Graphviz) format.

- Take the DOT block.

- Paste it into a Graphviz viewer.

Where else you can stumble

timestamp in influxdb on writeback

For example, we set up an alert on the number of requests per hour (groupBy(1h)) and want to record each alert in InfluxDB, so that the problem shows up nicely on a Grafana graph.

influxDBOut() writes the point with the time value taken from the alert, so the point appears on the chart earlier or later than the alert actually arrived.

When accuracy matters, we work around this by calling a custom handler that writes the data to InfluxDB with the current timestamp.
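The core of such a handler is building a point stamped with the current time. Below is a hedged sketch, not our actual handler: it only formats a line in InfluxDB line protocol and leaves out sending it to the database. The function names are invented, the sample tag and field values are hypothetical, and the escaping covers only commas, equals signs and spaces.

```python
import time


def escape(text):
    """Escape commas, equals signs and spaces in tag keys, tag values and field keys."""
    return text.replace(",", "\\,").replace("=", "\\=").replace(" ", "\\ ")


def format_field(value):
    """Render a field value: booleans, integers (i suffix), floats, quoted strings."""
    if isinstance(value, bool):  # bool must be checked before int
        return "true" if value else "false"
    if isinstance(value, int):
        return f"{value}i"
    if isinstance(value, float):
        return repr(value)
    escaped = str(value).replace("\\", "\\\\").replace('"', '\\"')
    return f'"{escaped}"'


def to_line(measurement, tags, fields, ts_ns=None):
    """Build one line-protocol line; by default it is stamped with the current time."""
    if ts_ns is None:
        ts_ns = time.time_ns()  # current time, not the alert's own timestamp
    name = measurement.replace(",", "\\,").replace(" ", "\\ ")
    tag_part = "".join(f",{escape(k)}={escape(v)}" for k, v in sorted(tags.items()))
    field_part = ",".join(f"{escape(k)}={format_field(v)}" for k, v in fields.items())
    return f"{name}{tag_part} {field_part} {ts_ns}"


# A fixed timestamp is passed here only to keep the example reproducible
print(to_line(
    "alerts",
    {"channel": "ch 1", "alertName": "requests.rate"},
    {"value": 82.5, "level": "WARNING"},
    ts_ns=1700000000000000000,
))
```

In the handler itself, ts_ns would simply be omitted so that the point is written at the moment the alert is handled.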

docker, build and deploy

At startup, kapacitor can load tasks, templates, and handlers from the directory specified in the config, in the [load] block.

To create a task correctly, you need the following things:

- File name - expands to id/name of the script

- Type - stream/batch

- dbrp - a keyword specifying the database and retention policy the script works with (dbrp "supplier"."autogen")

If a batch task has no dbrp line, the entire service refuses to start and honestly says so in the log.

In Chronograf, on the contrary, this line must not be present: the interface does not accept it and returns an error.

A hack for the container build: the Dockerfile exits with -1 if any script contains a line matching //.+dbrp, which makes the reason for a failed build immediately clear.
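The check itself is tiny. Here is a sketch of it in Python, under the assumption that the build step simply scans each script's text; the function name and the sample scripts are invented, and only the //.+dbrp pattern comes from our setup.

```python
import re

# The pattern from the text: a comment marker followed by at least one character, then dbrp
COMMENTED_DBRP = re.compile(r"//.+dbrp")


def commented_dbrp_lines(script_text):
    """Return the 1-based numbers of lines that match the pattern."""
    return [
        number
        for number, line in enumerate(script_text.splitlines(), start=1)
        if COMMENTED_DBRP.search(line)
    ]


# Hypothetical script contents
good = 'dbrp "supplier"."autogen"\nvar every = 1m\n'
bad = '// TODO dbrp "supplier"."autogen"\nvar every = 1m\n'

print(commented_dbrp_lines(good))  # []
print(commented_dbrp_lines(bad))   # [1]
```

A build step could run this over every file in the load directory and exit with a non-zero code as soon as the returned list is not empty.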

join one to many

An example task: you need to take the 95th percentile of the service uptime for a week, compare every minute of the last 10 with this value.

You cannot do a one-to-many join: last/mean/median over a group of points turns the node into a stream, and the error “cannot add child mismatched edges: batch -> stream” is returned.

The result of a batch also cannot be substituted as a variable into a lambda expression.

One option is to save the required numbers from the first batch to a file via a UDF and load this file via sideload.

What problem were we solving?

We have about 100 hotel suppliers, and each of them can have several connections; let's call each one a channel. There are about 300 such channels, and any of them can go down. Of all the recorded metrics, we will monitor the error rate (requests and errors).

Why not Grafana?

Error alerts configured in Grafana have several drawbacks. Some are critical, and some can be overlooked, depending on the situation.

Grafana cannot combine calculations across measurements with alerting, but we need a rate: (requests - errors) / requests.

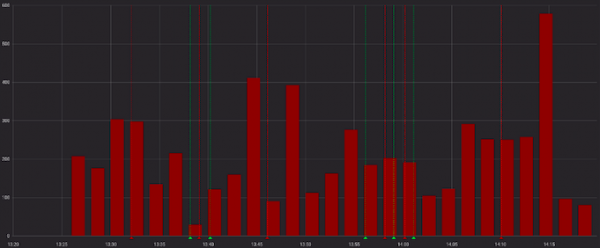

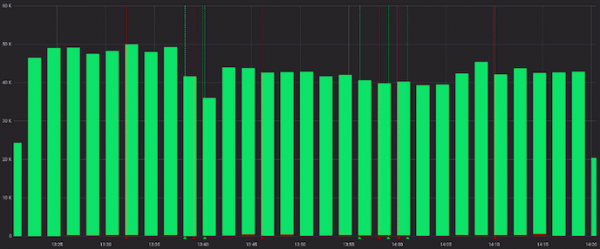

The errors look alarming:

And less alarming when viewed alongside successful requests:

Okay, we can pre-calculate the rate in the service before it reaches Grafana, and in some cases this will work. But not in ours, because each channel has its own “normal” ratio, while alerts work on static thresholds (we pick them by eye and change them if the alert fires too often).

These are examples of "normal" for different channels:

Let's ignore the previous point and assume that all suppliers have a similar "normal" picture. Is everything fine now, and can we get by with Grafana alerts?

We can, but we really don't want to, because we would have to choose one of two options:

a) make many graphs, one per channel (and painfully maintain them)

b) keep one graph with all channels (and get lost in the colorful lines and customized alerts)

How did we do it?

Again, there is a good starting example in the documentation, which you can look at or use as a basis for similar tasks.

What we did in the end:

- join two series over a few hours, grouped by channel;

- fill in the series by group where there was no data;

- compare the median of the last 10 minutes with the previous data;

- raise an alert if something is found;

- write the calculated rates and the alerts that occurred to InfluxDB;

- send a useful message to Slack.
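The comparison at the heart of these steps can be sketched in plain Python for a single channel. This is an illustration of the logic only, not the TICKscript we run: the function names and the sample data are invented, the thresholds are example values, and the guard against zero requests is an addition of the sketch.

```python
from statistics import median


def success_rate(requests, errors):
    """Rate in percent: (requests - errors) / requests, or 100 when there were no errors."""
    if errors > 0 and requests > 0:  # the requests > 0 guard is an addition of this sketch
        return 100.0 * (requests - errors) / requests
    return 100.0


def check_channel(per_minute, recent_minutes=10, warn_drop=15.0):
    """per_minute: list of (requests, errors) tuples, one per minute, oldest first.

    Returns (median over the whole period, median over the recent minutes, warn flag).
    """
    rates = [success_rate(requests, errors) for requests, errors in per_minute]
    prev_median = median(rates)
    today_median = median(rates[-recent_minutes:])
    return prev_median, today_median, (prev_median - today_median) > warn_drop


# Hypothetical channel: 50 healthy minutes followed by 10 bad ones
history = [(100, 1)] * 50 + [(100, 40)] * 10
print(check_channel(history))  # (99.0, 60.0, True)
```

Comparing medians rather than raw values keeps a single bad minute from triggering the alert, and comparing each channel with its own history avoids static thresholds per channel.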

In my opinion, we achieved everything we wanted from the result as elegantly as possible (and even a little more, thanks to custom handlers).

The resulting script can be viewed on github.com.

An example of the resulting code:

dbrp "supplier"."autogen"

var name = 'requests.rate'

var grafana_dash = 'pczpmYZWU/mydashboard'

var grafana_panel = '26'

var period = 8h

var todayPeriod = 10m

var every = 1m

var warnAlert = 15

var warnReset = 5

var reqQuery = 'SELECT sum("count") AS value FROM "supplier"."autogen"."requests"'

var errQuery = 'SELECT sum("count") AS value FROM "supplier"."autogen"."errors"'

var prevErr = batch

|query(errQuery)

.period(period)

.every(every)

.groupBy(1m, 'channel', 'supplier')

var prevReq = batch

|query(reqQuery)

.period(period)

.every(every)

.groupBy(1m, 'channel', 'supplier')

var rates = prevReq

|join(prevErr)

.as('req', 'err')

.tolerance(1m)

.fill('null')

// fill in values with zeros if there were none

|default()

.field('err.value', 0.0)

.field('req.value', 0.0)

// if in lambda: calculate the rate only if there were errors

|eval(lambda: if("err.value" > 0, 100.0 * (float("req.value") - float("err.value")) / float("req.value"), 100.0))

.as('rate')

// write the calculated values to InfluxDB

rates

|influxDBOut()

.quiet()

.create()

.database('kapacitor')

.retentionPolicy('autogen')

.measurement('rates')

// select the data for the last 10 minutes and calculate the median

var todayRate = rates

|where(lambda: duration((unixNano(now()) - unixNano("time")) / 1000, 1u) < todayPeriod)

|median('rate')

.as('median')

var prevRate = rates

|median('rate')

.as('median')

var joined = todayRate

|join(prevRate)

.as('today', 'prev')

|httpOut('join')

var trigger = joined

|alert()

.warn(lambda: ("prev.median" - "today.median") > warnAlert)

.warnReset(lambda: ("prev.median" - "today.median") < warnReset)

.flapping(0.25, 0.5)

.stateChangesOnly()

// build a link to the Grafana dashboard graph into the message

.message(

'{{ .Level }}: {{ index .Tags "channel" }} err/req ratio ({{ index .Tags "supplier" }})

{{ if eq .Level "OK" }}It is ok now{{ else }}

'+string(todayPeriod)+' median is {{ index .Fields "today.median" | printf "%0.2f" }}%, by previous '+string(period)+' is {{ index .Fields "prev.median" | printf "%0.2f" }}%{{ end }}

http://grafana.ostrovok.in/d/'+string(grafana_dash)+

'?var-supplier={{ index .Tags "supplier" }}&var-channel={{ index .Tags "channel" }}&panelId='+string(grafana_panel)+'&fullscreen&tz=UTC%2B03%3A00'

)

.id('{{ index .Tags "name" }}/{{ index .Tags "channel" }}')

.levelTag('level')

.messageField('message')

.durationField('duration')

.topic('slack_graph')

// duplicate "today.median" as "value" and also write the other alert fields to InfluxDB (keep)

trigger

|eval(lambda: "today.median")

.as('value')

.keep()

|influxDBOut()

.quiet()

.create()

.database('kapacitor')

.retentionPolicy('autogen')

.measurement('alerts')

.tag('alertName', name)

And what is the conclusion?

Kapacitor is great at alerting with lots of groupings, performing additional calculations on already recorded metrics, performing custom actions, and running scripts (UDF).

The entry threshold is not very high. Try it if Grafana or other tools do not fully meet your needs.

Source: habr.com