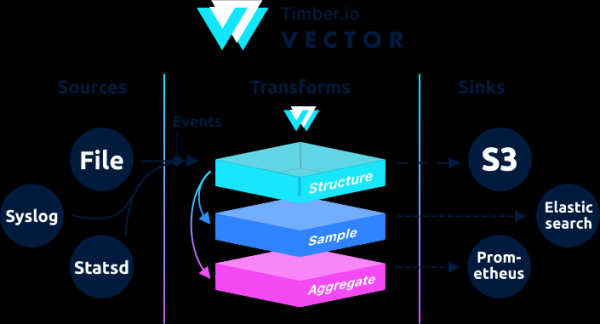

Vector is designed to collect, transform and send log data, metrics and events.


Written in Rust, it is characterized by high performance and low RAM consumption compared to its analogues. In addition, much attention is paid to features related to correctness, in particular the ability to save unsent events to a buffer on disk and to rotate files.

Architecturally, Vector is an event router that receives messages from one or more sources, optionally applies transforms to these messages, and sends them to one or more sinks.

Vector is a replacement for filebeat and logstash: it can act in both roles (receiving and sending logs).

Whereas in Logstash the chain is built as input → filter → output, in Vector it is sources → transforms → sinks.

Examples can be found in the documentation.
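To make the sources → transforms → sinks model concrete, here is a minimal Python sketch of the idea. It is not Vector's implementation: `run_pipeline`, `add_node_name` and the two list-based sinks are hypothetical names invented for this illustration.

```python
from typing import Callable, Dict, Iterable, List

Event = Dict[str, object]
Transform = Callable[[Event], Event]
Sink = Callable[[Event], None]


def run_pipeline(sources: Iterable[Iterable[Event]],
                 transforms: List[Transform],
                 sinks: List[Sink]) -> None:
    """Pass every event from every source through all transforms, then fan it out to every sink."""
    for source in sources:
        for event in source:
            for transform in transforms:
                event = transform(event)
            for sink in sinks:
                sink(dict(event))  # each sink receives its own copy


def add_node_name(event: Event) -> Event:
    """Hypothetical transform: tag the event with the node that produced it."""
    return {**event, "node_name": "nginx-vector"}


# Hypothetical stand-ins: one source (a list of events) and two sinks (plain lists).
access_log: List[Event] = [{"message": "GET / HTTP/1.0"}]
clickhouse_rows: List[Event] = []
elasticsearch_docs: List[Event] = []

run_pipeline([access_log], [add_node_name],
             [clickhouse_rows.append, elasticsearch_docs.append])
print(clickhouse_rows)
```

The same event reaches both sinks, which mirrors the setup in this article where one stream of nginx logs is written to Clickhouse and to Elasticsearch.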

This guide is a revised version of an earlier guide. The original guide includes geoip processing. When I tested geoip from an internal network, vector returned an error.

Aug 05 06:25:31.889 DEBUG transform{name=nginx_parse_rename_fields type=rename_fields}: vector::transforms::rename_fields: Field did not exist field="geoip.country_name" rate_limit_secs=30

If you need geoip processing, refer to the original guide.

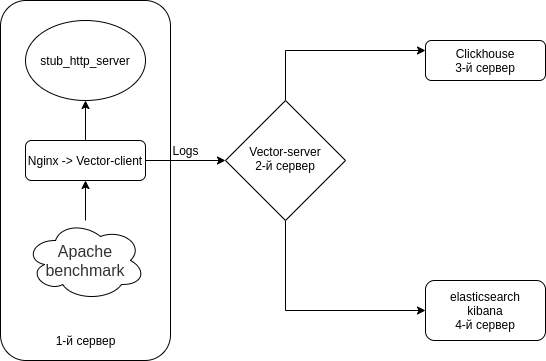

We will set up the chain Nginx (Access logs) → Vector (Client | Filebeat) → Vector (Server | Logstash) → separately into Clickhouse and separately into Elasticsearch. We will install 4 servers, although you can get by with 3.

The scheme looks something like this.

Disable SELinux on all your servers

sed -i 's/^SELINUX=.*/SELINUX=disabled/g' /etc/selinux/config

reboot

Install an HTTP server emulator and utilities on all servers

As the HTTP server emulator we will use nodejs-stub-server.

nodejs-stub-server has no rpm, so I built one for it. The rpm is built using Copr.

Add the antonpatsev/nodejs-stub-server repository

yum -y install yum-plugin-copr epel-release

yes | yum copr enable antonpatsev/nodejs-stub-server

Install nodejs-stub-server, Apache benchmark and the screen terminal multiplexer on all servers

yum -y install stub_http_server screen mc httpd-tools

I adjusted the stub_http_server response time in the file /var/lib/stub_http_server/stub_http_server.js so that more logs are produced.

var max_sleep = 10;

Let's start stub_http_server.

systemctl start stub_http_server

systemctl enable stub_http_server

Installing Clickhouse on server 3

ClickHouse uses the SSE 4.2 instruction set, so unless specified otherwise, support for it in the processor becomes an additional system requirement. Here is the command to check whether the current processor supports SSE 4.2:

grep -q sse4_2 /proc/cpuinfo && echo "SSE 4.2 supported" || echo "SSE 4.2 not supported"

First you need to add the official repository:

sudo yum install -y yum-utils

sudo rpm --import https://repo.clickhouse.tech/CLICKHOUSE-KEY.GPG

sudo yum-config-manager --add-repo https://repo.clickhouse.tech/rpm/stable/x86_64

To install the packages, run the following command:

sudo yum install -y clickhouse-server clickhouse-client

Allow clickhouse-server to listen on the network interface in the file /etc/clickhouse-server/config.xml

<listen_host>0.0.0.0</listen_host>

Change the logging level from trace to debug

<level>debug</level>

Nā hoʻonohonoho kōmike maʻamau:

min_compress_block_size 65536

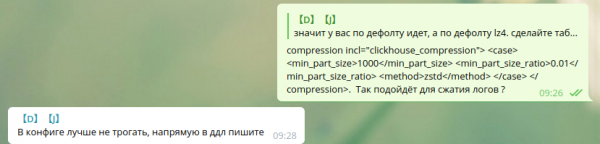

max_compress_block_size 1048576No ka hoʻoulu ʻana i ka Zstd compression, ua ʻōlelo ʻia ʻaʻole e hoʻopā i ka config, akā e hoʻohana i ka DDL.

ʻAʻole hiki iaʻu ke ʻike pehea e hoʻohana ai i ka zstd compression ma DDL ma Google. No laila ua waiho wau e like me ia.

E nā hoa hana e hoʻohana ana i ka zstd compression ma Clickhouse, e ʻoluʻolu e kaʻana like i nā kuhikuhi.

E hoʻomaka i ke kikowaena ma ke ʻano he daemon, holo:

service clickhouse-server startI kēia manawa e neʻe kākou i ka hoʻonohonoho ʻana iā Clickhouse

E hele i Clickhouse

clickhouse-client -h 172.26.10.109 -m172.26.10.109 — IP o ke kikowaena kahi i hoʻokomo ʻia ai ʻo Clickhouse.

E hana kākou i kahi waihona waihona vector

CREATE DATABASE vector;E nānā kākou aia ka waihona.

show databases;E hana i kahi pākaukau vector.logs.

/* Это таблица где хранятся логи как есть */

CREATE TABLE vector.logs

(

`node_name` String,

`timestamp` DateTime,

`server_name` String,

`user_id` String,

`request_full` String,

`request_user_agent` String,

`request_http_host` String,

`request_uri` String,

`request_scheme` String,

`request_method` String,

`request_length` UInt64,

`request_time` Float32,

`request_referrer` String,

`response_status` UInt16,

`response_body_bytes_sent` UInt64,

`response_content_type` String,

`remote_addr` IPv4,

`remote_port` UInt32,

`remote_user` String,

`upstream_addr` IPv4,

`upstream_port` UInt32,

`upstream_bytes_received` UInt64,

`upstream_bytes_sent` UInt64,

`upstream_cache_status` String,

`upstream_connect_time` Float32,

`upstream_header_time` Float32,

`upstream_response_length` UInt64,

`upstream_response_time` Float32,

`upstream_status` UInt16,

`upstream_content_type` String,

INDEX idx_http_host request_http_host TYPE set(0) GRANULARITY 1

)

ENGINE = MergeTree()

PARTITION BY toYYYYMMDD(timestamp)

ORDER BY timestamp

TTL timestamp + toIntervalMonth(1)

SETTINGS index_granularity = 8192;

Check that the table was created. Start clickhouse-client and run a query.

Switch to the vector database.

use vector;

Ok.

0 rows in set. Elapsed: 0.001 sec.

Let's look at the tables.

show tables;

┌─name────────────────┐

│ logs │

└─────────────────────┘

Installing elasticsearch on the 4th server to send the same data to Elasticsearch for comparison with Clickhouse

Add the public rpm key

rpm --import https://artifacts.elastic.co/GPG-KEY-elasticsearch

Let's create 2 repos:

/etc/yum.repos.d/elasticsearch.repo

[elasticsearch]

name=Elasticsearch repository for 7.x packages

baseurl=https://artifacts.elastic.co/packages/7.x/yum

gpgcheck=1

gpgkey=https://artifacts.elastic.co/GPG-KEY-elasticsearch

enabled=0

autorefresh=1

type=rpm-md

/etc/yum.repos.d/kibana.repo

[kibana-7.x]

name=Kibana repository for 7.x packages

baseurl=https://artifacts.elastic.co/packages/7.x/yum

gpgcheck=1

gpgkey=https://artifacts.elastic.co/GPG-KEY-elasticsearch

enabled=1

autorefresh=1

type=rpm-md

Install elasticsearch and kibana

yum install -y kibana elasticsearch

Since it will run as a single instance, add the following to the file /etc/elasticsearch/elasticsearch.yml:

discovery.type: single-node

So that vector can send data to elasticsearch from another server, change network.host.

network.host: 0.0.0.0

To connect to kibana, change the server.host parameter in the file /etc/kibana/kibana.yml

server.host: "0.0.0.0"

Start elasticsearch and enable it at boot

systemctl enable elasticsearch

systemctl start elasticsearch

and kibana

systemctl enable kibana

systemctl start kibana

Configuring Elasticsearch for single-node mode: 1 shard, 0 replicas. You will most likely have a cluster of many servers, in which case you do not need to do this.

For future indexes, update the default template:

curl -X PUT http://localhost:9200/_template/default -H 'Content-Type: application/json' -d '{"index_patterns": ["*"],"order": -1,"settings": {"number_of_shards": "1","number_of_replicas": "0"}}'

Installing Vector as a replacement for Logstash on server 2

yum install -y https://packages.timber.io/vector/0.9.X/vector-x86_64.rpm mc httpd-tools screen

Let's configure Vector as a replacement for Logstash. Edit the file /etc/vector/vector.toml

# /etc/vector/vector.toml

data_dir = "/var/lib/vector"

[sources.nginx_input_vector]

# General

type = "vector"

address = "0.0.0.0:9876"

shutdown_timeout_secs = 30

[transforms.nginx_parse_json]

inputs = [ "nginx_input_vector" ]

type = "json_parser"

[transforms.nginx_parse_add_defaults]

inputs = [ "nginx_parse_json" ]

type = "lua"

version = "2"

hooks.process = """

function (event, emit)

function split_first(s, delimiter)

result = {};

for match in (s..delimiter):gmatch("(.-)"..delimiter) do

table.insert(result, match);

end

return result[1];

end

function split_last(s, delimiter)

result = {};

for match in (s..delimiter):gmatch("(.-)"..delimiter) do

table.insert(result, match);

end

return result[#result];

end

event.log.upstream_addr = split_first(split_last(event.log.upstream_addr, ', '), ':')

event.log.upstream_bytes_received = split_last(event.log.upstream_bytes_received, ', ')

event.log.upstream_bytes_sent = split_last(event.log.upstream_bytes_sent, ', ')

event.log.upstream_connect_time = split_last(event.log.upstream_connect_time, ', ')

event.log.upstream_header_time = split_last(event.log.upstream_header_time, ', ')

event.log.upstream_response_length = split_last(event.log.upstream_response_length, ', ')

event.log.upstream_response_time = split_last(event.log.upstream_response_time, ', ')

event.log.upstream_status = split_last(event.log.upstream_status, ', ')

if event.log.upstream_addr == "" then

event.log.upstream_addr = "127.0.0.1"

end

if (event.log.upstream_bytes_received == "-" or event.log.upstream_bytes_received == "") then

event.log.upstream_bytes_received = "0"

end

if (event.log.upstream_bytes_sent == "-" or event.log.upstream_bytes_sent == "") then

event.log.upstream_bytes_sent = "0"

end

if event.log.upstream_cache_status == "" then

event.log.upstream_cache_status = "DISABLED"

end

if (event.log.upstream_connect_time == "-" or event.log.upstream_connect_time == "") then

event.log.upstream_connect_time = "0"

end

if (event.log.upstream_header_time == "-" or event.log.upstream_header_time == "") then

event.log.upstream_header_time = "0"

end

if (event.log.upstream_response_length == "-" or event.log.upstream_response_length == "") then

event.log.upstream_response_length = "0"

end

if (event.log.upstream_response_time == "-" or event.log.upstream_response_time == "") then

event.log.upstream_response_time = "0"

end

if (event.log.upstream_status == "-" or event.log.upstream_status == "") then

event.log.upstream_status = "0"

end

emit(event)

end

"""

[transforms.nginx_parse_remove_fields]

inputs = [ "nginx_parse_add_defaults" ]

type = "remove_fields"

fields = ["data", "file", "host", "source_type"]

[transforms.nginx_parse_coercer]

type = "coercer"

inputs = ["nginx_parse_remove_fields"]

types.request_length = "int"

types.request_time = "float"

types.response_status = "int"

types.response_body_bytes_sent = "int"

types.remote_port = "int"

types.upstream_bytes_received = "int"

types.upstream_bytes_sent = "int"

types.upstream_connect_time = "float"

types.upstream_header_time = "float"

types.upstream_response_length = "int"

types.upstream_response_time = "float"

types.upstream_status = "int"

types.timestamp = "timestamp"

[sinks.nginx_output_clickhouse]

inputs = ["nginx_parse_coercer"]

type = "clickhouse"

database = "vector"

healthcheck = true

host = "http://172.26.10.109:8123" # Clickhouse address

table = "logs"

encoding.timestamp_format = "unix"

buffer.type = "disk"

buffer.max_size = 104900000

buffer.when_full = "block"

request.in_flight_limit = 20

[sinks.elasticsearch]

type = "elasticsearch"

inputs = ["nginx_parse_coercer"]

compression = "none"

healthcheck = true

# 172.26.10.116 - the server where elasticsearch is installed

host = "http://172.26.10.116:9200"

index = "vector-%Y-%m-%d"

You can adjust the transforms.nginx_parse_add_defaults section.

These configs come from a small CDN, where the upstream_* fields can contain several values.

For example:

"upstream_addr": "128.66.0.10:443, 128.66.0.11:443, 128.66.0.12:443"

"upstream_bytes_received": "-, -, 123"

"upstream_status": "502, 502, 200"

If this is not your situation, this section can be simplified.

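To see what the nginx_parse_add_defaults transform does to multi-valued upstream_* fields, the following Python sketch mirrors the logic of the Lua hook: keep the last value, strip the port from the address, and replace "-" or empty values with defaults. It is an illustration only. `normalize_upstream` is a hypothetical name, the upstream_cache_status default is left out, and unlike the Lua code it tolerates missing fields.

```python
from typing import Dict

# Fields where the last value is kept and "-" or "" becomes "0",
# mirroring the checks in the Lua hook.
NUMERIC_FIELDS = (
    "upstream_bytes_received", "upstream_bytes_sent", "upstream_connect_time",
    "upstream_header_time", "upstream_response_length", "upstream_response_time",
    "upstream_status",
)


def split_last(value: str, delimiter: str) -> str:
    """Return the last element of a delimiter-separated string."""
    return value.split(delimiter)[-1]


def split_first(value: str, delimiter: str) -> str:
    """Return the first element of a delimiter-separated string."""
    return value.split(delimiter)[0]


def normalize_upstream(event: Dict[str, str]) -> Dict[str, str]:
    """Keep only the last upstream attempt and fill in defaults."""
    out = dict(event)
    addr = split_first(split_last(out.get("upstream_addr", ""), ", "), ":")
    out["upstream_addr"] = addr or "127.0.0.1"
    for field in NUMERIC_FIELDS:
        if field in out:
            last = split_last(out[field], ", ")
            out[field] = "0" if last in ("-", "") else last
    return out


# The example values from the text above.
sample = {
    "upstream_addr": "128.66.0.10:443, 128.66.0.11:443, 128.66.0.12:443",
    "upstream_bytes_received": "-, -, 123",
    "upstream_status": "502, 502, 200",
}
normalized = normalize_upstream(sample)
print(normalized)
```

If your nginx never retries against several upstreams, the splitting step is unnecessary and only the default-filling part is needed.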
Let's create the service settings for systemd in /etc/systemd/system/vector.service

# /etc/systemd/system/vector.service

[Unit]

Description=Vector

After=network-online.target

Requires=network-online.target

[Service]

User=vector

Group=vector

ExecStart=/usr/bin/vector

ExecReload=/bin/kill -HUP $MAINPID

Restart=no

StandardOutput=syslog

StandardError=syslog

SyslogIdentifier=vector

[Install]

WantedBy=multi-user.target

After creating the tables, you can start Vector

systemctl enable vector

systemctl start vector

Vector logs can be viewed like this:

journalctl -f -u vector

The logs should contain entries like these

INFO vector::topology::builder: Healthcheck: Passed.

INFO vector::topology::builder: Healthcheck: Passed.

On the client (Web server) - 1st server

On the server with nginx you need to disable ipv6, because the logs table in clickhouse uses the IPv4 type for the upstream_addr field, since I do not use ipv6 inside the network. If ipv6 is not disabled, there will be errors:

DB::Exception: Invalid IPv4 value.: (while read the value of key upstream_addr)

Readers, perhaps you could add ipv6 support.
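The error comes from a value that is not a valid IPv4 address being written into a column of type IPv4. A minimal Python sketch of the same kind of check, using the standard ipaddress module, is shown here; `fits_ipv4_column` is a hypothetical helper written for illustration, not part of Clickhouse or Vector.

```python
import ipaddress


def fits_ipv4_column(value: str) -> bool:
    """Return True if the value parses as a dotted-quad IPv4 address."""
    try:
        ipaddress.IPv4Address(value)
        return True
    except ValueError:  # AddressValueError is a subclass of ValueError
        return False


for candidate in ("172.26.10.106", "::1", ""):
    print(repr(candidate), fits_ipv4_column(candidate))
```

A check like this could be used to spot-check log values before they are sent on, for example to find out which upstream addresses would be rejected by the table.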

Create the file /etc/sysctl.d/98-disable-ipv6.conf

net.ipv6.conf.all.disable_ipv6 = 1

net.ipv6.conf.default.disable_ipv6 = 1

net.ipv6.conf.lo.disable_ipv6 = 1

Apply the settings

sysctl --system

Let's install nginx.

Add the nginx repository file /etc/yum.repos.d/nginx.repo

[nginx-stable]

name=nginx stable repo

baseurl=http://nginx.org/packages/centos/$releasever/$basearch/

gpgcheck=1

enabled=1

gpgkey=https://nginx.org/keys/nginx_signing.key

module_hotfixes=true

Install the nginx package

yum install -y nginx

First, we need to configure the log format in Nginx in the file /etc/nginx/nginx.conf

user nginx;

# you must set worker processes based on your CPU cores, nginx does not benefit from setting more than that

worker_processes auto; #some last versions calculate it automatically

# number of file descriptors used for nginx

# the limit for the maximum FDs on the server is usually set by the OS.

# if you don't set FD's then OS settings will be used which is by default 2000

worker_rlimit_nofile 100000;

error_log /var/log/nginx/error.log warn;

pid /var/run/nginx.pid;

# provides the configuration file context in which the directives that affect connection processing are specified.

events {

# determines how much clients will be served per worker

# max clients = worker_connections * worker_processes

# max clients is also limited by the number of socket connections available on the system (~64k)

worker_connections 4000;

# optimized to serve many clients with each thread, essential for linux -- for testing environment

use epoll;

# accept as many connections as possible, may flood worker connections if set too low -- for testing environment

multi_accept on;

}

http {

include /etc/nginx/mime.types;

default_type application/octet-stream;

log_format main '$remote_addr - $remote_user [$time_local] "$request" '

'$status $body_bytes_sent "$http_referer" '

'"$http_user_agent" "$http_x_forwarded_for"';

log_format vector escape=json

'{'

'"node_name":"nginx-vector",'

'"timestamp":"$time_iso8601",'

'"server_name":"$server_name",'

'"request_full": "$request",'

'"request_user_agent":"$http_user_agent",'

'"request_http_host":"$http_host",'

'"request_uri":"$request_uri",'

'"request_scheme": "$scheme",'

'"request_method":"$request_method",'

'"request_length":"$request_length",'

'"request_time": "$request_time",'

'"request_referrer":"$http_referer",'

'"response_status": "$status",'

'"response_body_bytes_sent":"$body_bytes_sent",'

'"response_content_type":"$sent_http_content_type",'

'"remote_addr": "$remote_addr",'

'"remote_port": "$remote_port",'

'"remote_user": "$remote_user",'

'"upstream_addr": "$upstream_addr",'

'"upstream_bytes_received": "$upstream_bytes_received",'

'"upstream_bytes_sent": "$upstream_bytes_sent",'

'"upstream_cache_status":"$upstream_cache_status",'

'"upstream_connect_time":"$upstream_connect_time",'

'"upstream_header_time":"$upstream_header_time",'

'"upstream_response_length":"$upstream_response_length",'

'"upstream_response_time":"$upstream_response_time",'

'"upstream_status": "$upstream_status",'

'"upstream_content_type":"$upstream_http_content_type"'

'}';

access_log /var/log/nginx/access.log main;

access_log /var/log/nginx/access.json.log vector; # New log in json format

sendfile on;

#tcp_nopush on;

keepalive_timeout 65;

#gzip on;

include /etc/nginx/conf.d/*.conf;

}

To avoid breaking your current configuration, Nginx allows you to have several access_log directives

access_log /var/log/nginx/access.log main; # Standard log

access_log /var/log/nginx/access.json.log vector; # New log in json format

Don't forget to add a logrotate rule for the new logs (if the log file name does not end in .log)
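The vector log format writes one JSON object per line, and every value in it is a string. The Python sketch below shows why the json_parser and coercer transforms on the log server are needed: it parses a line and casts a few fields to the types used by the Clickhouse table. The sample line is a shortened, hypothetical one (the +00:00 offset in particular is an assumption), and `parse_and_coerce` is an illustrative name, not Vector code.

```python
import json
from datetime import datetime
from typing import Any, Dict

# Shortened hypothetical line from /var/log/nginx/access.json.log
line = ('{"node_name":"nginx-vector","timestamp":"2020-08-07T04:32:42+00:00",'
        '"request_length":"66","request_time": "0.028","response_status": "404"}')

# Subset of the casts configured in the coercer transform.
CASTS = {"request_length": int, "request_time": float, "response_status": int}


def parse_and_coerce(raw: str) -> Dict[str, Any]:
    """Parse one JSON log line and convert string fields to numbers."""
    event = json.loads(raw)
    for field, cast in CASTS.items():
        event[field] = cast(event[field])
    # ISO 8601 timestamp to unix seconds, similar in spirit to
    # encoding.timestamp_format = "unix" on the Clickhouse sink.
    event["timestamp"] = int(datetime.fromisoformat(event["timestamp"]).timestamp())
    return event


event = parse_and_coerce(line)
print(event)
```

Without the casts, a column such as request_length UInt64 would receive the string "66" instead of a number.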

Remove default.conf from /etc/nginx/conf.d/

rm -f /etc/nginx/conf.d/default.conf

Add the virtual host /etc/nginx/conf.d/vhost1.conf

server {

listen 80;

server_name vhost1;

location / {

proxy_pass http://172.26.10.106:8080;

}

}

Add the virtual host /etc/nginx/conf.d/vhost2.conf

server {

listen 80;

server_name vhost2;

location / {

proxy_pass http://172.26.10.108:8080;

}

}

Add the virtual host /etc/nginx/conf.d/vhost3.conf

server {

listen 80;

server_name vhost3;

location / {

proxy_pass http://172.26.10.109:8080;

}

}

Add the virtual host /etc/nginx/conf.d/vhost4.conf

server {

listen 80;

server_name vhost4;

location / {

proxy_pass http://172.26.10.116:8080;

}

}

Add the virtual hosts (172.26.10.106 is the ip of the server where nginx is installed) to the /etc/hosts file on all servers:

172.26.10.106 vhost1

172.26.10.106 vhost2

172.26.10.106 vhost3

172.26.10.106 vhost4

And if everything is ready

nginx -t

systemctl restart nginx

Now let's install Vector itself

yum install -y https://packages.timber.io/vector/0.9.X/vector-x86_64.rpm

Create the settings file for systemd /etc/systemd/system/vector.service

[Unit]

Description=Vector

After=network-online.target

Requires=network-online.target

[Service]

User=vector

Group=vector

ExecStart=/usr/bin/vector

ExecReload=/bin/kill -HUP $MAINPID

Restart=no

StandardOutput=syslog

StandardError=syslog

SyslogIdentifier=vector

[Install]

WantedBy=multi-user.target

And configure the Filebeat replacement in the /etc/vector/vector.toml config. The IP address 172.26.10.108 is the IP address of the log server (Vector-Server)

data_dir = "/var/lib/vector"

[sources.nginx_file]

type = "file"

include = [ "/var/log/nginx/access.json.log" ]

start_at_beginning = false

fingerprinting.strategy = "device_and_inode"

[sinks.nginx_output_vector]

type = "vector"

inputs = [ "nginx_file" ]

address = "172.26.10.108:9876"

Don't forget to add the vector user to the appropriate group so that it can read the log files. For example, nginx on centos creates logs with permissions for the adm group.

usermod -a -G adm vector

Let's start the vector service

systemctl enable vector

systemctl start vector

Vector logs can be viewed like this:

journalctl -f -u vector

The logs should contain an entry like this

INFO vector::topology::builder: Healthcheck: Passed.

Testing

We test using Apache benchmark.

The httpd-tools package was installed on all servers

We start testing with Apache benchmark from 4 different servers inside screen. First we start the screen terminal multiplexer, and then we start testing with Apache benchmark.

From server 1

while true; do ab -H "User-Agent: 1server" -c 100 -n 10 -t 10 http://vhost1/; sleep 1; done

From server 2

while true; do ab -H "User-Agent: 2server" -c 100 -n 10 -t 10 http://vhost2/; sleep 1; done

From server 3

while true; do ab -H "User-Agent: 3server" -c 100 -n 10 -t 10 http://vhost3/; sleep 1; done

From server 4

while true; do ab -H "User-Agent: 4server" -c 100 -n 10 -t 10 http://vhost4/; sleep 1; done

Let's check the data in Clickhouse

Connect to Clickhouse

clickhouse-client -h 172.26.10.109 -m

Run an SQL query

SELECT * FROM vector.logs;

┌─node_name────┬───────────timestamp─┬─server_name─┬─user_id─┬─request_full───┬─request_user_agent─┬─request_http_host─┬─request_uri─┬─request_scheme─┬─request_method─┬─request_length─┬─request_time─┬─request_referrer─┬─response_status─┬─response_body_bytes_sent─┬─response_content_type─┬───remote_addr─┬─remote_port─┬─remote_user─┬─upstream_addr─┬─upstream_port─┬─upstream_bytes_received─┬─upstream_bytes_sent─┬─upstream_cache_status─┬─upstream_connect_time─┬─upstream_header_time─┬─upstream_response_length─┬─upstream_response_time─┬─upstream_status─┬─upstream_content_type─┐

│ nginx-vector │ 2020-08-07 04:32:42 │ vhost1 │ │ GET / HTTP/1.0 │ 1server │ vhost1 │ / │ http │ GET │ 66 │ 0.028 │ │ 404 │ 27 │ │ 172.26.10.106 │ 45886 │ │ 172.26.10.106 │ 0 │ 109 │ 97 │ DISABLED │ 0 │ 0.025 │ 27 │ 0.029 │ 404 │ │

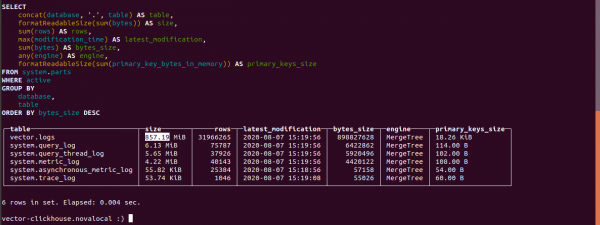

└──────────────┴─────────────────────┴─────────────┴─────────┴────────────────┴────────────────────┴───────────────────┴─────────────┴────────────────┴────────────────┴────────────────┴──────────────┴──────────────────┴─────────────────┴──────────────────────────┴───────────────────────┴───────────────┴─────────────┴─────────────┴───────────────┴───────────────┴─────────────────────────┴─────────────────────┴───────────────────────┴───────────────────────┴──────────────────────┴──────────────────────────┴────────────────────────┴─────────────────┴───────────────────────E ʻike i ka nui o nā papa ma Clickhouse

select concat(database, '.', table) as table,

formatReadableSize(sum(bytes)) as size,

sum(rows) as rows,

max(modification_time) as latest_modification,

sum(bytes) as bytes_size,

any(engine) as engine,

formatReadableSize(sum(primary_key_bytes_in_memory)) as primary_keys_size

from system.parts

where active

group by database, table

order by bytes_size desc;E ʻike kākou i ka nui o nā lāʻau i lawe ʻia ma Clickhouse.

ʻO 857.19 MB ka nui o ka papa lāʻau.

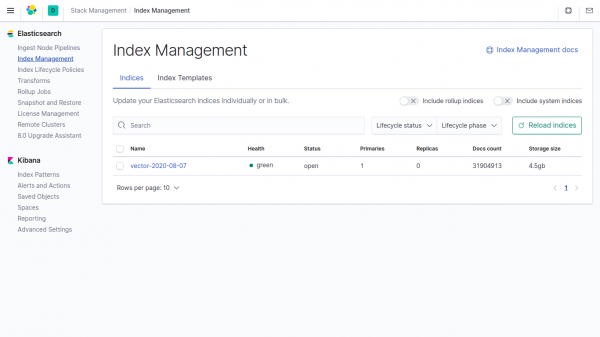

ʻO ka nui o ka ʻikepili like ma ka index ma Elasticsearch he 4,5GB.

Inā ʻaʻole ʻoe e kuhikuhi i ka ʻikepili i ka vector i nā ʻāpana, lawe ʻo Clickhouse i 4500/857.19 = 5.24 mau manawa ma lalo o Elasticsearch.

I ka vector, hoʻohana ʻia ke kahua paʻa.
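The comparison is a simple division of the two sizes. As a quick check, here is the same arithmetic in Python, taking 4.5 GB as 4500 MB as the text does; the variable names are only for illustration.

```python
clickhouse_mb = 857.19      # size of vector.logs reported by system.parts
elasticsearch_mb = 4500.0   # 4.5 GB taken as 4500 MB, as in the text

ratio = elasticsearch_mb / clickhouse_mb
print(f"Clickhouse uses {ratio:.2f} times less space than Elasticsearch")
```

The figure depends on the data and on the settings used on both sides, so treat it as a result for this particular test rather than a general rule.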


Source: www.habr.com