Vector is a tool designed to collect, transform, and send log data, metrics, and events.


It is written in Rust and offers high performance and low RAM consumption compared with its analogues. A lot of attention is also paid to correctness-related features, in particular saving unsent events to an on-disk buffer and handling file rotation.

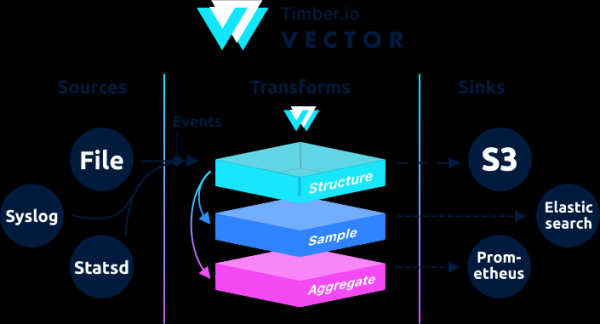

Architecturally, Vector is an event router that receives messages from one or more sources, optionally applies transforms to them, and sends them to one or more sinks.

Vector can replace both filebeat and logstash, since it works in both roles (receiving and sending logs).

If in Logstash the chain is built as input → filter → output, then in Vector it is sources → transforms → sinks.

Examples can be found in the documentation.

This guide is a revised version of an earlier guide. The original guide includes geoip processing. When geoip was tested from an internal network, Vector reported an error.

Aug 05 06:25:31.889 DEBUG transform{name=nginx_parse_rename_fields type=rename_fields}: vector::transforms::rename_fields: Field did not exist field="geoip.country_name" rate_limit_secs=30

If you need geoip processing, see the original guide.

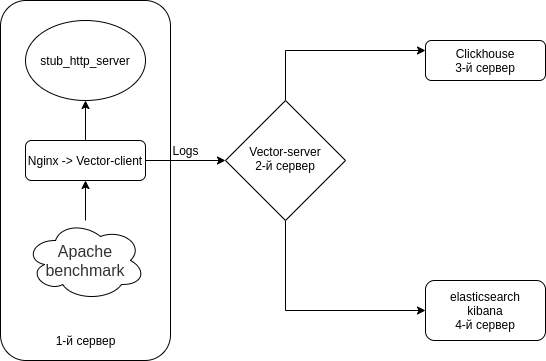

We will configure the chain Nginx (access logs) → Vector (Client | Filebeat) → Vector (Server | Logstash) → separately into Clickhouse and separately into Elasticsearch. We will set up 4 servers, although you can get by with 3.

The setup looks roughly as shown in the diagram.

Disable SELinux on all your servers

sed -i 's/^SELINUX=.*/SELINUX=disabled/g' /etc/selinux/config

reboot

Install an HTTP server emulator plus utilities on every server

We will use nodejs-stub-server as the HTTP server emulator.

nodejs-stub-server does not ship as an rpm, so an rpm was built for it using Copr.

Add the antonpatsev/nodejs-stub-server repository

yum -y install yum-plugin-copr epel-release

yes | yum copr enable antonpatsev/nodejs-stub-server

Install nodejs-stub-server, Apache benchmark, and the screen terminal multiplexer on all servers

yum -y install stub_http_server screen mc httpd-tools

I adjusted the stub_http_server response time in /var/lib/stub_http_server/stub_http_server.js so that there would be more logs.

var max_sleep = 10;

Start stub_http_server.

systemctl start stub_http_server

systemctl enable stub_http_server

Installing Clickhouse on server 3

ClickHouse uses the SSE 4.2 instruction set, so unless stated otherwise, support for it in the CPU is an additional system requirement. The following command checks whether the current processor supports SSE 4.2:

grep -q sse4_2 /proc/cpuinfo && echo "SSE 4.2 supported" || echo "SSE 4.2 not supported"

First, add the official repository:

sudo yum install -y yum-utils

sudo rpm --import https://repo.clickhouse.tech/CLICKHOUSE-KEY.GPG

sudo yum-config-manager --add-repo https://repo.clickhouse.tech/rpm/stable/x86_64

To install the packages, run the following command:

sudo yum install -y clickhouse-server clickhouse-client

Allow clickhouse-server to listen on the network interface in /etc/clickhouse-server/config.xml

<listen_host>0.0.0.0</listen_host>

Change the logging level from trace to debug

<level>debug</level>

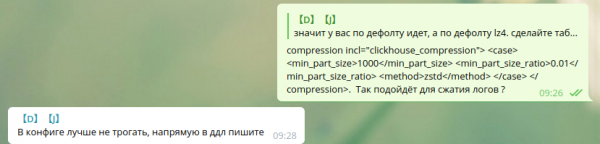

Standard compression settings:

min_compress_block_size 65536

max_compress_block_size 1048576

To enable Zstd compression, the advice was not to touch the config and to use DDL instead.

I could not find through Google how to enable zstd compression via DDL, so I left it as is.

Colleagues who use zstd compression in Clickhouse, please share instructions.

To start the server as a daemon, run:

service clickhouse-server start

Now let's move on to configuring Clickhouse.

Connect to Clickhouse

clickhouse-client -h 172.26.10.109 -m

172.26.10.109 is the IP address of the server where Clickhouse is installed.

Create the vector database

CREATE DATABASE vector;

Check that the database exists.

show databases;

Create the vector.logs table.

/* This table stores the logs as is */

CREATE TABLE vector.logs

(

`node_name` String,

`timestamp` DateTime,

`server_name` String,

`user_id` String,

`request_full` String,

`request_user_agent` String,

`request_http_host` String,

`request_uri` String,

`request_scheme` String,

`request_method` String,

`request_length` UInt64,

`request_time` Float32,

`request_referrer` String,

`response_status` UInt16,

`response_body_bytes_sent` UInt64,

`response_content_type` String,

`remote_addr` IPv4,

`remote_port` UInt32,

`remote_user` String,

`upstream_addr` IPv4,

`upstream_port` UInt32,

`upstream_bytes_received` UInt64,

`upstream_bytes_sent` UInt64,

`upstream_cache_status` String,

`upstream_connect_time` Float32,

`upstream_header_time` Float32,

`upstream_response_length` UInt64,

`upstream_response_time` Float32,

`upstream_status` UInt16,

`upstream_content_type` String,

INDEX idx_http_host request_http_host TYPE set(0) GRANULARITY 1

)

ENGINE = MergeTree()

PARTITION BY toYYYYMMDD(timestamp)

ORDER BY timestamp

TTL timestamp + toIntervalMonth(1)

SETTINGS index_granularity = 8192;

Check that the table was created. Start clickhouse-client and run the queries.

Switch to the vector database.

use vector;

Ok.

0 rows in set. Elapsed: 0.001 sec.

List the tables.

show tables;

┌─name────────────────┐

│ logs │

└─────────────────────┘

Install Elasticsearch on server 4 so that the same data can be sent to Elasticsearch for comparison with Clickhouse

Add the public rpm key

rpm --import https://artifacts.elastic.co/GPG-KEY-elasticsearch

Create 2 repos:

/etc/yum.repos.d/elasticsearch.repo

[elasticsearch]

name=Elasticsearch repository for 7.x packages

baseurl=https://artifacts.elastic.co/packages/7.x/yum

gpgcheck=1

gpgkey=https://artifacts.elastic.co/GPG-KEY-elasticsearch

enabled=0

autorefresh=1

type=rpm-md

/etc/yum.repos.d/kibana.repo

[kibana-7.x]

name=Kibana repository for 7.x packages

baseurl=https://artifacts.elastic.co/packages/7.x/yum

gpgcheck=1

gpgkey=https://artifacts.elastic.co/GPG-KEY-elasticsearch

enabled=1

autorefresh=1

type=rpm-md

Install elasticsearch and kibana

yum install -y kibana elasticsearch

Since it will run as a single instance, add the following to /etc/elasticsearch/elasticsearch.yml:

discovery.type: single-node

So that Vector can send data to Elasticsearch from another server, change network.host.

network.host: 0.0.0.0

To connect to Kibana, change the server.host parameter in /etc/kibana/kibana.yml

server.host: "0.0.0.0"

Start elasticsearch and enable it at boot

systemctl enable elasticsearch

systemctl start elasticsearch

and kibana

systemctl enable kibana

systemctl start kibana

Configure Elasticsearch for single-node mode: 1 shard, 0 replicas. You will most likely have a cluster with many servers, in which case you do not need to do this.

For future indexes, update the default template:

curl -X PUT http://localhost:9200/_template/default -H 'Content-Type: application/json' -d '{"index_patterns": ["*"],"order": -1,"settings": {"number_of_shards": "1","number_of_replicas": "0"}}'

Install Vector as the Logstash replacement on server 2

yum install -y https://packages.timber.io/vector/0.9.X/vector-x86_64.rpm mc httpd-tools screen

Configure Vector as the Logstash replacement. Edit /etc/vector/vector.toml

# /etc/vector/vector.toml

data_dir = "/var/lib/vector"

[sources.nginx_input_vector]

# General

type = "vector"

address = "0.0.0.0:9876"

shutdown_timeout_secs = 30

[transforms.nginx_parse_json]

inputs = [ "nginx_input_vector" ]

type = "json_parser"

[transforms.nginx_parse_add_defaults]

inputs = [ "nginx_parse_json" ]

type = "lua"

version = "2"

hooks.process = """

function (event, emit)

function split_first(s, delimiter)

result = {};

for match in (s..delimiter):gmatch("(.-)"..delimiter) do

table.insert(result, match);

end

return result[1];

end

function split_last(s, delimiter)

result = {};

for match in (s..delimiter):gmatch("(.-)"..delimiter) do

table.insert(result, match);

end

return result[#result];

end

event.log.upstream_addr = split_first(split_last(event.log.upstream_addr, ', '), ':')

event.log.upstream_bytes_received = split_last(event.log.upstream_bytes_received, ', ')

event.log.upstream_bytes_sent = split_last(event.log.upstream_bytes_sent, ', ')

event.log.upstream_connect_time = split_last(event.log.upstream_connect_time, ', ')

event.log.upstream_header_time = split_last(event.log.upstream_header_time, ', ')

event.log.upstream_response_length = split_last(event.log.upstream_response_length, ', ')

event.log.upstream_response_time = split_last(event.log.upstream_response_time, ', ')

event.log.upstream_status = split_last(event.log.upstream_status, ', ')

if event.log.upstream_addr == "" then

event.log.upstream_addr = "127.0.0.1"

end

if (event.log.upstream_bytes_received == "-" or event.log.upstream_bytes_received == "") then

event.log.upstream_bytes_received = "0"

end

if (event.log.upstream_bytes_sent == "-" or event.log.upstream_bytes_sent == "") then

event.log.upstream_bytes_sent = "0"

end

if event.log.upstream_cache_status == "" then

event.log.upstream_cache_status = "DISABLED"

end

if (event.log.upstream_connect_time == "-" or event.log.upstream_connect_time == "") then

event.log.upstream_connect_time = "0"

end

if (event.log.upstream_header_time == "-" or event.log.upstream_header_time == "") then

event.log.upstream_header_time = "0"

end

if (event.log.upstream_response_length == "-" or event.log.upstream_response_length == "") then

event.log.upstream_response_length = "0"

end

if (event.log.upstream_response_time == "-" or event.log.upstream_response_time == "") then

event.log.upstream_response_time = "0"

end

if (event.log.upstream_status == "-" or event.log.upstream_status == "") then

event.log.upstream_status = "0"

end

emit(event)

end

"""

[transforms.nginx_parse_remove_fields]

inputs = [ "nginx_parse_add_defaults" ]

type = "remove_fields"

fields = ["data", "file", "host", "source_type"]

[transforms.nginx_parse_coercer]

type = "coercer"

inputs = ["nginx_parse_remove_fields"]

types.request_length = "int"

types.request_time = "float"

types.response_status = "int"

types.response_body_bytes_sent = "int"

types.remote_port = "int"

types.upstream_bytes_received = "int"

types.upstream_bytes_sent = "int"

types.upstream_connect_time = "float"

types.upstream_header_time = "float"

types.upstream_response_length = "int"

types.upstream_response_time = "float"

types.upstream_status = "int"

types.timestamp = "timestamp"

[sinks.nginx_output_clickhouse]

inputs = ["nginx_parse_coercer"]

type = "clickhouse"

database = "vector"

healthcheck = true

host = "http://172.26.10.109:8123" # Clickhouse address

table = "logs"

encoding.timestamp_format = "unix"

buffer.type = "disk"

buffer.max_size = 104900000

buffer.when_full = "block"

request.in_flight_limit = 20

[sinks.elasticsearch]

type = "elasticsearch"

inputs = ["nginx_parse_coercer"]

compression = "none"

healthcheck = true

# 172.26.10.116 is the server where elasticsearch is installed

host = "http://172.26.10.116:9200"

index = "vector-%Y-%m-%d"

You can adjust the transforms.nginx_parse_add_defaults section.

The original author uses these configs for a small CDN, where the upstream_* fields can contain several values.

For example:

"upstream_addr": "128.66.0.10:443, 128.66.0.11:443, 128.66.0.12:443"

"upstream_bytes_received": "-, -, 123"

"upstream_status": "502, 502, 200"

If that is not your case, this section can be simplified.

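To make the effect of the Lua helpers more concrete, here is a minimal shell sketch of the same idea: keep only the last comma-separated value, then strip the port. The function names mirror the Lua ones, but they are reimplemented here with POSIX parameter expansion purely for illustration and are not part of Vector.

```shell
#!/bin/sh
# Illustrative re-implementation of the Lua helpers (not Vector code).

# Print the part of $1 that follows the last occurrence of delimiter $2.
split_last() {
    last=${1##*"$2"}
    printf '%s\n' "$last"
}

# Print the part of $1 that precedes the first occurrence of delimiter $2.
split_first() {
    first=${1%%"$2"*}
    printf '%s\n' "$first"
}

upstream_addr='128.66.0.10:443, 128.66.0.11:443, 128.66.0.12:443'
upstream_status='502, 502, 200'

# Last upstream that was tried, without its port.
split_first "$(split_last "$upstream_addr" ', ')" ':'
# Status returned by that last upstream.
split_last "$upstream_status" ', '
```

With a single upstream there is no delimiter to strip, so both helpers return the value unchanged, which is why the section can be simplified in that case.
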
Create the systemd service settings in /etc/systemd/system/vector.service

# /etc/systemd/system/vector.service

[Unit]

Description=Vector

After=network-online.target

Requires=network-online.target

[Service]

User=vector

Group=vector

ExecStart=/usr/bin/vector

ExecReload=/bin/kill -HUP $MAINPID

Restart=no

StandardOutput=syslog

StandardError=syslog

SyslogIdentifier=vector

[Install]

WantedBy=multi-user.target

Once the tables are created, you can start Vector

systemctl enable vector

systemctl start vector

Vector logs can be viewed like this:

journalctl -f -u vector

The logs should contain entries like these

INFO vector::topology::builder: Healthcheck: Passed.

INFO vector::topology::builder: Healthcheck: Passed.

On the client (web server): server 1

On the server with nginx, IPv6 must be disabled, because the logs table in Clickhouse uses the IPv4 type for the upstream_addr field (I do not use IPv6 inside the network). If IPv6 is not disabled, errors like this occur:

DB::Exception: Invalid IPv4 value.: (while read the value of key upstream_addr)

Perhaps readers will add IPv6 support.
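To see which values would trigger that error, a loose shape check can be run over candidate addresses. This is only an illustrative sketch: the helper name `looks_like_ipv4` is hypothetical, and the pattern does not verify that each octet is at most 255. It merely shows why a value such as `::1` cannot go into an IPv4 column.

```shell
#!/bin/sh
# Hypothetical helper: succeeds when $1 has the dotted-quad shape of an IPv4 address.
looks_like_ipv4() {
    printf '%s\n' "$1" | grep -Eq '^([0-9]{1,3}\.){3}[0-9]{1,3}$'
}

for addr in 172.26.10.106 ::1 fe80::1; do
    if looks_like_ipv4 "$addr"; then
        echo "$addr: fits the IPv4 shape"
    else
        echo "$addr: not IPv4, unsuitable for an IPv4 column"
    fi
done
```
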

Create the file /etc/sysctl.d/98-disable-ipv6.conf

net.ipv6.conf.all.disable_ipv6 = 1

net.ipv6.conf.default.disable_ipv6 = 1

net.ipv6.conf.lo.disable_ipv6 = 1

Apply the settings

sysctl --system

Install nginx.

Add the nginx repository file /etc/yum.repos.d/nginx.repo

[nginx-stable]

name=nginx stable repo

baseurl=http://nginx.org/packages/centos/$releasever/$basearch/

gpgcheck=1

enabled=1

gpgkey=https://nginx.org/keys/nginx_signing.key

module_hotfixes=true

Install the nginx package

yum install -y nginx

First, configure the log format in Nginx in /etc/nginx/nginx.conf

user nginx;

# you must set worker processes based on your CPU cores, nginx does not benefit from setting more than that

worker_processes auto; #some last versions calculate it automatically

# number of file descriptors used for nginx

# the limit for the maximum FDs on the server is usually set by the OS.

# if you don't set FD's then OS settings will be used which is by default 2000

worker_rlimit_nofile 100000;

error_log /var/log/nginx/error.log warn;

pid /var/run/nginx.pid;

# provides the configuration file context in which the directives that affect connection processing are specified.

events {

# determines how much clients will be served per worker

# max clients = worker_connections * worker_processes

# max clients is also limited by the number of socket connections available on the system (~64k)

worker_connections 4000;

# optimized to serve many clients with each thread, essential for linux -- for testing environment

use epoll;

# accept as many connections as possible, may flood worker connections if set too low -- for testing environment

multi_accept on;

}

http {

include /etc/nginx/mime.types;

default_type application/octet-stream;

log_format main '$remote_addr - $remote_user [$time_local] "$request" '

'$status $body_bytes_sent "$http_referer" '

'"$http_user_agent" "$http_x_forwarded_for"';

log_format vector escape=json

'{'

'"node_name":"nginx-vector",'

'"timestamp":"$time_iso8601",'

'"server_name":"$server_name",'

'"request_full": "$request",'

'"request_user_agent":"$http_user_agent",'

'"request_http_host":"$http_host",'

'"request_uri":"$request_uri",'

'"request_scheme": "$scheme",'

'"request_method":"$request_method",'

'"request_length":"$request_length",'

'"request_time": "$request_time",'

'"request_referrer":"$http_referer",'

'"response_status": "$status",'

'"response_body_bytes_sent":"$body_bytes_sent",'

'"response_content_type":"$sent_http_content_type",'

'"remote_addr": "$remote_addr",'

'"remote_port": "$remote_port",'

'"remote_user": "$remote_user",'

'"upstream_addr": "$upstream_addr",'

'"upstream_bytes_received": "$upstream_bytes_received",'

'"upstream_bytes_sent": "$upstream_bytes_sent",'

'"upstream_cache_status":"$upstream_cache_status",'

'"upstream_connect_time":"$upstream_connect_time",'

'"upstream_header_time":"$upstream_header_time",'

'"upstream_response_length":"$upstream_response_length",'

'"upstream_response_time":"$upstream_response_time",'

'"upstream_status": "$upstream_status",'

'"upstream_content_type":"$upstream_http_content_type"'

'}';

access_log /var/log/nginx/access.log main;

access_log /var/log/nginx/access.json.log vector; # New log in JSON format

sendfile on;

#tcp_nopush on;

keepalive_timeout 65;

#gzip on;

include /etc/nginx/conf.d/*.conf;

}

So as not to break the current configuration, Nginx allows several access_log directives

access_log /var/log/nginx/access.log main; # Standard log

access_log /var/log/nginx/access.json.log vector; # New log in JSON format

Do not forget to add a logrotate rule for the new logs (if the log file name does not end with .log)

Remove default.conf from /etc/nginx/conf.d/

rm -f /etc/nginx/conf.d/default.conf

Add the virtual host /etc/nginx/conf.d/vhost1.conf

server {

listen 80;

server_name vhost1;

location / {

proxy_pass http://172.26.10.106:8080;

}

}

Add the virtual host /etc/nginx/conf.d/vhost2.conf

server {

listen 80;

server_name vhost2;

location / {

proxy_pass http://172.26.10.108:8080;

}

}

Add the virtual host /etc/nginx/conf.d/vhost3.conf

server {

listen 80;

server_name vhost3;

location / {

proxy_pass http://172.26.10.109:8080;

}

}

Add the virtual host /etc/nginx/conf.d/vhost4.conf

server {

listen 80;

server_name vhost4;

location / {

proxy_pass http://172.26.10.116:8080;

}

}

Add the virtual hosts to /etc/hosts on all servers (172.26.10.106 is the IP of the server where nginx is installed):

172.26.10.106 vhost1

172.26.10.106 vhost2

172.26.10.106 vhost3

172.26.10.106 vhost4

Once everything is ready, run

nginx -t

systemctl restart nginx

Now install Vector itself

yum install -y https://packages.timber.io/vector/0.9.X/vector-x86_64.rpm

Create a settings file for systemd at /etc/systemd/system/vector.service

[Unit]

Description=Vector

After=network-online.target

Requires=network-online.target

[Service]

User=vector

Group=vector

ExecStart=/usr/bin/vector

ExecReload=/bin/kill -HUP $MAINPID

Restart=no

StandardOutput=syslog

StandardError=syslog

SyslogIdentifier=vector

[Install]

WantedBy=multi-user.target

Then configure the Filebeat replacement in /etc/vector/vector.toml. The IP address 172.26.10.108 is the IP of the log server (Vector-Server)

data_dir = "/var/lib/vector"

[sources.nginx_file]

type = "file"

include = [ "/var/log/nginx/access.json.log" ]

start_at_beginning = false

fingerprinting.strategy = "device_and_inode"

[sinks.nginx_output_vector]

type = "vector"

inputs = [ "nginx_file" ]

address = "172.26.10.108:9876"

Do not forget to add the vector user to the appropriate group so that it can read the log files. For example, nginx on CentOS creates logs with adm group permissions.

usermod -a -G adm vector

Start the Vector service

systemctl enable vector

systemctl start vector

Vector logs can be viewed like this:

journalctl -f -u vector

The logs should contain an entry like this

INFO vector::topology::builder: Healthcheck: Passed.

Stress testing

Testing is done with Apache benchmark.

The httpd-tools package was installed on all servers

We run Apache benchmark from 4 different servers inside screen. First start the screen terminal multiplexer, then start the test with Apache benchmark. See the screen manual for how to work with it.

From server 1

while true; do ab -H "User-Agent: 1server" -c 100 -n 10 -t 10 http://vhost1/; sleep 1; done

From server 2

while true; do ab -H "User-Agent: 2server" -c 100 -n 10 -t 10 http://vhost2/; sleep 1; done

From server 3

while true; do ab -H "User-Agent: 3server" -c 100 -n 10 -t 10 http://vhost3/; sleep 1; done

From server 4

while true; do ab -H "User-Agent: 4server" -c 100 -n 10 -t 10 http://vhost4/; sleep 1; done

Check the data in Clickhouse

Connect to Clickhouse

clickhouse-client -h 172.26.10.109 -m

Run an SQL query

SELECT * FROM vector.logs;

┌─node_name────┬───────────timestamp─┬─server_name─┬─user_id─┬─request_full───┬─request_user_agent─┬─request_http_host─┬─request_uri─┬─request_scheme─┬─request_method─┬─request_length─┬─request_time─┬─request_referrer─┬─response_status─┬─response_body_bytes_sent─┬─response_content_type─┬───remote_addr─┬─remote_port─┬─remote_user─┬─upstream_addr─┬─upstream_port─┬─upstream_bytes_received─┬─upstream_bytes_sent─┬─upstream_cache_status─┬─upstream_connect_time─┬─upstream_header_time─┬─upstream_response_length─┬─upstream_response_time─┬─upstream_status─┬─upstream_content_type─┐

│ nginx-vector │ 2020-08-07 04:32:42 │ vhost1 │ │ GET / HTTP/1.0 │ 1server │ vhost1 │ / │ http │ GET │ 66 │ 0.028 │ │ 404 │ 27 │ │ 172.26.10.106 │ 45886 │ │ 172.26.10.106 │ 0 │ 109 │ 97 │ DISABLED │ 0 │ 0.025 │ 27 │ 0.029 │ 404 │ │

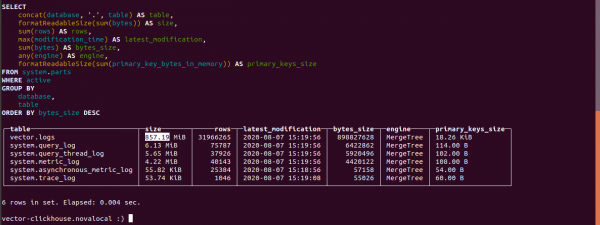

└──────────────┴─────────────────────┴─────────────┴─────────┴────────────────┴────────────────────┴───────────────────┴─────────────┴────────────────┴────────────────┴────────────────┴──────────────┴──────────────────┴─────────────────┴──────────────────────────┴───────────────────────┴───────────────┴─────────────┴─────────────┴───────────────┴───────────────┴─────────────────────────┴─────────────────────┴───────────────────────┴───────────────────────┴──────────────────────┴──────────────────────────┴────────────────────────┴─────────────────┴───────────────────────┘

Find out the size of the tables in Clickhouse

select concat(database, '.', table) as table,

formatReadableSize(sum(bytes)) as size,

sum(rows) as rows,

max(modification_time) as latest_modification,

sum(bytes) as bytes_size,

any(engine) as engine,

formatReadableSize(sum(primary_key_bytes_in_memory)) as primary_keys_size

from system.parts

where active

group by database, table

order by bytes_size desc;

Let's see how much space the logs took up in Clickhouse.

The logs table size is 857.19 MB.

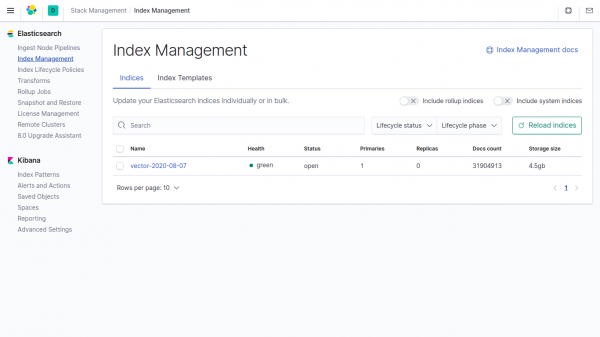

The same data in the Elasticsearch index takes 4.5 GB.

With no extra parameters set in Vector, the data in Clickhouse takes 4500/857.19 ≈ 5.25 times less space than in Elasticsearch.
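The ratio can be rechecked with a one-liner. This is only the arithmetic from the text, treating 4.5 GB as 4500 MB as the article does, and printing the result rounded to two decimal places.

```shell
# Elasticsearch index size (MB) divided by the Clickhouse table size (MB).
awk 'BEGIN { printf "%.2f\n", 4500 / 857.19 }'
```
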

Vector uses the compression field by default.


Source: will.com