Vector is a tool designed to collect, transform, and send log data, metrics, and events.


Because it is written in Rust, it offers high performance and low RAM consumption compared to its counterparts. In addition, a lot of attention is paid to reliability features, in particular the ability to save unsent events to an on-disk buffer and to handle file rotation.

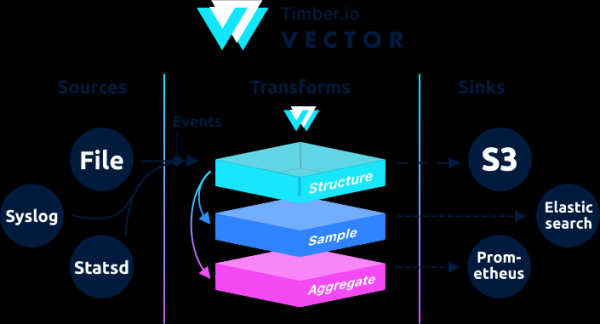

Architecturally, Vector is an event router that receives messages from one or more sources, optionally applies transforms to those messages, and sends them to one or more sinks.

Vector is a replacement for Filebeat and Logstash; it can act in both roles (receiving and sending logs).

If in Logstash the chain is built as input → filter → output, in Vector it is sources → transforms → sinks.
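To make the sources → transforms → sinks idea concrete, here is a minimal Python sketch of an event router. It is only an illustration of the concept, not how Vector is implemented; `run_pipeline` and `add_node_name` are hypothetical names, and the two lists stand in for the Clickhouse and Elasticsearch sinks.

```python
from typing import Callable, Dict, Iterable, List

Event = Dict[str, object]
Transform = Callable[[Event], Event]
Sink = Callable[[Event], None]


def run_pipeline(source: Iterable[Event],
                 transforms: List[Transform],
                 sinks: List[Sink]) -> None:
    """Pass every event through each transform in order, then fan it out to every sink."""
    for event in source:
        for transform in transforms:
            event = transform(event)
        for sink in sinks:
            sink(dict(event))  # each sink receives its own copy


def add_node_name(event: Event) -> Event:
    # Hypothetical transform: tag the event with a node name.
    return {**event, "node_name": "nginx-vector"}


# Two in-memory lists stand in for the real sinks.
clickhouse_rows: List[Event] = []
elasticsearch_docs: List[Event] = []

run_pipeline(
    source=[{"message": "GET / HTTP/1.0"}],
    transforms=[add_node_name],
    sinks=[clickhouse_rows.append, elasticsearch_docs.append],
)
print(clickhouse_rows)
```

The same event ends up in both lists, which is the fan-out used later in this guide when one Vector instance writes to Clickhouse and Elasticsearch at the same time.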

Examples can be found in the documentation.

This guide is a reworked version of an earlier one. The original instructions include geoip processing. When I tested geoip from an internal network, Vector reported an error.

Aug 05 06:25:31.889 DEBUG transform{name=nginx_parse_rename_fields type=rename_fields}: vector::transforms::rename_fields: Field did not exist field="geoip.country_name" rate_limit_secs=30

If anyone needs geoip processing, refer to the original instructions.

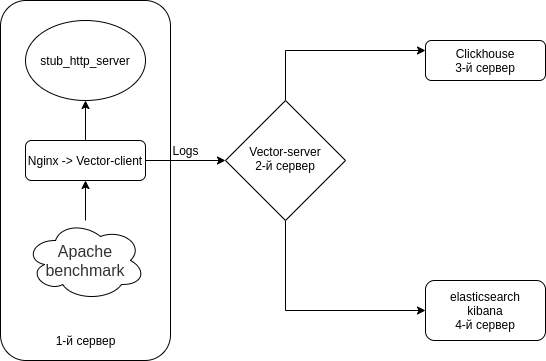

We will configure the chain Nginx (Access logs) → Vector (Client | Filebeat) → Vector (Server | Logstash) → separately into Clickhouse and separately into Elasticsearch. We will set up 4 servers, although you can get by with 3.

The layout looks roughly like this.

Disable SELinux on all your servers

sed -i 's/^SELINUX=.*/SELINUX=disabled/g' /etc/selinux/config

reboot

Install an HTTP server emulator and utilities on all servers

As the HTTP server emulator we will use nodejs-stub-server.

nodejs-stub-server has no rpm, so we build one for it. The rpm is built using Copr.

Add the antonpatsev/nodejs-stub-server repository

yum -y install yum-plugin-copr epel-release

yes | yum copr enable antonpatsev/nodejs-stub-server

Install nodejs-stub-server, Apache benchmark, and the screen terminal multiplexer on all servers

yum -y install stub_http_server screen mc httpd-tools

I adjusted the response time of stub_http_server in /var/lib/stub_http_server/stub_http_server.js so that more logs would be generated.

var max_sleep = 10;

Start stub_http_server.

systemctl start stub_http_server

systemctl enable stub_http_server

Installing ClickHouse on server 3

ClickHouse uses the SSE 4.2 instruction set, so unless stated otherwise, support for it in the CPU becomes an additional system requirement. This command checks whether the current processor supports SSE 4.2:

grep -q sse4_2 /proc/cpuinfo && echo "SSE 4.2 supported" || echo "SSE 4.2 not supported"

First you need to add the official repository:

sudo yum install -y yum-utils

sudo rpm --import https://repo.clickhouse.tech/CLICKHOUSE-KEY.GPG

sudo yum-config-manager --add-repo https://repo.clickhouse.tech/rpm/stable/x86_64

To install the packages, run the following command:

sudo yum install -y clickhouse-server clickhouse-client

Allow clickhouse-server to listen on the network interface in /etc/clickhouse-server/config.xml

<listen_host>0.0.0.0</listen_host>

Change the logging level from trace to debug

<level>debug</level>

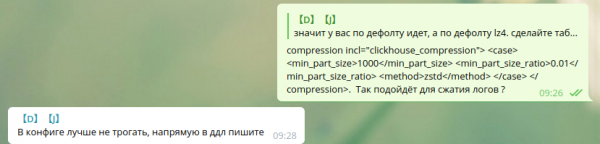

Standard compression settings:

min_compress_block_size 65536

max_compress_block_size 1048576

To enable Zstd compression, the advice was not to touch the config but to use DDL.

I could not find out via Google how to use zstd compression through DDL, so I left it as is.

Colleagues who use zstd compression in Clickhouse, please share instructions.
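For what it is worth, ClickHouse accepts a per-column `CODEC(ZSTD(level))` clause in DDL, both in `CREATE TABLE` and in `ALTER TABLE ... MODIFY COLUMN`. The sketch below only generates such statements as text for the `vector.logs` table created later in this guide; the helper name `zstd_alter_statements`, the chosen columns, and level 1 are assumptions for illustration, and none of this was tested against the setup described here.

```python
from typing import Dict, List


def zstd_alter_statements(table: str, columns: Dict[str, str], level: int = 1) -> List[str]:
    """Build one ALTER TABLE ... MODIFY COLUMN statement per column, adding a ZSTD codec."""
    return [
        f"ALTER TABLE {table} MODIFY COLUMN `{name}` {col_type} CODEC(ZSTD({level}));"
        for name, col_type in columns.items()
    ]


# Column names and types are taken from the vector.logs definition in this guide;
# which columns to compress is an arbitrary choice for the example.
statements = zstd_alter_statements(
    "vector.logs",
    {"request_uri": "String", "request_user_agent": "String"},
)
for statement in statements:
    print(statement)
```

The printed statements could then be pasted into clickhouse-client. The size comparison at the end of this guide was made without any such codec.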

To start the server as a daemon, run:

service clickhouse-server start

Now let's move on to configuring Clickhouse

Connect to Clickhouse

clickhouse-client -h 172.26.10.109 -m

172.26.10.109 is the IP of the server where Clickhouse is installed.

Create the vector database

CREATE DATABASE vector;

Check that the database exists.

show databases;

Create the vector.logs table.

/* This is the table where logs are stored as is */

CREATE TABLE vector.logs

(

`node_name` String,

`timestamp` DateTime,

`server_name` String,

`user_id` String,

`request_full` String,

`request_user_agent` String,

`request_http_host` String,

`request_uri` String,

`request_scheme` String,

`request_method` String,

`request_length` UInt64,

`request_time` Float32,

`request_referrer` String,

`response_status` UInt16,

`response_body_bytes_sent` UInt64,

`response_content_type` String,

`remote_addr` IPv4,

`remote_port` UInt32,

`remote_user` String,

`upstream_addr` IPv4,

`upstream_port` UInt32,

`upstream_bytes_received` UInt64,

`upstream_bytes_sent` UInt64,

`upstream_cache_status` String,

`upstream_connect_time` Float32,

`upstream_header_time` Float32,

`upstream_response_length` UInt64,

`upstream_response_time` Float32,

`upstream_status` UInt16,

`upstream_content_type` String,

INDEX idx_http_host request_http_host TYPE set(0) GRANULARITY 1

)

ENGINE = MergeTree()

PARTITION BY toYYYYMMDD(timestamp)

ORDER BY timestamp

TTL timestamp + toIntervalMonth(1)

SETTINGS index_granularity = 8192;

Check that the table was created. Start clickhouse-client and run a query.

Switch to the vector database.

use vector;

Ok.

0 rows in set. Elapsed: 0.001 sec.

Now look at the tables.

show tables;

┌─name────────────────┐

│ logs │

└─────────────────────┘

Installing elasticsearch on the 4th server, to send the same data to Elasticsearch for comparison with Clickhouse

Add the public rpm key

rpm --import https://artifacts.elastic.co/GPG-KEY-elasticsearch

Create 2 repos:

/etc/yum.repos.d/elasticsearch.repo

[elasticsearch]

name=Elasticsearch repository for 7.x packages

baseurl=https://artifacts.elastic.co/packages/7.x/yum

gpgcheck=1

gpgkey=https://artifacts.elastic.co/GPG-KEY-elasticsearch

enabled=0

autorefresh=1

type=rpm-md

/etc/yum.repos.d/kibana.repo

[kibana-7.x]

name=Kibana repository for 7.x packages

baseurl=https://artifacts.elastic.co/packages/7.x/yum

gpgcheck=1

gpgkey=https://artifacts.elastic.co/GPG-KEY-elasticsearch

enabled=1

autorefresh=1

type=rpm-md

Install elasticsearch and kibana

yum install -y kibana elasticsearch

Since it will run as a single instance, add the following to /etc/elasticsearch/elasticsearch.yml:

discovery.type: single-node

So that Vector can send data to elasticsearch from another server, change network.host.

network.host: 0.0.0.0

To connect to kibana, change the server.host parameter in /etc/kibana/kibana.yml

server.host: "0.0.0.0"

Enable elasticsearch at boot and start it

systemctl enable elasticsearch

systemctl start elasticsearch

And kibana

systemctl enable kibana

systemctl start kibana

Configure Elasticsearch for single-node mode: 1 shard, 0 replicas. Most likely you will have a cluster of many servers, in which case you do not need to do this.

For future indexes, update the default template:

curl -X PUT http://localhost:9200/_template/default -H 'Content-Type: application/json' -d '{"index_patterns": ["*"],"order": -1,"settings": {"number_of_shards": "1","number_of_replicas": "0"}}'

Installing Vector as a replacement for Logstash on server 2

yum install -y https://packages.timber.io/vector/0.9.X/vector-x86_64.rpm mc httpd-tools screen

Configure Vector as a replacement for Logstash. Edit the file /etc/vector/vector.toml

# /etc/vector/vector.toml

data_dir = "/var/lib/vector"

[sources.nginx_input_vector]

# General

type = "vector"

address = "0.0.0.0:9876"

shutdown_timeout_secs = 30

[transforms.nginx_parse_json]

inputs = [ "nginx_input_vector" ]

type = "json_parser"

[transforms.nginx_parse_add_defaults]

inputs = [ "nginx_parse_json" ]

type = "lua"

version = "2"

hooks.process = """

function (event, emit)

function split_first(s, delimiter)

result = {};

for match in (s..delimiter):gmatch("(.-)"..delimiter) do

table.insert(result, match);

end

return result[1];

end

function split_last(s, delimiter)

result = {};

for match in (s..delimiter):gmatch("(.-)"..delimiter) do

table.insert(result, match);

end

return result[#result];

end

event.log.upstream_addr = split_first(split_last(event.log.upstream_addr, ', '), ':')

event.log.upstream_bytes_received = split_last(event.log.upstream_bytes_received, ', ')

event.log.upstream_bytes_sent = split_last(event.log.upstream_bytes_sent, ', ')

event.log.upstream_connect_time = split_last(event.log.upstream_connect_time, ', ')

event.log.upstream_header_time = split_last(event.log.upstream_header_time, ', ')

event.log.upstream_response_length = split_last(event.log.upstream_response_length, ', ')

event.log.upstream_response_time = split_last(event.log.upstream_response_time, ', ')

event.log.upstream_status = split_last(event.log.upstream_status, ', ')

if event.log.upstream_addr == "" then

event.log.upstream_addr = "127.0.0.1"

end

if (event.log.upstream_bytes_received == "-" or event.log.upstream_bytes_received == "") then

event.log.upstream_bytes_received = "0"

end

if (event.log.upstream_bytes_sent == "-" or event.log.upstream_bytes_sent == "") then

event.log.upstream_bytes_sent = "0"

end

if event.log.upstream_cache_status == "" then

event.log.upstream_cache_status = "DISABLED"

end

if (event.log.upstream_connect_time == "-" or event.log.upstream_connect_time == "") then

event.log.upstream_connect_time = "0"

end

if (event.log.upstream_header_time == "-" or event.log.upstream_header_time == "") then

event.log.upstream_header_time = "0"

end

if (event.log.upstream_response_length == "-" or event.log.upstream_response_length == "") then

event.log.upstream_response_length = "0"

end

if (event.log.upstream_response_time == "-" or event.log.upstream_response_time == "") then

event.log.upstream_response_time = "0"

end

if (event.log.upstream_status == "-" or event.log.upstream_status == "") then

event.log.upstream_status = "0"

end

emit(event)

end

"""

[transforms.nginx_parse_remove_fields]

inputs = [ "nginx_parse_add_defaults" ]

type = "remove_fields"

fields = ["data", "file", "host", "source_type"]

[transforms.nginx_parse_coercer]

type = "coercer"

inputs = ["nginx_parse_remove_fields"]

types.request_length = "int"

types.request_time = "float"

types.response_status = "int"

types.response_body_bytes_sent = "int"

types.remote_port = "int"

types.upstream_bytes_received = "int"

types.upstream_bytes_sent = "int"

types.upstream_connect_time = "float"

types.upstream_header_time = "float"

types.upstream_response_length = "int"

types.upstream_response_time = "float"

types.upstream_status = "int"

types.timestamp = "timestamp"

[sinks.nginx_output_clickhouse]

inputs = ["nginx_parse_coercer"]

type = "clickhouse"

database = "vector"

healthcheck = true

host = "http://172.26.10.109:8123" # Clickhouse address

table = "logs"

encoding.timestamp_format = "unix"

buffer.type = "disk"

buffer.max_size = 104900000

buffer.when_full = "block"

request.in_flight_limit = 20

[sinks.elasticsearch]

type = "elasticsearch"

inputs = ["nginx_parse_coercer"]

compression = "none"

healthcheck = true

# 172.26.10.116 - server where elasticsearch is installed

host = "http://172.26.10.116:9200"

index = "vector-%Y-%m-%d"

You can adjust the transforms.nginx_parse_add_defaults section.

The original author uses these configs for a small CDN, where the upstream_* fields can contain several values.

For example:

"upstream_addr": "128.66.0.10:443, 128.66.0.11:443, 128.66.0.12:443"

"upstream_bytes_received": "-, -, 123"

"upstream_status": "502, 502, 200"אם זה לא המצב שלך, אז ניתן לפשט את הסעיף הזה

Create the service settings for systemd in /etc/systemd/system/vector.service

# /etc/systemd/system/vector.service

[Unit]

Description=Vector

After=network-online.target

Requires=network-online.target

[Service]

User=vector

Group=vector

ExecStart=/usr/bin/vector

ExecReload=/bin/kill -HUP $MAINPID

Restart=no

StandardOutput=syslog

StandardError=syslog

SyslogIdentifier=vector

[Install]

WantedBy=multi-user.target

After creating the tables, you can start Vector

systemctl enable vector

systemctl start vector

Vector logs can be viewed like this:

journalctl -f -u vector

The logs should contain entries like these

INFO vector::topology::builder: Healthcheck: Passed.

INFO vector::topology::builder: Healthcheck: Passed.

On the client (web server) - server 1

On the server with nginx you need to disable ipv6, because the logs table in clickhouse uses the IPv4 type for the upstream_addr field, since I do not use ipv6 inside the network. If ipv6 is not turned off, there will be errors:

DB::Exception: Invalid IPv4 value.: (while read the value of key upstream_addr)

Perhaps readers will add ipv6 support.
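The error comes down to a value that does not parse as an IPv4 address. The Python sketch below shows the same kind of check using the standard `ipaddress` module; `fits_ipv4_column` is a hypothetical helper, and `::1` is just an illustrative IPv6 value, not one taken from the logs.

```python
import ipaddress


def fits_ipv4_column(value: str) -> bool:
    """Return True if the value parses as an IPv4 address."""
    try:
        ipaddress.IPv4Address(value)
        return True
    except ValueError:  # AddressValueError is a subclass of ValueError
        return False


for candidate in ("172.26.10.106", "::1", ""):
    print(repr(candidate), fits_ipv4_column(candidate))
```

A pre-check like this could be used to spot problematic upstream_addr values in the JSON log before they reach Clickhouse.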

Create the file /etc/sysctl.d/98-disable-ipv6.conf

net.ipv6.conf.all.disable_ipv6 = 1

net.ipv6.conf.default.disable_ipv6 = 1

net.ipv6.conf.lo.disable_ipv6 = 1

Apply the settings

sysctl --system

Let's install nginx.

Add the nginx repository file /etc/yum.repos.d/nginx.repo

[nginx-stable]

name=nginx stable repo

baseurl=http://nginx.org/packages/centos/$releasever/$basearch/

gpgcheck=1

enabled=1

gpgkey=https://nginx.org/keys/nginx_signing.key

module_hotfixes=true

Install the nginx package

yum install -y nginx

First, we need to configure the log format in Nginx in /etc/nginx/nginx.conf

user nginx;

# you must set worker processes based on your CPU cores, nginx does not benefit from setting more than that

worker_processes auto; #some last versions calculate it automatically

# number of file descriptors used for nginx

# the limit for the maximum FDs on the server is usually set by the OS.

# if you don't set FD's then OS settings will be used which is by default 2000

worker_rlimit_nofile 100000;

error_log /var/log/nginx/error.log warn;

pid /var/run/nginx.pid;

# provides the configuration file context in which the directives that affect connection processing are specified.

events {

# determines how much clients will be served per worker

# max clients = worker_connections * worker_processes

# max clients is also limited by the number of socket connections available on the system (~64k)

worker_connections 4000;

# optimized to serve many clients with each thread, essential for linux -- for testing environment

use epoll;

# accept as many connections as possible, may flood worker connections if set too low -- for testing environment

multi_accept on;

}

http {

include /etc/nginx/mime.types;

default_type application/octet-stream;

log_format main '$remote_addr - $remote_user [$time_local] "$request" '

'$status $body_bytes_sent "$http_referer" '

'"$http_user_agent" "$http_x_forwarded_for"';

log_format vector escape=json

'{'

'"node_name":"nginx-vector",'

'"timestamp":"$time_iso8601",'

'"server_name":"$server_name",'

'"request_full": "$request",'

'"request_user_agent":"$http_user_agent",'

'"request_http_host":"$http_host",'

'"request_uri":"$request_uri",'

'"request_scheme": "$scheme",'

'"request_method":"$request_method",'

'"request_length":"$request_length",'

'"request_time": "$request_time",'

'"request_referrer":"$http_referer",'

'"response_status": "$status",'

'"response_body_bytes_sent":"$body_bytes_sent",'

'"response_content_type":"$sent_http_content_type",'

'"remote_addr": "$remote_addr",'

'"remote_port": "$remote_port",'

'"remote_user": "$remote_user",'

'"upstream_addr": "$upstream_addr",'

'"upstream_bytes_received": "$upstream_bytes_received",'

'"upstream_bytes_sent": "$upstream_bytes_sent",'

'"upstream_cache_status":"$upstream_cache_status",'

'"upstream_connect_time":"$upstream_connect_time",'

'"upstream_header_time":"$upstream_header_time",'

'"upstream_response_length":"$upstream_response_length",'

'"upstream_response_time":"$upstream_response_time",'

'"upstream_status": "$upstream_status",'

'"upstream_content_type":"$upstream_http_content_type"'

'}';

access_log /var/log/nginx/access.log main;

access_log /var/log/nginx/access.json.log vector; # New log in json format

sendfile on;

#tcp_nopush on;

keepalive_timeout 65;

#gzip on;

include /etc/nginx/conf.d/*.conf;

}

To avoid breaking your current configuration, Nginx allows several access_log directives

access_log /var/log/nginx/access.log main; # Standard log

access_log /var/log/nginx/access.json.log vector; # New log in json format

Don't forget to add a logrotate rule for the new logs (if the log file name does not end in .log)
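Every field in the vector log format defined in nginx.conf above is written as a JSON string, which is why the Vector server later runs a json_parser and a coercer over it. The Python sketch below does the equivalent for a few fields. The sample line is abbreviated and hypothetical: its values are borrowed from the sample row in the Clickhouse query output further down, and the +00:00 offset is an assumption.

```python
import json
from datetime import datetime

INT_FIELDS = ("request_length", "response_status", "response_body_bytes_sent", "remote_port")
FLOAT_FIELDS = ("request_time",)


def parse_access_line(line: str) -> dict:
    """Parse one JSON access-log line and coerce selected string fields to typed values."""
    record = json.loads(line)
    for name in INT_FIELDS:
        record[name] = int(record[name])
    for name in FLOAT_FIELDS:
        record[name] = float(record[name])
    # $time_iso8601 has the form 2020-08-07T04:32:42+00:00
    record["timestamp"] = datetime.fromisoformat(record["timestamp"])
    return record


# Abbreviated, hypothetical line: only a subset of the fields in the real format.
sample_line = (
    '{"node_name":"nginx-vector","timestamp":"2020-08-07T04:32:42+00:00",'
    '"request_length":"66","request_time":"0.028","response_status":"404",'
    '"response_body_bytes_sent":"27","remote_port":"45886"}'
)
record = parse_access_line(sample_line)
print(record)
```

Running a real line from /var/log/nginx/access.json.log through `json.loads` is also a quick way to confirm that the log_format produces valid JSON before pointing Vector at the file.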

Remove default.conf from /etc/nginx/conf.d/

rm -f /etc/nginx/conf.d/default.conf

Add virtual host /etc/nginx/conf.d/vhost1.conf

server {

listen 80;

server_name vhost1;

location / {

proxy_pass http://172.26.10.106:8080;

}

}

Add virtual host /etc/nginx/conf.d/vhost2.conf

server {

listen 80;

server_name vhost2;

location / {

proxy_pass http://172.26.10.108:8080;

}

}

Add virtual host /etc/nginx/conf.d/vhost3.conf

server {

listen 80;

server_name vhost3;

location / {

proxy_pass http://172.26.10.109:8080;

}

}

Add virtual host /etc/nginx/conf.d/vhost4.conf

server {

listen 80;

server_name vhost4;

location / {

proxy_pass http://172.26.10.116:8080;

}

}

Add the virtual hosts (172.26.10.106 is the IP of the server where nginx is installed) to /etc/hosts on all servers:

172.26.10.106 vhost1

172.26.10.106 vhost2

172.26.10.106 vhost3

172.26.10.106 vhost4

And if everything is ready, then

nginx -t

systemctl restart nginx

Now let's install Vector itself

yum install -y https://packages.timber.io/vector/0.9.X/vector-x86_64.rpm

Create the settings file for systemd in /etc/systemd/system/vector.service

[Unit]

Description=Vector

After=network-online.target

Requires=network-online.target

[Service]

User=vector

Group=vector

ExecStart=/usr/bin/vector

ExecReload=/bin/kill -HUP $MAINPID

Restart=no

StandardOutput=syslog

StandardError=syslog

SyslogIdentifier=vector

[Install]

WantedBy=multi-user.target

And configure the Filebeat replacement in /etc/vector/vector.toml. The IP address 172.26.10.108 is the address of the log server (Vector-Server)

data_dir = "/var/lib/vector"

[sources.nginx_file]

type = "file"

include = [ "/var/log/nginx/access.json.log" ]

start_at_beginning = false

fingerprinting.strategy = "device_and_inode"

[sinks.nginx_output_vector]

type = "vector"

inputs = [ "nginx_file" ]

address = "172.26.10.108:9876"

Don't forget to add the vector user to the appropriate group so that it can read the log files. For example, nginx on CentOS creates logs with adm group permissions.

usermod -a -G adm vector

Start the vector service

systemctl enable vector

systemctl start vector

Vector logs can be viewed like this:

journalctl -f -u vector

The logs should contain an entry like this

INFO vector::topology::builder: Healthcheck: Passed.

Load testing

Testing is done with Apache benchmark.

The httpd-tools package was installed on all servers earlier.

We run Apache benchmark from 4 different servers inside screen. First start the screen terminal multiplexer, then start the test with Apache benchmark.

From the 1st server

while true; do ab -H "User-Agent: 1server" -c 100 -n 10 -t 10 http://vhost1/; sleep 1; done

From the 2nd server

while true; do ab -H "User-Agent: 2server" -c 100 -n 10 -t 10 http://vhost2/; sleep 1; done

From the 3rd server

while true; do ab -H "User-Agent: 3server" -c 100 -n 10 -t 10 http://vhost3/; sleep 1; done

From the 4th server

while true; do ab -H "User-Agent: 4server" -c 100 -n 10 -t 10 http://vhost4/; sleep 1; done

Let's check the data in Clickhouse

Connect to Clickhouse

clickhouse-client -h 172.26.10.109 -m

Run an SQL query

SELECT * FROM vector.logs;

┌─node_name────┬───────────timestamp─┬─server_name─┬─user_id─┬─request_full───┬─request_user_agent─┬─request_http_host─┬─request_uri─┬─request_scheme─┬─request_method─┬─request_length─┬─request_time─┬─request_referrer─┬─response_status─┬─response_body_bytes_sent─┬─response_content_type─┬───remote_addr─┬─remote_port─┬─remote_user─┬─upstream_addr─┬─upstream_port─┬─upstream_bytes_received─┬─upstream_bytes_sent─┬─upstream_cache_status─┬─upstream_connect_time─┬─upstream_header_time─┬─upstream_response_length─┬─upstream_response_time─┬─upstream_status─┬─upstream_content_type─┐

│ nginx-vector │ 2020-08-07 04:32:42 │ vhost1 │ │ GET / HTTP/1.0 │ 1server │ vhost1 │ / │ http │ GET │ 66 │ 0.028 │ │ 404 │ 27 │ │ 172.26.10.106 │ 45886 │ │ 172.26.10.106 │ 0 │ 109 │ 97 │ DISABLED │ 0 │ 0.025 │ 27 │ 0.029 │ 404 │ │

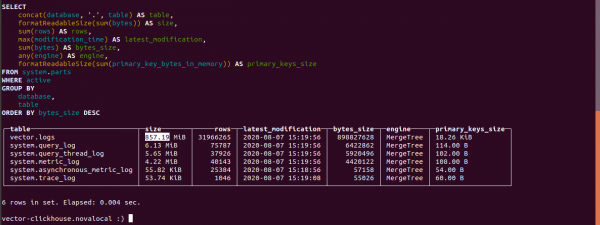

└──────────────┴─────────────────────┴─────────────┴─────────┴────────────────┴────────────────────┴───────────────────┴─────────────┴────────────────┴────────────────┴────────────────┴──────────────┴──────────────────┴─────────────────┴──────────────────────────┴───────────────────────┴───────────────┴─────────────┴─────────────┴───────────────┴───────────────┴─────────────────────────┴─────────────────────┴───────────────────────┴───────────────────────┴──────────────────────┴──────────────────────────┴────────────────────────┴─────────────────┴───────────────────────גלה את גודל השולחנות בקליקהאוס

select concat(database, '.', table) as table,

formatReadableSize(sum(bytes)) as size,

sum(rows) as rows,

max(modification_time) as latest_modification,

sum(bytes) as bytes_size,

any(engine) as engine,

formatReadableSize(sum(primary_key_bytes_in_memory)) as primary_keys_size

from system.parts

where active

group by database, table

order by bytes_size desc;

Let's find out how much space the logs take up in Clickhouse.

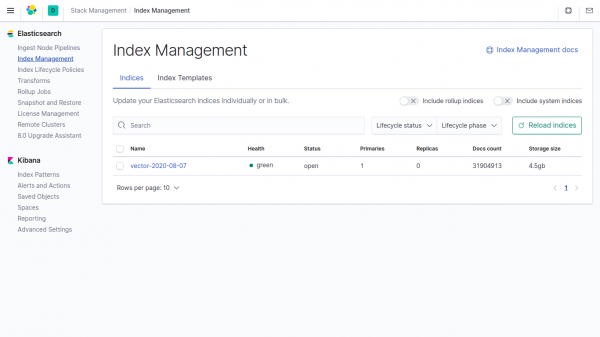

The size of the logs table is 857.19 MB.

The size of the same data in the Elasticsearch index is 4.5 GB.

If no extra parameters are specified in Vector, the data takes 4500/857.19 ≈ 5.25 times less space in Clickhouse than in Elasticsearch.

By default, Vector uses the compression field.
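The ratio in the size comparison above is plain division. The short Python check below reproduces it, taking 4.5 GB as 4500 MB exactly as the text does; the result is about 5.25 when rounded to two decimals (5.24 if truncated).

```python
clickhouse_mb = 857.19      # size of vector.logs reported by Clickhouse
elasticsearch_mb = 4500.0   # 4.5 GB, taken as 4500 MB as in the text

ratio = elasticsearch_mb / clickhouse_mb
print(f"Elasticsearch / Clickhouse = {ratio:.2f}")
```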


Source: www.habr.com