Hi, I'm Dmitry Logvinenko, a data engineer in the analytics department of the Vezet group of companies.

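I'm going to tell you about Apache Airflow, a wonderful tool for developing ETL processes. But Airflow is so versatile and multifaceted that it deserves a closer look even if you don't work with data flows and simply need to launch processes periodically and monitor their execution.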

And yes, I won't just tell you, I'll show you: there will be plenty of code, screenshots and recommendations.

What you usually see when you google the word Airflow / Wikimedia Commons


Introduction

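Apache Airflow is just like Django:

- it's written in Python,
- it has a great admin panel,
- it's infinitely extensible,

only better, and it was made for completely different purposes, namely (as stated before the cut):

- running and monitoring tasks on an unlimited number of machines (as many as Celery/Kubernetes and your conscience allow),
- dynamic workflow generation from Python code that is very easy to write and understand,
- and connecting any databases and APIs with each other, using both ready-made components and home-made plugins (which is extremely simple).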

We use Apache Airflow like this:

- we collect data from various sources (many SQL Server and PostgreSQL instances, various APIs with application metrics, even 1C) into our DWH and ODS (we have Vertica and ClickHouse);
- as a very advanced `cron`, which starts data consolidation processes on the ODS and also monitors their maintenance.

Until recently, our needs were covered by a single small server with 32 cores and 50 GB of RAM. In Airflow, this works out as:

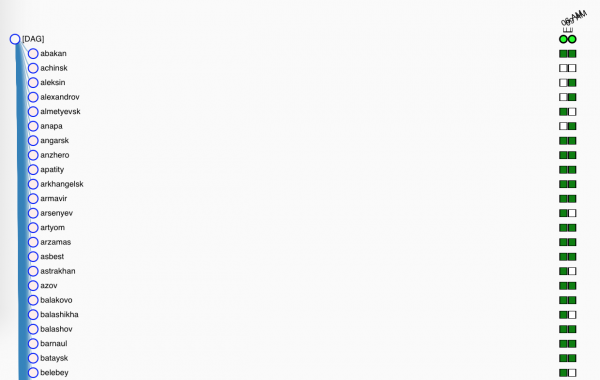

- more than 200 DAGs (actually workflows, into which we stuffed tasks),
- each with 70 tasks on average,
- and this goodness starts (also on average) about once an hour.

How we scaled, I'll describe below; for now, let's define the über-problem we'll be solving:

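There are 50 source SQL Servers, each hosting a number of databases (each database is an instance of the same project), so they all share the same structure (almost everywhere, mwa-ha-ha). That means each of them has an Orders table (luckily, a table with that name fits into any business). We take the data, add service fields (source server, source database, ETL task ID) and naively dump it into, say, Vertica.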
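The service-field tagging described above boils down to a per-row transformation. A minimal stdlib sketch, assuming rows arrive as dicts; the function name and the `etl_*` column names are hypothetical (the real pipeline later in the article does this with pandas):

```python
from typing import Dict, Iterable, Iterator


def add_service_fields(rows: Iterable[Dict], source_server: str,
                       source_db: str, etl_task_id: int) -> Iterator[Dict]:
    """Tag each source row with where it came from and which ETL run loaded it."""
    for row in rows:
        # Copy so the caller's dicts are left untouched
        tagged = dict(row)
        tagged['etl_source_server'] = source_server
        tagged['etl_source_db'] = source_db
        tagged['etl_task_id'] = etl_task_id
        yield tagged


orders = [{'id': 1, 'type': 2}, {'id': 2, 'type': 1}]
loaded = list(add_service_fields(orders, 'mssql_0', 'project_01', 42))
```

Because every row carries its origin, rows from different databases can later share one target table without losing track of where they came from.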

Let's go!

Main part: practical (and a little theoretical)

Why us (and why you)

Back when the trees were big and I was a simple SQL guy at a Russian retailer, we hacked together ETL processes, aka data flows, with the two tools available to us:

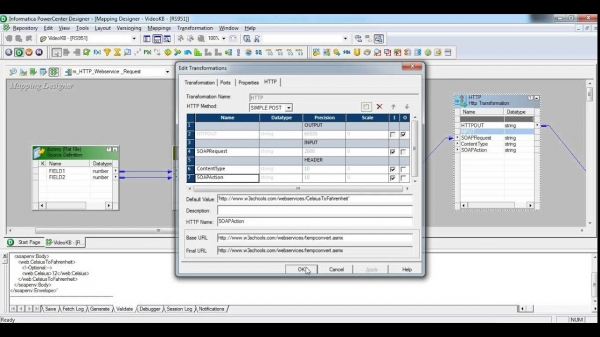

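- Informatica Power Center: an extremely widespread, extremely productive system with its own hardware and its own versioning. We used, God forbid, 1% of its capabilities. Why? Well, first of all, its interface, straight out of decades past, put mental pressure on us. Secondly, the contraption is designed for extremely fancy processes, furious component reuse and other very important enterprise tricks. And about the fact that it costs as much per year as the wing of an Airbus A380, we will say nothing.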

Careful: the screenshot may hurt people under 30 a little.

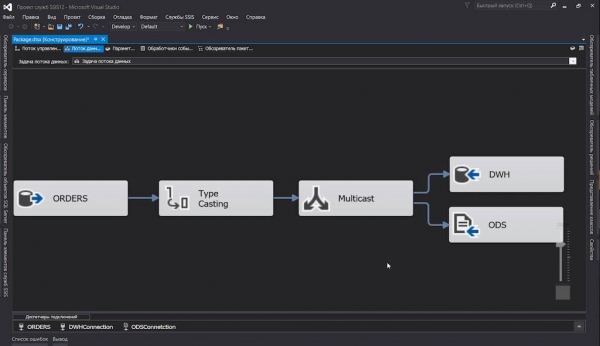

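- SQL Server Integration Services: we used this comrade for our intra-project flows. Well, we already used SQL Server, and it would somehow be unreasonable not to use its ETL tools. Everything in it is good: the interface is beautiful, and so are the progress reports... But that's not why we love software products, oh no, not for that. You can version its `dtsx` files (XML whose nodes get shuffled on every save), but what's the point? And how about building a task package that drags hundreds of tables from one server to another? A hundred of them, really: your index finger would fall off from all the mouse clicking. But it definitely looks more fashionable.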

We were definitely looking for a way out. We had even almost got as far as a home-made SSIS package generator...

...and then a new job found me. And there, Apache Airflow caught up with me.

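When I found out that an ETL process description is plain Python code, I all but danced for joy. That's how data flows got versioned and diffed, and pouring tables of a single structure from hundreds of databases into one target became a matter of Python code spanning one and a half or two 13-inch screens.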

Assembling the cluster

Let's not turn this into a kindergarten and talk about completely obvious things like installing Airflow, your chosen database, Celery and the other matters described in the docs.

So that you can start experimenting right away, I sketched a `docker-compose.yml` in which:

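- we bring up Airflow itself: Scheduler and Webserver. Flower will also spin there to monitor Celery tasks (it's already packed into `apache/airflow:1.10.10-python3.7`, so why not);
- PostgreSQL, into which Airflow writes its service information (scheduler data, execution statistics, etc.) and where Celery marks completed tasks;
- Redis, which acts as the task broker for Celery;
- Celery workers, which do the actual execution of tasks;
- into the `./dags` folder we put our files with DAG descriptions. They are picked up on the fly, so there's no need to juggle the whole stack after every sneeze.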

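In places, the example code is not shown in full (so as not to clutter the text), and in places it is modified along the way. Complete working code examples can be found in the repository.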

docker-compose.yml

version: '3.4'

x-airflow-config: &airflow-config
  AIRFLOW__CORE__DAGS_FOLDER: /dags
  AIRFLOW__CORE__EXECUTOR: CeleryExecutor
  AIRFLOW__CORE__FERNET_KEY: MJNz36Q8222VOQhBOmBROFrmeSxNOgTCMaVp2_HOtE0=
  AIRFLOW__CORE__HOSTNAME_CALLABLE: airflow.utils.net:get_host_ip_address
  AIRFLOW__CORE__SQL_ALCHEMY_CONN: postgres+psycopg2://airflow:airflow@airflow-db:5432/airflow

  AIRFLOW__CORE__PARALLELISM: 128
  AIRFLOW__CORE__DAG_CONCURRENCY: 16
  AIRFLOW__CORE__MAX_ACTIVE_RUNS_PER_DAG: 4
  AIRFLOW__CORE__LOAD_EXAMPLES: 'False'
  AIRFLOW__CORE__LOAD_DEFAULT_CONNECTIONS: 'False'

  AIRFLOW__EMAIL__DEFAULT_EMAIL_ON_RETRY: 'False'
  AIRFLOW__EMAIL__DEFAULT_EMAIL_ON_FAILURE: 'False'

  AIRFLOW__CELERY__BROKER_URL: redis://broker:6379/0
  AIRFLOW__CELERY__RESULT_BACKEND: db+postgresql://airflow:airflow@airflow-db/airflow

x-airflow-base: &airflow-base
  image: apache/airflow:1.10.10-python3.7
  entrypoint: /bin/bash
  restart: always
  volumes:
    - ./dags:/dags
    - ./requirements.txt:/requirements.txt

services:
  # Redis as a Celery broker
  broker:
    image: redis:6.0.5-alpine

  # DB for the Airflow metadata
  airflow-db:
    image: postgres:10.13-alpine
    environment:
      - POSTGRES_USER=airflow
      - POSTGRES_PASSWORD=airflow
      - POSTGRES_DB=airflow
    volumes:
      - ./db:/var/lib/postgresql/data

  # Main container with Airflow Webserver, Scheduler, Celery Flower
  airflow:
    <<: *airflow-base
    environment:
      <<: *airflow-config
      AIRFLOW__SCHEDULER__DAG_DIR_LIST_INTERVAL: 30
      AIRFLOW__SCHEDULER__CATCHUP_BY_DEFAULT: 'False'
      AIRFLOW__SCHEDULER__MAX_THREADS: 8
      AIRFLOW__WEBSERVER__LOG_FETCH_TIMEOUT_SEC: 10
    depends_on:
      - airflow-db
      - broker
    command: >
      -c " sleep 10 &&
      pip install --user -r /requirements.txt &&
      /entrypoint initdb &&
      (/entrypoint webserver &) &&
      (/entrypoint flower &) &&
      /entrypoint scheduler"
    ports:
      # Celery Flower
      - 5555:5555
      # Airflow Webserver
      - 8080:8080

  # Celery worker, will be scaled using `--scale=n`
  worker:
    <<: *airflow-base
    environment:
      <<: *airflow-config
    command: >
      -c " sleep 10 &&
      pip install --user -r /requirements.txt &&
      /entrypoint worker"
    depends_on:
      - airflow
      - airflow-db
      - broker

Notes:

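- For assembling the composition, I relied heavily on a well-known image; be sure to check it out. Maybe you won't need anything else in life.
- All Airflow settings are available not only through `airflow.cfg` but also through environment variables (thanks to the developers), which I maliciously took advantage of.
- Naturally, this is not production-ready: I deliberately didn't put heartbeats on the containers and didn't bother with security. But I did the minimum that suits our experimenters.
- Note that:
  - the DAG folder must be accessible to both the scheduler and the workers;
  - the same goes for all third-party libraries: they must be installed on every machine that runs a scheduler or a worker.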

Well, now it's simple:

$ docker-compose up --scale worker=3

Once everything is up, you can look at the web interfaces:

- Airflow: http://localhost:8080/
- Flower: http://localhost:5555/

Concepts

If you didn't understand a thing in all these "DAGs", here's a short dictionary:

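- Scheduler: the most important uncle in Airflow, making sure that robots do the hard work, not a human: it watches the schedule, updates DAGs and launches tasks.

  Older versions had memory problems (no, not amnesia: leaks), and the configs even kept a legacy parameter, `run_duration`, the restart interval. But now everything is fine.

- DAG (aka "dag"): a "directed acyclic graph", though that definition tells few people anything. In practice it's a container for tasks that interact with each other (see below), the analogue of a Package in SSIS or a Workflow in Informatica.

  Besides DAGs there can also be SubDAGs, but we most likely won't get to them.

- DAG Run: an initialized DAG with its own assigned `execution_date`. DAG runs of the same DAG can run in parallel (provided you made your tasks idempotent, of course).

- Operator: a piece of code responsible for performing a specific action. There are three kinds of operators:

  - action, like our beloved `PythonOperator`, which can execute any (valid) Python code;
  - transfer, which moves data from place to place, for example `MsSqlToHiveTransfer`;
  - sensor, which lets you react to an event or hold back further execution of the DAG until it happens. `HttpSensor` can poll a given endpoint and, once the expected response arrives, start the `GoogleCloudStorageToS3Operator` transfer. An inquisitive mind will ask: "Why? You could just do the retries right inside the operator!" The point is not to clog the task pool with hanging operators: in reschedule mode, a sensor starts, checks, and exits until the next attempt.

- Task: a declared operator, whatever its type, is promoted to the rank of task once it is attached to a DAG.

- Task instance: when the scheduler decides it's time to send tasks into battle on the workers (right on the spot with `LocalExecutor`, or on a remote node with `CeleryExecutor`), it assigns them a context (a set of variables, i.e. the execution parameters), expands command or query templates, and puts them into the pool.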
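The sensor idea can be pictured as a polling loop. A minimal pure-Python sketch, not Airflow code: `wait_for` and its arguments are hypothetical, loosely mirroring the `poke_interval` and `timeout` parameters of Airflow sensors, and the comment marks where a poke-mode sensor and a reschedule-mode sensor would differ:

```python
import time
from typing import Callable


def wait_for(condition: Callable[[], bool], poke_interval: float,
             timeout: float) -> bool:
    """Check `condition` every `poke_interval` seconds until it holds or
    `timeout` seconds have passed; return whether it ever held."""
    deadline = time.monotonic() + timeout
    while True:
        if condition():
            return True
        if time.monotonic() >= deadline:
            return False
        # A poke-mode sensor sleeps here while holding its worker slot;
        # a reschedule-mode sensor would instead exit and be re-queued.
        time.sleep(poke_interval)


# Usage: a fake endpoint that becomes "ready" on the third check
attempts = {'n': 0}


def endpoint_ready() -> bool:
    attempts['n'] += 1
    return attempts['n'] >= 3


ready = wait_for(endpoint_ready, poke_interval=0.01, timeout=1.0)
```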

Generating tasks

First, let's outline the general scheme of our DAG, and then we'll dive deeper and deeper into the details, because we apply a few non-trivial solutions.

So, in its simplest form, such a DAG looks like this:

from datetime import timedelta, datetime

from airflow import DAG
from airflow.operators.python_operator import PythonOperator

from commons.datasources import sql_server_ds

dag = DAG('orders',
          schedule_interval=timedelta(hours=6),
          start_date=datetime(2020, 7, 8, 0))


def workflow(**context):
    print(context)


for conn_id, schema in sql_server_ds:
    PythonOperator(
        task_id=schema,
        python_callable=workflow,
        provide_context=True,
        dag=dag)

Let's figure it out:

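- first, we import the necessary libraries and something else;
- `sql_server_ds` is a `List[namedtuple[str, str]]` holding the names of the connections from Airflow Connections and the databases from which we will take our table;
- `dag` is the declaration of our DAG, which must be in `globals()`, otherwise Airflow will not find it. The DAG also needs to be told:
  - its name, `orders`: this name will appear in the web interface,
  - that it starts working from midnight on July 8,
  - and that it should run roughly every 6 hours (tough guys can pass a cron string such as `0 */6 * * *` instead of `timedelta()`; the less cool, a preset like `@daily`);
- `workflow()` will do the main job, but not yet. For now, it just dumps the context into the log.
- And now the simple magic of creating tasks:
  - we loop over our sources;
  - we create a `PythonOperator` that will run our dummy `workflow()`. Don't forget to give the task a name that is unique (within the DAG) and to bind the DAG itself. The `provide_context` flag makes Airflow pass extra arguments into the function, which we carefully collect with `**context`.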
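`commons.datasources` itself is not shown in the article. A hypothetical version matching the description (a list of `(connection name, database)` named tuples) might look like this; the connection and database names are made up. Since the DAG uses `task_id=schema`, database names have to be unique across servers, so the sketch checks that:

```python
from collections import namedtuple
from typing import List

DataSource = namedtuple('DataSource', 'conn_id schema')

# Hypothetical: three Airflow connections (mssql_0..mssql_2),
# two project databases on each, with globally unique names
sql_server_ds: List[DataSource] = [
    DataSource(f'mssql_{i // 2}', f'project_{i:02d}') for i in range(6)
]

# task_id=schema in the DAG, so schema names must not repeat
assert len({ds.schema for ds in sql_server_ds}) == len(sql_server_ds)
```

Unpacking still works as in the DAG file: `for conn_id, schema in sql_server_ds: ...`.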

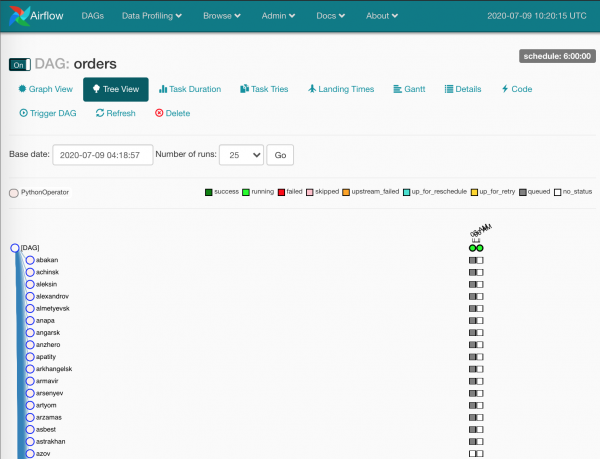

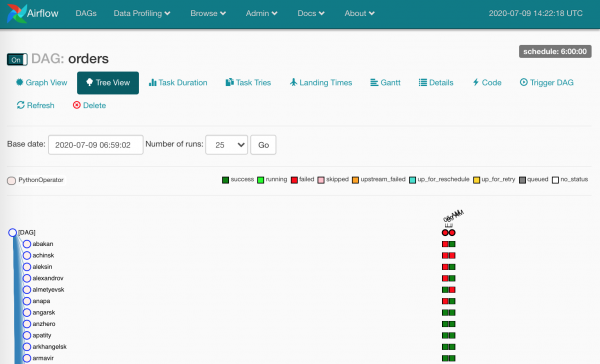

That's all for now. What we got:

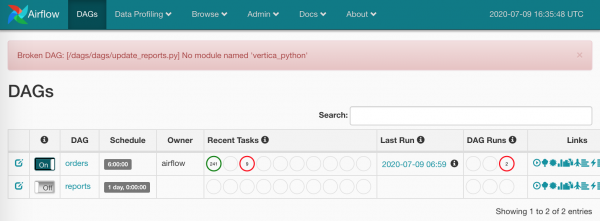

- a new DAG in the web interface,
- one task per source database, all running in parallel (as far as the Airflow and Celery settings and the server capacity allow).

Well, almost got it.

Who will install the dependencies?

To simplify this whole thing, I wired the processing of `requirements.txt` on all nodes into `docker-compose.yml`.

Now it's off and running:

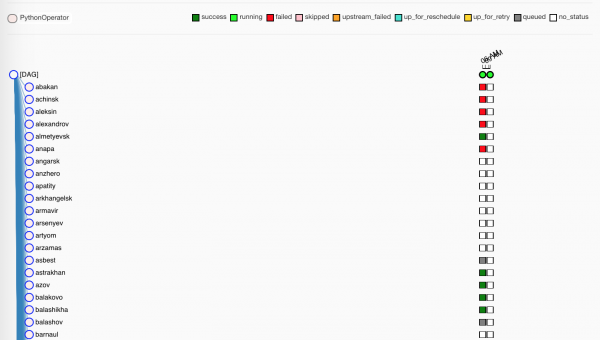

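The gray squares are task instances being handled by the scheduler.

We wait a bit, and the tasks get snapped up by the workers:

The green ones, of course, have finished their work successfully. The red ones, not so much.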

By the way, on production there is no `./dags` folder and no syncing between machines: all DAGs live in `git` on our Gitlab, and Gitlab CI rolls updates out to the machines on every merge into `master`.

A little about Flower

While the workers are pounding away at our dummy tasks, let's recall one more tool that can show us something: Flower.

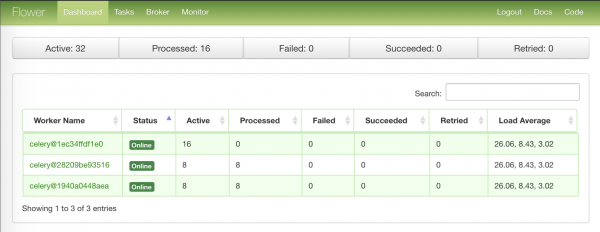

The very first page, with summary information on the worker nodes:

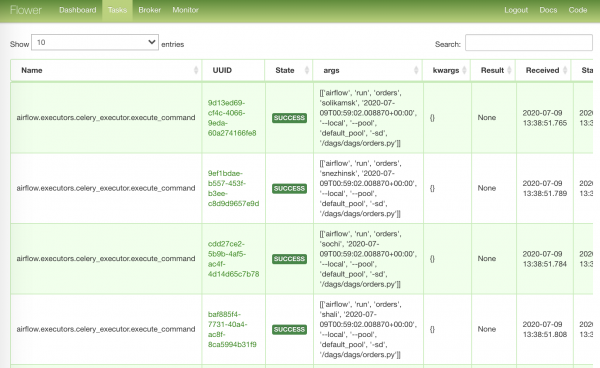

The most intense page, with the tasks that went to work:

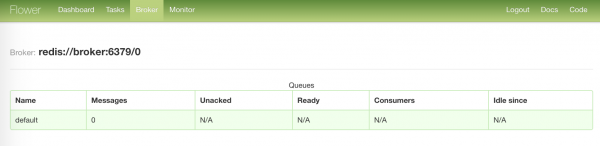

The most boring page, with the status of our broker:

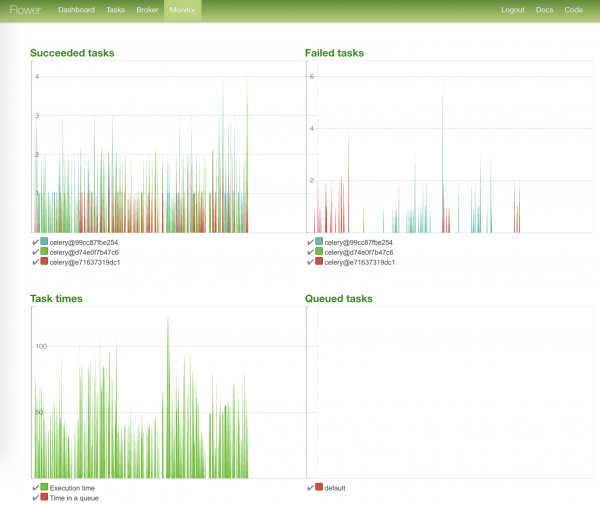

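The brightest page, with task status graphs and their execution times:

Loading what didn't load

So, all the tasks have run; time to carry away the wounded.

And there turned out to be quite a few wounded, for one reason or another. When Airflow is used correctly, these very squares tell you that the data definitely didn't arrive.

You need to check the log and restart the failed task instances.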

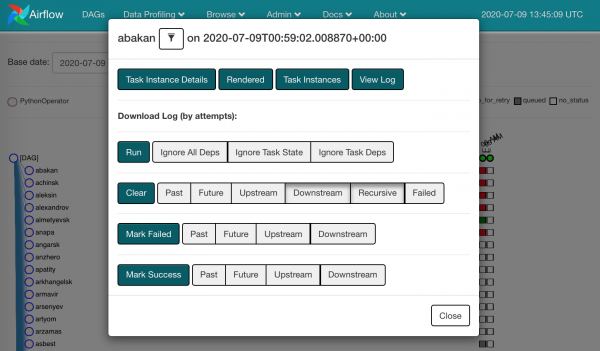

Clicking on any square shows the actions available to us:

You can take a fallen one and Clear it: that is, we forget that something failed there, and the same task instance goes back to the scheduler.

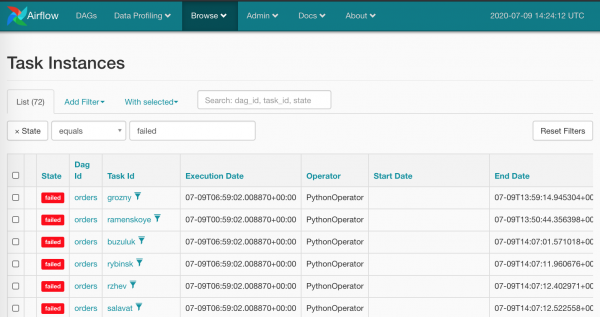

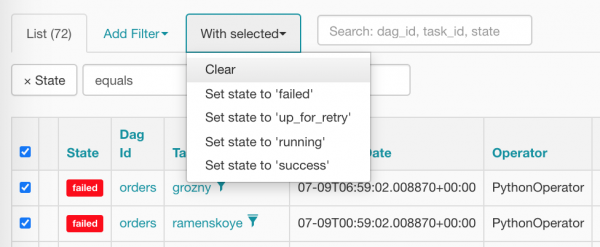

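Clearly, doing this with the mouse for every red square is not very humane; that's not what we expect from Airflow. Naturally, we have weapons of mass destruction: `Browse/Task Instances`.

Let's select everything at once, reset it to zero and click the right item:

After clearing, our tasks look like this (they are already waiting for the scheduler to schedule them):

Connections, hooks and other variables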

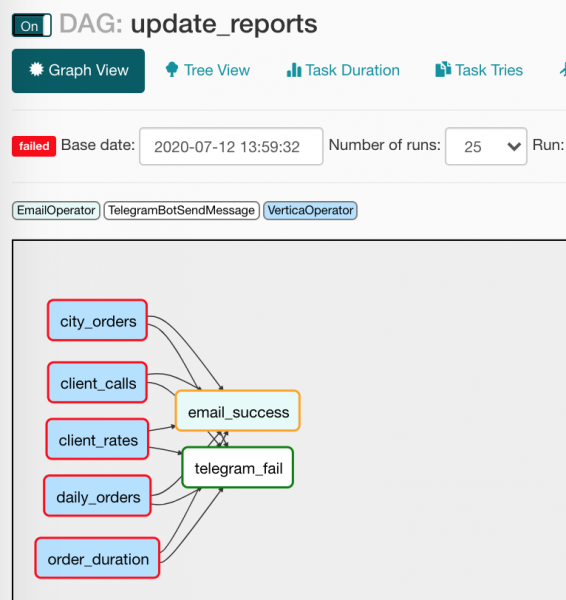

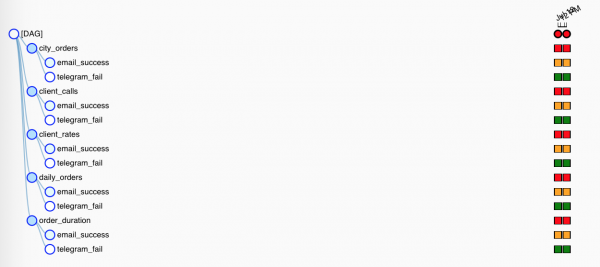

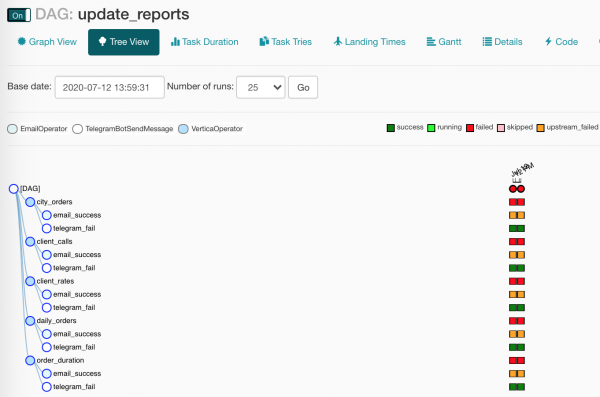

It's time to look at the next DAG, `update_reports.py`:

from collections import namedtuple
from datetime import datetime, timedelta
from textwrap import dedent

from airflow import DAG
from airflow.contrib.operators.vertica_operator import VerticaOperator
from airflow.operators.email_operator import EmailOperator
from airflow.utils.trigger_rule import TriggerRule

from commons.operators import TelegramBotSendMessage

dag = DAG('update_reports',
          start_date=datetime(2020, 6, 7, 6),
          schedule_interval=timedelta(days=1),
          default_args={'retries': 3, 'retry_delay': timedelta(seconds=10)})

Report = namedtuple('Report', 'source target')
reports = [Report(f'{table}_view', table) for table in [
    'reports.city_orders',
    'reports.client_calls',
    'reports.client_rates',
    'reports.daily_orders',
    'reports.order_duration']]

email = EmailOperator(
    task_id='email_success', dag=dag,
    to='{{ var.value.all_the_kings_men }}',
    subject='DWH Reports updated',
    html_content=dedent("""Dear sirs, the reports have been updated"""),
    trigger_rule=TriggerRule.ALL_SUCCESS)

tg = TelegramBotSendMessage(
    task_id='telegram_fail', dag=dag,
    tg_bot_conn_id='tg_main',
    chat_id='{{ var.value.failures_chat }}',
    message=dedent("""
        Natasha, wake up, we dropped {{ dag.dag_id }}
        """),
    trigger_rule=TriggerRule.ONE_FAILED)

for source, target in reports:
    queries = [f"TRUNCATE TABLE {target}",
               f"INSERT INTO {target} SELECT * FROM {source}"]

    report_update = VerticaOperator(
        task_id=target.replace('reports.', ''),
        sql=queries, vertica_conn_id='dwh',
        task_concurrency=1, dag=dag)

    report_update >> [email, tg]

Ever had to update reports? This is the same story: there's a list of sources to take the data from, there's a list of where to put it, and don't forget to honk when everything worked out or broke (well, this isn't about us, no).

Let's go through the file again and look at what's new and unclear:

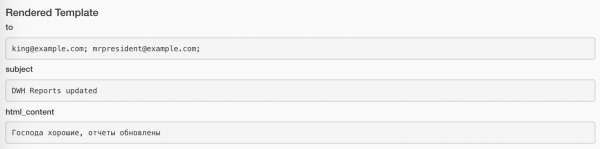

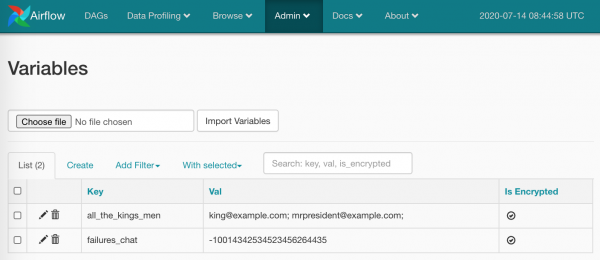

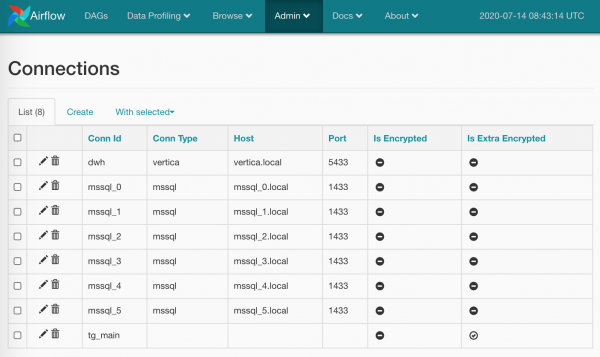

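- `from commons.operators import TelegramBotSendMessage`: nothing prevents us from making our own operators, which we took advantage of by writing a small wrapper for sending messages to Telegram (more on this operator below);
- `default_args={}`: a DAG can hand the same arguments to all of its operators;
- `to='{{ var.value.all_the_kings_men }}'`: the `to` field is not hardcoded but generated dynamically with Jinja from a variable holding the list of emails, which I carefully put into `Admin/Variables`;
- `trigger_rule=TriggerRule.ALL_SUCCESS`: the condition for starting the operator. In our case, the letter flies off to the bosses only if all dependencies have worked out successfully;
- `tg_bot_conn_id='tg_main'`: `conn_id` arguments accept the IDs of connections we create in `Admin/Connections`;
- `trigger_rule=TriggerRule.ONE_FAILED`: the Telegram message flies off only if some task has failed;
- `task_concurrency=1`: forbids running several instances of the same task at once. Otherwise, several `VerticaOperator`s could end up running at the same time (against one table);
- `report_update >> [email, tg]`: all the `VerticaOperator`s converge on sending the letter and the message, like this:

But since the notifier operators have different launch conditions, only one of them will fire. In the Tree View, everything looks a little less visual: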
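To see why exactly one notifier fires, here is a toy model of `>>` and of the two trigger rules used above. It is a sketch, not Airflow's implementation: the `Task` class and `should_run` are hypothetical, only the rule names `'all_success'` and `'one_failed'` match the string values of `TriggerRule.ALL_SUCCESS` and `TriggerRule.ONE_FAILED`, and it assumes all upstream tasks have already finished (real `one_failed` fires as soon as one parent fails, without waiting for the rest):

```python
from typing import Dict, List, Union


class Task:
    """Toy stand-in for an Airflow operator, not the real class:
    just an id, a trigger rule and a list of upstream tasks."""

    def __init__(self, task_id: str, trigger_rule: str = 'all_success'):
        self.task_id = task_id
        self.trigger_rule = trigger_rule
        self.upstream: List['Task'] = []

    def __rshift__(self, other: Union['Task', List['Task']]):
        # `a >> b` and `a >> [b, c]` make `a` upstream of the right-hand side
        targets = other if isinstance(other, list) else [other]
        for target in targets:
            target.upstream.append(self)
        return other


def should_run(task: Task, states: Dict[str, str]) -> bool:
    """Evaluate the two trigger rules used in update_reports.py."""
    upstream_states = [states[t.task_id] for t in task.upstream]
    if task.trigger_rule == 'all_success':
        return all(s == 'success' for s in upstream_states)
    if task.trigger_rule == 'one_failed':
        return any(s == 'failed' for s in upstream_states)
    raise ValueError(f'trigger rule not modelled: {task.trigger_rule}')


email = Task('email_success', 'all_success')
tg = Task('telegram_fail', 'one_failed')
for name in ['city_orders', 'client_calls', 'daily_orders']:
    Task(name) >> [email, tg]

states = {'city_orders': 'success', 'client_calls': 'failed',
          'daily_orders': 'success'}
```

With one failed report, `should_run(email, states)` is false and `should_run(tg, states)` is true; when everything succeeds it is the other way round.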

A few words about macros and their friends, variables.

Macros are Jinja placeholders that substitute various useful information into operator arguments. For example:

SELECT
    id,
    payment_dtm,
    payment_type,
    client_id
FROM orders.payments
WHERE
    payment_dtm::DATE = '{{ ds }}'::DATE

`{{ ds }}` expands to the value of the context variable `execution_date` in the `YYYY-MM-DD` format: `2020-07-14`. The best part is that context variables are pinned to a specific task instance (a square in the Tree View), so on a restart the placeholders expand to the same values.
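To make the expansion concrete: `ds` is just `execution_date` formatted as `YYYY-MM-DD`. The sketch below is a stdlib stand-in for Jinja that handles only this one placeholder (a hypothetical helper, not how Airflow actually renders templates), and it shows why a restart of the same task instance renders the same query:

```python
from datetime import datetime


def render_ds(template: str, execution_date: datetime) -> str:
    """Replace the {{ ds }} placeholder with execution_date as YYYY-MM-DD."""
    ds = execution_date.strftime('%Y-%m-%d')
    return template.replace('{{ ds }}', ds)


query = ("SELECT id, payment_dtm, payment_type, client_id "
         "FROM orders.payments "
         "WHERE payment_dtm::DATE = '{{ ds }}'::DATE")

run_date = datetime(2020, 7, 14, 6, 0)
first = render_ds(query, run_date)
# A restart of the same task instance keeps the same execution_date,
# so the query renders identically
again = render_ds(query, run_date)
```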

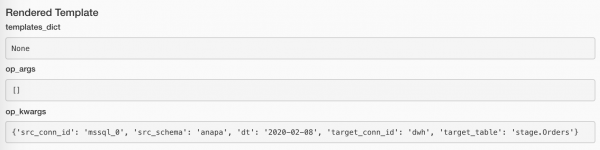

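The substituted values can be viewed with the Rendered button of each task instance. Here is the task that sends the letter:

And here is the task that sends the message:

A complete list of built-in macros for the latest available version is in the official documentation.

Moreover, with plugins we can declare our own macros, but that's another story.

Besides the predefined ones, we can also substitute the values of our own variables (I already used this in the code above). Let's create a couple of things in `Admin/Variables`:

Now they can be used anywhere:

TelegramBotSendMessage(chat_id='{{ var.value.failures_chat }}')

A value can be a scalar or JSON. In the case of JSON: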

bot_config

{
    "bot": {
        "token": "881hskdfASDA16641",
        "name": "Verter"
    },
    "service": "TG"
}

just use the path to the desired key: `{{ var.json.bot_config.bot.token }}`.
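The dotted path in `{{ var.json.bot_config.bot.token }}` is simply a walk through nested keys of the parsed JSON. A stdlib sketch of that lookup (the `lookup` helper is hypothetical; in Airflow it is Jinja attribute access on the deserialized variable that does this):

```python
import json
from functools import reduce

bot_config = json.loads('''
{
    "bot": {"token": "881hskdfASDA16641", "name": "Verter"},
    "service": "TG"
}
''')


def lookup(obj, dotted_path: str):
    """Walk nested dicts the way 'var.json.bot_config.bot.token' reads."""
    return reduce(lambda node, key: node[key], dotted_path.split('.'), obj)


token = lookup(bot_config, 'bot.token')
```

Note that the token must be a JSON string: an unquoted value like `881hskdfASDA16641` would not parse.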

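Literally one word and one screenshot about connections. Everything is elementary here: on the `Admin/Connections` page we create a connection and put our logins/passwords and more specific parameters there. Like this:

Passwords can be encrypted (more thoroughly than by default), and you can leave out the connection type (as I did for `tg_main`): the list of types is hardwired into Airflow's models and cannot be extended without getting into the source code (if I failed to google something, please correct me), but nothing stops us from fetching the credentials just by name.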

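You can also create several connections with the same name: in that case `BaseHook.get_connection()`, which fetches connections by name, will return a random one of the namesakes (round robin would be more logical, but let's leave that on the conscience of the Airflow developers).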
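The difference between the two strategies is easy to see in plain Python. A sketch with hypothetical host names: `random.choice` stands for the random pick described above, and `itertools.cycle` for the round robin the author would prefer:

```python
import itertools
import random

# Hypothetical hosts behind several connections sharing one conn_id
hosts = ['mssql-a', 'mssql-b', 'mssql-c']


def pick_random(candidates):
    # Each call is independent, so the same host can come up repeatedly
    return random.choice(candidates)


# Round robin: walk the list in order and wrap around
round_robin = itertools.cycle(hosts)
picked = [next(round_robin) for _ in range(4)]
```

Random picking spreads load only on average; round robin guarantees each host gets its turn.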

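Variables and Connections are certainly cool tools, but it's important to keep the balance: which parts of your flows live in the code itself and which you hand over to Airflow for storage. On the one hand, it can be convenient to quickly change a value, say a mailbox, through the UI. On the other hand, that's still a return to the mouse clicking we (I) wanted to get rid of.

Working with connections is one of the jobs of hooks. In general, Airflow hooks are the points for connecting to third-party services and libraries. For example, `JiraHook` opens a client for talking to Jira (you can move issues back and forth), and `SambaHook` lets you push a local file to an `smb` share.

Dissecting the custom operator

And so we've come close to seeing how `TelegramBotSendMessage` is made.

The code of `commons/operators.py` with the operator itself: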

from typing import Union

from airflow.operators import BaseOperator

from commons.hooks import TelegramBotHook, TelegramBot


class TelegramBotSendMessage(BaseOperator):
    """Send message to chat_id using TelegramBotHook

    Example:
        >>> TelegramBotSendMessage(
        ...     task_id='telegram_fail', dag=dag,
        ...     tg_bot_conn_id='tg_bot_default',
        ...     chat_id='{{ var.value.all_the_young_dudes_chat }}',
        ...     message='{{ dag.dag_id }} failed :(',
        ...     trigger_rule=TriggerRule.ONE_FAILED)
    """
    # Attributes that Jinja renders before execute() is called
    template_fields = ['chat_id', 'message']

    def __init__(self,
                 chat_id: Union[int, str],
                 message: str,
                 tg_bot_conn_id: str = 'tg_bot_default',
                 *args, **kwargs):
        super().__init__(*args, **kwargs)

        # Note: this runs whenever the DAG file is parsed, so the connection
        # is looked up in the metadata DB at parse time, not only at run time
        self._hook = TelegramBotHook(tg_bot_conn_id)
        self.client: TelegramBot = self._hook.client
        self.chat_id = chat_id
        self.message = message

    def execute(self, context):
        print(f'Send "{self.message}" to the chat {self.chat_id}')
        self.client.send_message(chat_id=self.chat_id,
                                 message=self.message)

Here, as with everything else in Airflow, it's all very simple:

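- we inherit from `BaseOperator`, which implements quite a few Airflow-specific things (have a look at your leisure);
- we declare `template_fields`, in which Jinja will look for macros to process;
- we lay out the right arguments for `__init__()` and set defaults where needed;
- we don't forget to initialize the ancestor either;
- we open the corresponding hook, `TelegramBotHook`, and get a client object from it;
- we override the method `BaseOperator.execute()`, which Airflow will twitch when the time comes to launch the operator: in it we implement the main action, not forgetting to log. (By the way, we log straight to `stdout` and `stderr`: Airflow intercepts everything, wraps it beautifully and puts it where needed.)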
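What `template_fields` buys you can be shown without Airflow. A hypothetical toy class (not Airflow code; `string.Template`'s `$name` syntax stands in for Jinja's `{{ name }}`) that renders only the attributes listed in `template_fields`, leaving everything else untouched:

```python
from string import Template


class ToyOperator:
    """Hypothetical, not Airflow: shows how only the attributes listed in
    template_fields get rendered before execute() runs."""

    template_fields = ['chat_id', 'message']

    def __init__(self, chat_id, message, conn_id):
        self.chat_id = chat_id
        self.message = message
        self.conn_id = conn_id  # not in template_fields: left untouched

    def render_fields(self, context: dict) -> None:
        for field in self.template_fields:
            value = getattr(self, field)
            if isinstance(value, str):
                # string.Template's $name stands in for Jinja's {{ name }}
                setattr(self, field, Template(value).safe_substitute(context))


op = ToyOperator(chat_id='$failures_chat', message='$dag_id failed :(',
                 conn_id='$not_rendered')
op.render_fields({'failures_chat': '-100123', 'dag_id': 'update_reports',
                  'not_rendered': 'x'})
```

This is also why `tg_bot_conn_id` in the real operator cannot contain macros: it is not listed in `template_fields`.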

Let's see what we have in `commons/hooks.py`. The first part of the file, with the hook itself:

from typing import Union

from airflow.hooks.base_hook import BaseHook
from requests_toolbelt.sessions import BaseUrlSession


class TelegramBotHook(BaseHook):
    """Telegram Bot API hook

    Note: add a connection with empty Conn Type and don't forget
    to fill Extra:

        {"bot_token": "YOuRAwEsomeBOtToKen"}
    """
    def __init__(self,
                 tg_bot_conn_id='tg_bot_default'):
        super().__init__(tg_bot_conn_id)

        self.tg_bot_conn_id = tg_bot_conn_id
        self.tg_bot_token = None
        self.client = None
        self.get_conn()

    def get_conn(self):
        extra = self.get_connection(self.tg_bot_conn_id).extra_dejson
        self.tg_bot_token = extra['bot_token']
        self.client = TelegramBot(self.tg_bot_token)
        return self.client

I don't even know what to explain here; I'll just note the important points:

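- we inherit and think about the arguments: in most cases there will be just one, `conn_id`;
- we override the standard methods: I limited myself to `get_conn()`, in which I fetch the connection parameters by name and simply take the `extra` section (a JSON field), where I (following my own instructions!) put the Telegram bot token: `{"bot_token": "YOuRAwEsomeBOtToKen"}`;
- we create an instance of our `TelegramBot`, handing it the token.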

That's all. You can get a client from the hook with `TelegramBotHook().client` or `TelegramBotHook().get_conn()`.
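`get_conn()` above fails with a bare `KeyError` if the token is missing from Extra. A hypothetical stricter helper, shown with plain `json` so it runs without Airflow (the real code relies on `Connection.extra_dejson` for the parsing); the function name is made up:

```python
import json


def bot_token_from_extra(extra: str) -> str:
    """Read bot_token from a connection's Extra JSON with clear errors."""
    try:
        data = json.loads(extra or '{}')
    except json.JSONDecodeError as exc:
        raise ValueError(f'Extra is not valid JSON: {exc}') from exc
    token = data.get('bot_token')
    if not token:
        raise ValueError('Extra must contain a non-empty "bot_token"')
    return token
```

A clear message in the task log saves a trip to the source code when someone forgets to fill in Extra.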

And in the second part of the file I write a micro-wrapper for the Telegram REST API, so as not to drag in a whole library for the sake of one method, `sendMessage`.

class TelegramBot:
    """Telegram Bot API wrapper

    Examples:
        >>> TelegramBot('YOuRAwEsomeBOtToKen', '@myprettydebugchat').send_message('Hi, darling')
        >>> TelegramBot('YOuRAwEsomeBOtToKen').send_message('Hi, darling', chat_id=-1762374628374)
    """
    API_ENDPOINT = 'https://api.telegram.org/bot{}/'

    def __init__(self, tg_bot_token: str, chat_id: Union[int, str] = None):
        self._base_url = TelegramBot.API_ENDPOINT.format(tg_bot_token)
        self.session = BaseUrlSession(self._base_url)
        self.chat_id = chat_id

    def send_message(self, message: str, chat_id: Union[int, str] = None):
        method = 'sendMessage'

        payload = {'chat_id': chat_id or self.chat_id,
                   'text': message,
                   'parse_mode': 'MarkdownV2'}

        # BaseUrlSession resolves 'sendMessage' against the bot's base URL
        response = self.session.post(method, data=payload).json()
        if not response.get('ok'):
            raise TelegramBotException(response)


class TelegramBotException(Exception):
    def __init__(self, *args, **kwargs):
        super().__init__((args, kwargs))

The right thing to do is to put all of it together (`TelegramBotSendMessage`, `TelegramBotHook`, `TelegramBot`) into a plugin, publish it in a public repository and give it to Open Source.
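One caveat with `parse_mode='MarkdownV2'`: Telegram requires characters such as `_`, `(`, `)`, `.` and `!` to be escaped with a backslash outside formatting entities, otherwise the API rejects the message, and a DAG id like `update_reports` already contains one of them. A minimal escaping helper, hypothetical and not part of the code above:

```python
import re

# Characters Telegram's MarkdownV2 expects to be escaped outside entities
MARKDOWN_V2_SPECIAL = '_*[]()~`>#+-=|{}.!'
_SPECIAL_RE = re.compile('([' + re.escape(MARKDOWN_V2_SPECIAL) + '])')


def escape_markdown_v2(text: str) -> str:
    """Prefix every MarkdownV2 special character with a backslash."""
    return _SPECIAL_RE.sub(r'\\\1', text)


escaped = escape_markdown_v2('update_reports failed :(')
```

Escaping the rendered text just before `send_message()` keeps templates readable; the alternative is to drop `parse_mode` altogether and send plain text.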

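While we were busy with all this, our report update managed to fail successfully and sent me an error message in the channel. Let's check whether something is wrong...

Something broke in our DAG! Isn't that what we were waiting for? Exactly!

So, are we pouring?

Do you feel like I've missed something? I seem to have promised to transfer data from SQL Server to Vertica, and then I went off on a tangent, the scoundrel!

This outrage was intentional: I simply had to decipher some terminology for you. Now we can go further.

Our plan was this:

- Make a DAG
- Generate tasks
- See how beautiful everything is
- Assign session numbers to the loads
- Get the data from SQL Server
- Put the data into Vertica
- Collect statistics

So, to get all this up and running, I made a small addition to our `docker-compose.yml`: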

docker-compose.db.yml

version: '3.4'

x-mssql-base: &mssql-base
  image: mcr.microsoft.com/mssql/server:2017-CU21-ubuntu-16.04
  restart: always
  environment:
    ACCEPT_EULA: Y
    MSSQL_PID: Express
    SA_PASSWORD: SayThanksToSatiaAt2020
    MSSQL_MEMORY_LIMIT_MB: 1024

services:
  dwh:
    image: jbfavre/vertica:9.2.0-7_ubuntu-16.04

  mssql_0:
    <<: *mssql-base

  mssql_1:
    <<: *mssql-base

  mssql_2:
    <<: *mssql-base

  mssql_init:
    image: mio101/py3-sql-db-client-base
    command: python3 ./mssql_init.py
    depends_on:
      - mssql_0
      - mssql_1
      - mssql_2
    environment:
      SA_PASSWORD: SayThanksToSatiaAt2020
    volumes:
      - ./mssql_init.py:/mssql_init.py
      - ./dags/commons/datasources.py:/commons/datasources.py

There we bring up:

- Vertica as the host `dwh`, with mostly default settings,
- three instances of SQL Server,
- and we fill the databases on the latter with some data (under no circumstances look into `mssql_init.py`!)

We launch all this goodness with a slightly more complicated command than last time:

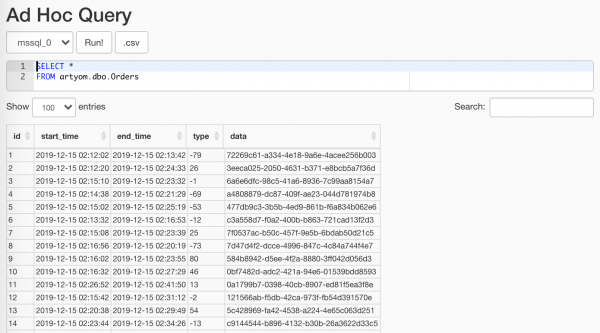

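$ docker-compose -f docker-compose.yml -f docker-compose.db.yml up --scale worker=3

You can see what our miracle randomizer generated with the `Data Profiling/Ad Hoc Query` item:

The main thing is not to show it to the analysts

I won't go into detail about ETL sessions, everything is trivial there: we create a database with a table in it, wrap everything in a context manager, and now do this: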

with Session(connection, task_name) as session:
    print('Load', session.id, 'started')

    # Load workflow
    ...

    session.successful = True
    session.loaded_rows = 15

session.py

from sys import stderr


class Session:
    """ETL workflow session

    Example:
        with Session(connection, task_name) as session:
            print(session.id)
            session.successful = True
            session.loaded_rows = 15
            session.comment = 'Well done'
    """

    def __init__(self, connection, task_name):
        self.connection = connection
        self.connection.autocommit = True

        self._task_name = task_name
        self._id = None

        self.loaded_rows = None
        self.successful = None
        self.comment = None

    def __enter__(self):
        return self.open()

    def __exit__(self, exc_type, exc_val, exc_tb):
        # An exception inside the with-block marks the session as failed;
        # returning None lets the exception propagate to Airflow
        if exc_type is not None:
            self.successful = False
            self.comment = f'{exc_type}: {exc_val}\n{exc_tb}'
            print(exc_type, exc_val, exc_tb, file=stderr)
        self.close()

    def __repr__(self):
        return (f'<{self.__class__.__name__} '
                f'id={self.id} '
                f'task_name="{self.task_name}">')

    @property
    def task_name(self):
        return self._task_name

    @property
    def id(self):
        return self._id

    def _execute(self, query, *args, fetch=True):
        with self.connection.cursor() as cursor:
            cursor.execute(query, args)
            # DDL such as CREATE TABLE returns no row to fetch
            return cursor.fetchone()[0] if fetch else None

    def _create(self):
        query = """
            CREATE TABLE IF NOT EXISTS sessions (
                id SERIAL NOT NULL PRIMARY KEY,
                task_name VARCHAR(200) NOT NULL,

                started TIMESTAMPTZ NOT NULL DEFAULT current_timestamp,
                finished TIMESTAMPTZ DEFAULT current_timestamp,
                successful BOOL,

                loaded_rows INT,
                comment VARCHAR(500)
            );
            """
        self._execute(query, fetch=False)

    def open(self):
        # Make sure the bookkeeping table exists before the first insert
        self._create()
        query = """
            INSERT INTO sessions (task_name, finished)
            VALUES (%s, NULL)
            RETURNING id;
            """
        self._id = self._execute(query, self.task_name)
        print(self, 'opened')
        return self

    def close(self):
        if not self._id:
            raise SessionClosedError('Session is not open')
        query = """
            UPDATE sessions
            SET
                finished = DEFAULT,
                successful = %s,
                loaded_rows = %s,
                comment = %s
            WHERE
                id = %s
            RETURNING id;
            """
        self._execute(query, self.successful, self.loaded_rows,
                      self.comment, self.id)
        print(self, 'closed',
              ', successful: ', self.successful,
              ', Loaded: ', self.loaded_rows,
              ', comment:', self.comment)


class SessionError(Exception):
    pass


class SessionClosedError(SessionError):
    pass

Time to collect our data from all those tables. Let's do it with some very unpretentious lines:

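import pandas as pd
from airflow.hooks.mssql_hook import MsSqlHook

source_conn = MsSqlHook(mssql_conn_id=src_conn_id, schema=src_schema).get_conn()

query = f"""
    SELECT
        id, start_time, end_time, type, data
    FROM dbo.Orders
    WHERE
        CONVERT(DATE, start_time) = '{dt}'
    """

df = pd.read_sql_query(query, source_conn)

- with the hook, we get a `pymssql` connection from Airflow;
- we substitute a date restriction into the query; the template engine passes it into the function;
- we feed our query to `pandas`, which hands us a `DataFrame`; it will come in handy later.

I'm substituting `{dt}` instead of a query parameter `%s` not because I'm an evil Pinocchio, but because `pandas` can't cope with `pymssql`: it passes `params` as a `List`, while `pymssql` really wants a `tuple`.

Also note that the `pymssql` developer decided not to support it anymore, and it's time to move to `pyodbc`.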
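Since `{dt}` is interpolated straight into the SQL text, it's worth making sure it can only ever be a date. A small hypothetical guard (the helper name is made up; `date.fromisoformat` needs Python 3.7+, which matches the `python3.7` image used above):

```python
from datetime import date


def orders_query(dt: str) -> str:
    """Build the per-day Orders query from a '{{ ds }}' value (YYYY-MM-DD).

    Parsing first guarantees that only a well-formed date, never arbitrary
    text, is interpolated into the SQL string."""
    day = date.fromisoformat(dt)  # raises ValueError on anything else
    return ("SELECT id, start_time, end_time, type, data "
            "FROM dbo.Orders "
            f"WHERE CONVERT(DATE, start_time) = '{day.isoformat()}'")
```

The string still gets formatted by hand, but the only thing that can reach it is the output of `date.isoformat()`.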

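Let's see what Airflow stuffed into the arguments of our function:

If there is no data, there's no point in continuing. But it would also be strange to consider the load successful. And yet it isn't an error either. Argh, what to do?! Here's what:

from airflow.exceptions import AirflowSkipException

if df.empty:
    raise AirflowSkipException('No rows to load')

`AirflowSkipException` tells Airflow that there is no error, but that we are skipping the task. The interface shows neither a green nor a red square, but a pink one.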

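Let's add a few columns to our data:

from pandas.util import hash_pandas_object

df['etl_source'] = src_schema
df['etl_id'] = session.id
# index=False: hash only the values, not the row positions in this batch
df['hash_id'] = hash_pandas_object(df[['etl_source', 'id']], index=False)

Namely:

- the database we took the orders from,
- the ID of our loading session (it differs for every task),
- a hash of the source and the order ID, so that in the final database (where everything is poured into one table) we have a unique order ID.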
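Two things are worth knowing about `hash_pandas_object`: it mixes in the index unless `index=False` is passed, and it returns unsigned 64-bit values, which may not fit a signed `INT` column in the target. If you'd rather not depend on pandas internals for a key that lives in the DWH, a deterministic alternative can be built from the standard library. This is a swapped-in technique (BLAKE2b truncated to 8 bytes, read as a signed integer), not what the article uses, and the function name is hypothetical:

```python
import hashlib


def stable_hash_id(etl_source: str, order_id: int) -> int:
    """Deterministic signed 64-bit key for (source database, order id)."""
    key = f'{etl_source}|{order_id}'.encode('utf-8')
    digest = hashlib.blake2b(key, digest_size=8).digest()
    # Read the 8 bytes as a signed integer so the value fits a signed
    # 64-bit column instead of spilling past 2**63 - 1
    return int.from_bytes(digest, 'big', signed=True)


a = stable_hash_id('project_00', 1)
b = stable_hash_id('project_01', 1)
```

The same order ID from two different databases gets two different keys, and re-running a load produces exactly the same keys.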

The penultimate step remains: pour everything into Vertica. And, oddly enough, one of the most spectacular and efficient ways to do that is via CSV!

import csv
from io import StringIO

from airflow.contrib.hooks.vertica_hook import VerticaHook

# Export data to CSV buffer
buffer = StringIO()
df.to_csv(buffer,
          index=False, sep='|', na_rep='NUL', quoting=csv.QUOTE_MINIMAL,
          header=False, float_format='%.8f', doublequote=False, escapechar='\\')
buffer.seek(0)

# Push CSV
target_conn = VerticaHook(vertica_conn_id=target_conn_id).get_conn()

# Column list rendered as "id, start_time, ...", not as a Python list repr
copy_stmt = f"""
    COPY {target_table}({', '.join(df.columns)})
    FROM STDIN
    DELIMITER '|'
    ENCLOSED '"'
    ABORT ON ERROR
    NULL 'NUL'
    """

cursor = target_conn.cursor()
cursor.copy(copy_stmt, buffer)

- we create a dedicated receiver, a `StringIO`;
- `pandas` kindly puts our `DataFrame` into it as CSV lines;
- we open a connection to our beloved Vertica with a hook;
- and now, with `copy()`, we send our data straight into Vertica!
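For readers who want to see what that buffer actually contains, here is the same idea without pandas. A stdlib sketch; the function name is hypothetical, and the dialect mirrors the `to_csv` arguments and the `DELIMITER '|'`, `ENCLOSED '"'` and `NULL 'NUL'` clauses of the `COPY` statement above:

```python
import csv
from io import StringIO


def rows_to_copy_buffer(rows, null_marker='NUL'):
    """Serialise rows as '|'-delimited CSV suitable for COPY ... FROM STDIN,
    writing None as null_marker (the same role as na_rep='NUL' above)."""
    buffer = StringIO()
    writer = csv.writer(buffer, delimiter='|', quotechar='"',
                        quoting=csv.QUOTE_MINIMAL, doublequote=False,
                        escapechar='\\', lineterminator='\n')
    for row in rows:
        writer.writerow([null_marker if value is None else value
                         for value in row])
    buffer.seek(0)  # rewind so the consumer reads from the first line
    return buffer


buf = rows_to_copy_buffer([(1, 'a|b', None), (2, 'plain', 3.5)])
```

A value that contains the delimiter gets enclosed in quotes, which is exactly what `ENCLOSED '"'` tells Vertica to expect.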

We take the number of loaded rows from the driver and tell the session manager that everything is OK:

session.loaded_rows = cursor.rowcount
session.successful = True

That's all.
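The session bookkeeping can be tried out without PostgreSQL. A self-contained sketch of the same pattern on in-memory SQLite: the class is hypothetical, a simplified cousin of `Session` from session.py that uses `lastrowid` instead of `RETURNING`. The key design point is that `__exit__` records the failure but returns `False`, so the exception still reaches Airflow and the task turns red:

```python
import sqlite3


class SqliteSession:
    """Simplified, hypothetical variant of Session on top of sqlite3."""

    def __init__(self, connection, task_name):
        self.connection = connection
        self.task_name = task_name
        self.id = None
        self.successful = None
        self.loaded_rows = None
        self.connection.execute(
            'CREATE TABLE IF NOT EXISTS sessions ('
            ' id INTEGER PRIMARY KEY AUTOINCREMENT,'
            ' task_name TEXT NOT NULL,'
            ' successful INTEGER,'
            ' loaded_rows INTEGER,'
            ' comment TEXT)')

    def __enter__(self):
        cur = self.connection.execute(
            'INSERT INTO sessions (task_name) VALUES (?)', (self.task_name,))
        self.id = cur.lastrowid
        return self

    def __exit__(self, exc_type, exc_val, exc_tb):
        comment = None
        if exc_type is not None:
            self.successful = False
            comment = f'{exc_type.__name__}: {exc_val}'
        self.connection.execute(
            'UPDATE sessions SET successful = ?, loaded_rows = ?, comment = ? '
            'WHERE id = ?',
            (self.successful, self.loaded_rows, comment, self.id))
        self.connection.commit()
        return False  # never swallow the exception


conn = sqlite3.connect(':memory:')
with SqliteSession(conn, 'orders.project_00') as s:
    s.loaded_rows = 15
    s.successful = True

try:
    with SqliteSession(conn, 'orders.project_01') as s:
        raise RuntimeError('source unavailable')
except RuntimeError:
    pass

rows = conn.execute('SELECT task_name, successful, loaded_rows '
                    'FROM sessions ORDER BY id').fetchall()
```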

On production, we create the target table by hand. Here I allowed myself a little automation:

from airflow.contrib.operators.vertica_operator import VerticaOperator

create_schema_query = f'CREATE SCHEMA IF NOT EXISTS {target_schema};'
create_table_query = f"""
    CREATE TABLE IF NOT EXISTS {target_schema}.{target_table} (
         id INT,
         start_time TIMESTAMP,
         end_time TIMESTAMP,
         type INT,
         data VARCHAR(32),
         etl_source VARCHAR(200),
         etl_id INT,
         hash_id INT PRIMARY KEY
     );"""

create_table = VerticaOperator(
    task_id='create_target',
    sql=[create_schema_query,
         create_table_query],
    vertica_conn_id=target_conn_id,
    task_concurrency=1,
    dag=dag)

I use `VerticaOperator()` to create the database schema and the table (if they don't exist yet, of course). The main thing is to wire the dependencies correctly:

for conn_id, schema in sql_server_ds:
    load = PythonOperator(
        task_id=schema,
        python_callable=workflow,
        op_kwargs={
            'src_conn_id': conn_id,
            'src_schema': schema,
            'dt': '{{ ds }}',
            'target_conn_id': target_conn_id,
            'target_table': f'{target_schema}.{target_table}'},
        dag=dag)

    # Every load waits until the target table exists
    create_table >> load

Summary

"Well," said the little mouse, "aren't you convinced now
that I'm the most terrible animal in the forest?"

Julia Donaldson, "The Gruffalo"

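I think that if my colleagues and I held a competition over who can create and launch an ETL process from scratch the fastest, with them on SSIS and a mouse and me on Airflow... and then we also compared how easy it is to maintain... wow, I think you'll agree that I'd beat them on all fronts!

A little more seriously: by describing processes as program code, Apache Airflow made my work much more comfortable and enjoyable.

Its unlimited extensibility, both in terms of plugins and scalability, lets you use Airflow in almost any area: the full cycle of collecting, preparing and processing data, or even launching rockets (to Mars, of course).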

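Final part: reference and information

The rakes we have collected for you

- `start_date`. Yes, this is already a local meme. Everyone trips over the DAG's main argument, `start_date`. In short: if you set `start_date` to the current date and `schedule_interval` to one day, the DAG will start tomorrow at the earliest. Set something like `start_date = datetime(2020, 7, 7, 0, 1, 2)` (a fixed moment, not "now") and there are no more problems.

  There's another runtime error related to it: `Task is missing the start_date parameter`, which most often means you forgot to bind the operator to the DAG.
- Everything on one machine. Yes: the databases (Airflow's own and ours), the web server, the scheduler and the workers. And it even worked. But over time the service tables grew, and when PostgreSQL started answering index lookups in 5 s instead of 20 ms, we picked it up and moved it away.
- LocalExecutor. Yes, we're still sitting on it, and we've already come to the edge of the abyss. LocalExecutor has been enough so far, but now it's time to expand with more workers, and we'll have to work hard to move to CeleryExecutor. And given that you can run it on a single machine, nothing stops you from using Celery even on a server that "of course, will never go into production, honestly!"
- Not using the built-in tools:
  - Connections to store service credentials,
  - SLA Misses to react to tasks that didn't finish on time,
  - XCom for exchanging metadata (I said metadata!) between DAG tasks.
- Email abuse. Well, what can I say? Alerts were set up for every retry of a failed task. Now my work Gmail holds more than 90 emails from Airflow, and the webmail interface refuses to select and delete more than 100 at a time.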
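The `start_date` rake comes from how Airflow 1.x schedules runs: the run for an interval is triggered only once that interval has ended, so the first run (with `execution_date == start_date`) fires one `schedule_interval` later. A pure-Python sketch of that arithmetic; the helper is hypothetical and ignores cron expressions, catchup and time zones:

```python
from datetime import datetime, timedelta


def first_run(start_date: datetime, interval: timedelta):
    """Return (execution_date, moment the run is triggered) for the first
    interval of a DAG, following Airflow 1.x end-of-interval scheduling."""
    execution_date = start_date
    triggered_at = start_date + interval
    return execution_date, triggered_at


# A fixed start_date, as recommended above
execution_date, triggered_at = first_run(datetime(2020, 7, 7, 0, 1, 2),
                                         timedelta(days=1))

# With start_date=datetime.now(), start_date is recomputed on every DAG
# parse, so "start_date + one day" keeps sliding into the future.
```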

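More pitfalls:

More automation tools

So that we work even more with our heads and not with our hands, Airflow has prepared the following:

- REST API: it still has the Experimental status, which doesn't stop it from working. With it, you can not only get information about DAGs and tasks, but also stop/start a DAG and create a DAG Run or a pool.
- CLI: many tools are available from the command line that are not just inconvenient to use from the WebUI, but are missing there altogether. For example:
  - `backfill` is needed to re-run task instances. Say the analysts come and say: "Comrade, you've got nonsense in the data from January 1 to 13! Fix it, fix it, fix it, fix it!" And you, like a pro:

        airflow backfill -s '2020-01-01' -e '2020-01-13' orders

  - base services: `initdb`, `resetdb`, `upgradedb`, `checkdb`;
  - `run`, which lets you run a single task instance and even ignore all its dependencies. Moreover, you can run it via `LocalExecutor` even if you have a Celery cluster;
  - `test` does pretty much the same, except it also writes nothing to the database;
  - `connections` allows mass creation of connections from the shell.
- Python API: a rather hardcore way of interacting, meant for plugins rather than for poking at with your little hands. But who's going to stop us from going into `/home/airflow/dags`, running `ipython` and starting to mess around? For example, you can export all connections with the following code:

from airflow import settings
from airflow.models import Connection

fields = 'conn_id conn_type host port schema login password extra'.split()

session = settings.Session()
for conn in session.query(Connection).order_by(Connection.conn_id):
    d = {field: getattr(conn, field) for field in fields}
    print(conn.conn_id, '=', d)

- Connecting to the Airflow metadatabase. I don't recommend writing to it, but getting task states for various specific metrics can be much faster and easier than through any of the APIs.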

Let's say not all of our tasks are idempotent; they can fail now and then, and that's normal. But several failures in a row are already suspicious, and it would be good to check them.

Beware, SQL!

WITH last_executions AS (
    SELECT
        task_id,
        dag_id,
        execution_date,
        state,
        row_number()
        OVER (
            PARTITION BY task_id, dag_id
            ORDER BY execution_date DESC) AS rn
    FROM public.task_instance
    WHERE
        execution_date > now() - INTERVAL '2' DAY
),
failed AS (
    SELECT
        task_id,
        dag_id,
        execution_date,
        state,
        CASE WHEN rn = row_number() OVER (
            PARTITION BY task_id, dag_id
            ORDER BY execution_date DESC)
            THEN TRUE END AS last_fail_seq
    FROM last_executions
    WHERE
        state IN ('failed', 'up_for_retry')
)
SELECT
    task_id,
    dag_id,
    count(last_fail_seq) AS unsuccessful,
    count(CASE WHEN last_fail_seq
        AND state = 'failed' THEN 1 END) AS failed,
    count(CASE WHEN last_fail_seq
        AND state = 'up_for_retry' THEN 1 END) AS up_for_retry
FROM failed
GROUP BY
    task_id,
    dag_id
HAVING
    count(last_fail_seq) > 0
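The logic of that query, restated in plain Python for readability: for each `(dag_id, task_id)`, take its recent states newest first and count how many at the head of the list are `failed` or `up_for_retry`. A sketch with made-up history, assuming the states are already sorted by `execution_date DESC` (the `row_number()` ordering in the query); the helper name is hypothetical:

```python
from itertools import takewhile
from typing import Dict, List, Tuple


def trailing_failures(states_newest_first: List[str]) -> int:
    """Count how many of the most recent runs failed in a row."""
    bad = {'failed', 'up_for_retry'}
    return sum(1 for _ in takewhile(lambda s: s in bad, states_newest_first))


# Made-up history: (dag_id, task_id) -> states, newest first
history: Dict[Tuple[str, str], List[str]] = {
    ('orders', 'project_00'): ['failed', 'up_for_retry', 'success', 'failed'],
    ('orders', 'project_01'): ['success', 'failed'],
}

streaks = {key: trailing_failures(states) for key, states in history.items()}
suspicious = {key: n for key, n in streaks.items() if n > 0}
```

An old failure followed by a success (as for `project_01`) is not reported, which is exactly what the `last_fail_seq` trick achieves in SQL.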

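References

And, of course, the first links Google returns: the contents of the Airflow folder in my bookmarks.

- The official documentation, of course: that's where you have to start. There is a manual, but who reads manuals?
- Well, at least read the recommendations from the creators.
- The very beginning: the user interface in pictures.
- The basic concepts are well explained there, in case (suddenly!) you didn't understand something in my descriptions.
- A short guide to setting up an Airflow cluster.
- An almost equally interesting article, except perhaps with more formalism and fewer examples.
- About working with Celery.
- About task idempotency, loading by ID rather than by date, transformations, file structure and other interesting things.
- Task dependencies and trigger rules, which I touched on in passing.
- How to solve some "works as intended" problems of the scheduler, load missing data and prioritize tasks.
- Useful SQL queries against the Airflow metadata.
- Has a useful section on writing custom sensors.
- An interesting short note on building data science infrastructure on AWS.
- Common mistakes (in case someone still hasn't read the manual).
- You can use Connections, but do smile at how people struggle with storing passwords.
- Also about implicit DAG passing, throwing context into functions, dependencies and skipping task launches.
- About using `default_arguments` and `params` in templates, as well as variables and connections.
- A story about how the scheduler is being prepared for Airflow 2.0.
- A slightly outdated article about deploying a cluster with `docker-compose`.
- Dynamic tasks using templates and context passing.
- Standard and custom notifications via email and Slack.
- Branching of tasks, macros, XCom.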

Links used in the article:

- Placeholders that can be used in templates.
- Common mistakes when writing DAGs.
- `docker-compose` for experiments, debugging and more.
- A Python wrapper for the Telegram REST API.

Source: habr.com