TL;DR: An overview guide to comparing frameworks for running applications in containers. The capabilities of Docker and other similar systems are considered.

A little history of where it all came from

History

The first well-known method of isolating an application is chroot. The system call of the same name changes the root directory, so that the program that called it can access only the files inside that directory. But if a program is given superuser privileges inside, it can potentially "escape" from the chroot and gain access to the main operating system. Also, apart from changing the root directory, other resources (RAM, CPU) and network access are not limited.

The next method is to run a full operating system inside a container, using the mechanisms of the operating system kernel. This method has different names in different operating systems, but the essence is the same: running several independent operating systems, each of which runs on the same kernel as the main operating system. This includes FreeBSD Jails, Solaris Zones, OpenVZ, and LXC for Linux. Isolation covers not only disk space but other resources as well; in particular, each container can have limits on CPU time, RAM, and network bandwidth. Compared with chroot, escaping from a container is harder, since the superuser in the container has access only to the container's internals. However, because the operating system inside the container has to be kept up to date and because older kernel versions are often in use (relevant for Linux and, to a lesser extent, FreeBSD), there is a non-zero probability of "breaking through" the kernel isolation system and gaining access to the main operating system.

Instead of launching a full-fledged operating system in a container (with an init system, a package manager, and so on), you can launch applications right away; the main thing is to give the applications that opportunity (the presence of the necessary libraries and other files). This idea served as the basis for containerized application virtualization, whose most prominent and best-known representative is Docker. Compared with earlier systems, more flexible isolation mechanisms, together with built-in support for virtual networks between containers and tracking of application state inside a container, made it possible to build a single coherent environment for running containers out of a large number of physical servers, without the need for manual resource management.

Docker

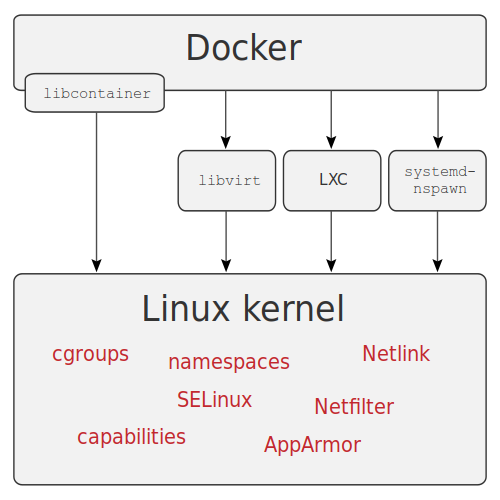

Docker is the best-known application containerization software. It is written in Go and uses the native capabilities of the Linux kernel (cgroups, namespaces, capabilities, and so on), as well as Aufs and similar file systems to save disk space.

Source: wikimedia

Architecture

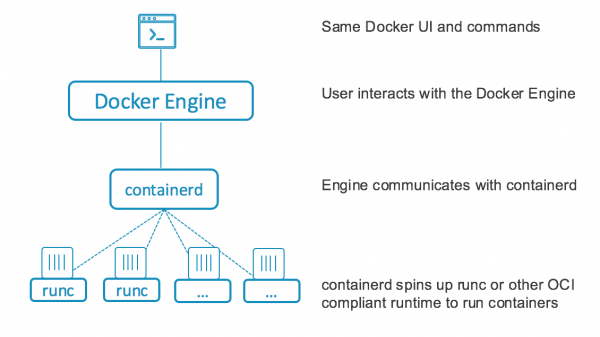

Before version 1.11, Docker worked as a single service that handled all container operations: downloading container images, starting containers, and processing API requests. Starting with version 1.11, Docker was split into several parts that interact with each other: containerd, which handles the entire container life cycle (allocating disk space, downloading images, networking, launching, installing, and monitoring container state), and runC, the container runtime based on cgroups and other Linux kernel features. The docker service itself remains, but now it only processes API requests, which are forwarded to containerd.

Installation and configuration

My favorite way to install Docker is docker-machine, which, besides directly installing and configuring Docker on remote servers (including various clouds), makes it possible to work with the file systems of remote servers and to run various commands on them.

However, the project has hardly been developed since 2018, so we will install Docker in the way that is usual for most Linux distributions: by adding a repository and installing the necessary packages.

This method is also used for automated installation, for example with Ansible or other similar systems, but I will not cover that in this article.

Installation will be done on CentOS 7, and I will use a virtual machine as the server. To install, it is enough to run the commands below:

# yum install -y yum-utils

# yum-config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo

# yum install docker-ce docker-ce-cli containerd.io

After installation, you need to start the service and enable it at startup:

# systemctl enable docker

# systemctl start docker

# firewall-cmd --zone=public --add-port=2377/tcp --permanent

In addition, you can create a docker group whose users can work with Docker without sudo, set up logging, enable access to the API from outside, and remember to configure the firewall more precisely (everything that is not allowed is forbidden; in the examples above and below I omitted this for simplicity and clarity), but I will not go into more detail here.
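The docker group mentioned above could be set up roughly as follows. This is a minimal sketch: the function name `allow_docker_without_sudo` is an assumption made for illustration, and it relies only on the standard `getent`, `groupadd`, and `usermod` utilities. Bear in mind that members of the docker group effectively get root-equivalent access to the host.

```shell
# Hypothetical helper: lets a user talk to the Docker daemon without sudo.
# Must be run as root; the user has to log in again for the change to apply.
allow_docker_without_sudo() {
    local user="$1"
    if [ -z "$user" ]; then
        echo "usage: allow_docker_without_sudo USER" >&2
        return 1
    fi
    # Create the group only if it does not exist yet (the docker-ce
    # packages normally create it during installation).
    getent group docker >/dev/null || groupadd docker
    # Append the docker group to the user's supplementary groups.
    usermod -aG docker "$user"
}
```

It would be called as `allow_docker_without_sudo alice`, where `alice` is a placeholder user name.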

Other features

Besides the docker-machine mentioned above, there is also Docker Registry, a tool for storing container images, and docker-compose, a tool for automating the deployment of applications in containers, which uses YAML files to build and configure containers and other related things (for example, networks and persistent file systems for storing data).

It can also be used to organize pipelines for CI/CD. Another interesting feature is cluster mode, the so-called swarm mode (before version 1.12 it was known as docker swarm), which lets you assemble a single infrastructure for running containers from several servers. There is support for a virtual network on top of all the servers, a built-in load balancer, and support for secrets for containers.

YAML files from docker-compose can, with minor modifications, be used for such clusters, fully automating the maintenance of small and medium-sized clusters for various purposes. For large clusters Kubernetes is preferable, because the maintenance costs of swarm mode can exceed those of Kubernetes. Besides runC, a different container execution environment can be installed.

Working with Docker

After installation and configuration, we will try to assemble a cluster in which we will deploy GitLab and Docker Registry for a development team. I will use three virtual machines as servers, on which I will additionally deploy the GlusterFS distributed file system; I will use it as storage for Docker volumes, for example to run a fault-tolerant version of Docker Registry. Key components to run: Docker Registry, Postgresql, Redis, and GitLab with support for GitLab Runner on top of swarm. We will run Postgresql with clustering via Stolon, so there is no need to use GlusterFS to store Postgresql data. The rest of the critical data will be stored on GlusterFS.

To deploy GlusterFS on all servers (they are named node1, node2, node3), you need to install the packages, open the firewall, and create the necessary directories:

# yum -y install centos-release-gluster7

# yum -y install glusterfs-server

# systemctl enable glusterd

# systemctl start glusterd

# firewall-cmd --add-service=glusterfs --permanent

# firewall-cmd --reload

# mkdir -p /srv/gluster

# mkdir -p /srv/docker

# echo "$(hostname):/docker /srv/docker glusterfs defaults,_netdev 0 0" >> /etc/fstab

After installation, the GlusterFS configuration must be continued from a single node, for example node1:

# gluster peer probe node2

# gluster peer probe node3

# gluster volume create docker replica 3 node1:/srv/gluster node2:/srv/gluster node3:/srv/gluster force

# gluster volume start docker

Then you need to mount the resulting volume (the command must be run on all servers):

# mount /srv/docker

Swarm mode is configured on one of the servers, which will be the Leader; the rest will have to join the cluster, so the output of the command run on the first server has to be copied and run on the others.

Initial cluster setup; I run the command on node1:

# docker swarm init

Swarm initialized: current node (a5jpfrh5uvo7svzz1ajduokyq) is now a manager.

To add a worker to this swarm, run the following command:

docker swarm join --token SWMTKN-1-0c5mf7mvzc7o7vjk0wngno2dy70xs95tovfxbv4tqt9280toku-863hyosdlzvd76trfptd4xnzd xx.xx.xx.xx:2377

To add a manager to this swarm, run 'docker swarm join-token manager' and follow the instructions.

# docker swarm join-token manager

We copy the output of the second command and run it on node2 and node3:

# docker swarm join --token SWMTKN-x-xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx-xxxxxxxxx xx.xx.xx.xx:2377

This node joined a swarm as a manager.

This completes the preliminary configuration of the servers; let's proceed to configuring the services. The commands are run from node1 unless otherwise specified.
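Before moving on, it may be worth confirming that all three nodes really joined as managers. The sketch below is only one possible check: `ready_managers` is a hypothetical helper name, and it assumes its input comes from `docker node ls` with the `.Hostname`, `.Status`, and `.ManagerStatus` format fields.

```shell
# Reads "HOSTNAME STATUS MANAGERSTATUS" lines on stdin and prints the
# hostnames of nodes that are Ready and act as managers.
# Worker nodes have an empty manager status and are skipped.
ready_managers() {
    awk '$2 == "Ready" && ($3 == "Leader" || $3 == "Reachable") { print $1 }'
}

# Intended use on a manager node:
# docker node ls --format '{{.Hostname}} {{.Status}} {{.ManagerStatus}}' | ready_managers
```

With the cluster described here, the expected result would be the three names node1, node2, and node3, one per line.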

First of all, let's create networks for the containers:

# docker network create --driver=overlay etcd

# docker network create --driver=overlay pgsql

# docker network create --driver=overlay redis

# docker network create --driver=overlay traefik

# docker network create --driver=overlay gitlab

Then we label the servers; this is needed to bind some services to specific servers:

# docker node update --label-add nodename=node1 node1

# docker node update --label-add nodename=node2 node2

# docker node update --label-add nodename=node3 node3

Next, we create directories for storing etcd data, the KV storage that Traefik and Stolon need. As with Postgresql, these containers will be bound to servers, so this command is run on all servers:

# mkdir -p /srv/etcd

Next, create a file to configure etcd and apply it:

00etcd.yml

version: '3.7'

services:
  etcd1:
    image: quay.io/coreos/etcd:latest
    hostname: etcd1
    command:
      - etcd
      - --name=etcd1
      - --data-dir=/data.etcd
      - --advertise-client-urls=http://etcd1:2379
      - --listen-client-urls=http://0.0.0.0:2379
      - --initial-advertise-peer-urls=http://etcd1:2380
      - --listen-peer-urls=http://0.0.0.0:2380
      - --initial-cluster=etcd1=http://etcd1:2380,etcd2=http://etcd2:2380,etcd3=http://etcd3:2380
      - --initial-cluster-state=new
      - --initial-cluster-token=etcd-cluster
    networks:
      - etcd
    volumes:
      - etcd1vol:/data.etcd
    deploy:
      replicas: 1
      placement:
        constraints: [node.labels.nodename == node1]
  etcd2:
    image: quay.io/coreos/etcd:latest
    hostname: etcd2
    command:
      - etcd
      - --name=etcd2
      - --data-dir=/data.etcd
      - --advertise-client-urls=http://etcd2:2379
      - --listen-client-urls=http://0.0.0.0:2379
      - --initial-advertise-peer-urls=http://etcd2:2380
      - --listen-peer-urls=http://0.0.0.0:2380
      - --initial-cluster=etcd1=http://etcd1:2380,etcd2=http://etcd2:2380,etcd3=http://etcd3:2380
      - --initial-cluster-state=new
      - --initial-cluster-token=etcd-cluster
    networks:
      - etcd
    volumes:
      - etcd2vol:/data.etcd
    deploy:
      replicas: 1
      placement:
        constraints: [node.labels.nodename == node2]
  etcd3:
    image: quay.io/coreos/etcd:latest
    hostname: etcd3
    command:
      - etcd
      - --name=etcd3
      - --data-dir=/data.etcd
      - --advertise-client-urls=http://etcd3:2379
      - --listen-client-urls=http://0.0.0.0:2379
      - --initial-advertise-peer-urls=http://etcd3:2380
      - --listen-peer-urls=http://0.0.0.0:2380
      - --initial-cluster=etcd1=http://etcd1:2380,etcd2=http://etcd2:2380,etcd3=http://etcd3:2380
      - --initial-cluster-state=new
      - --initial-cluster-token=etcd-cluster
    networks:
      - etcd
    volumes:
      - etcd3vol:/data.etcd
    deploy:
      replicas: 1
      placement:
        constraints: [node.labels.nodename == node3]

volumes:
  etcd1vol:
    driver: local
    driver_opts:
      type: none
      o: bind
      device: "/srv/etcd"
  etcd2vol:
    driver: local
    driver_opts:
      type: none
      o: bind
      device: "/srv/etcd"
  etcd3vol:
    driver: local
    driver_opts:
      type: none
      o: bind
      device: "/srv/etcd"

networks:
  etcd:
    external: true

# docker stack deploy --compose-file 00etcd.yml etcd

After a while, we check that the etcd cluster has come up:

# docker exec $(docker ps | awk '/etcd/ {print $1}') etcdctl member list

ade526d28b1f92f7: name=etcd1 peerURLs=http://etcd1:2380 clientURLs=http://etcd1:2379 isLeader=false

bd388e7810915853: name=etcd3 peerURLs=http://etcd3:2380 clientURLs=http://etcd3:2379 isLeader=false

d282ac2ce600c1ce: name=etcd2 peerURLs=http://etcd2:2380 clientURLs=http://etcd2:2379 isLeader=true

# docker exec $(docker ps | awk '/etcd/ {print $1}') etcdctl cluster-health

member ade526d28b1f92f7 is healthy: got healthy result from http://etcd1:2379

member bd388e7810915853 is healthy: got healthy result from http://etcd3:2379

member d282ac2ce600c1ce is healthy: got healthy result from http://etcd2:2379

cluster is healthy

We create directories for Postgresql; the command is run on all servers:

# mkdir -p /srv/pgsql

Next, create a file to configure Postgresql:

01pgsql.yml

version: '3.7'

services:
  pgsentinel:
    image: sorintlab/stolon:master-pg10
    command:
      - gosu
      - stolon
      - stolon-sentinel
      - --cluster-name=stolon-cluster
      - --store-backend=etcdv3
      - --store-endpoints=http://etcd1:2379,http://etcd2:2379,http://etcd3:2379
      - --log-level=debug
    networks:
      - etcd
      - pgsql
    deploy:
      replicas: 3
      update_config:
        parallelism: 1
        delay: 30s
        order: stop-first
        failure_action: pause
  pgkeeper1:
    image: sorintlab/stolon:master-pg10
    hostname: pgkeeper1
    command:
      - gosu
      - stolon
      - stolon-keeper
      - --pg-listen-address=pgkeeper1
      - --pg-repl-username=replica
      - --uid=pgkeeper1
      - --pg-su-username=postgres
      - --pg-su-passwordfile=/run/secrets/pgsql
      - --pg-repl-passwordfile=/run/secrets/pgsql_repl
      - --data-dir=/var/lib/postgresql/data
      - --cluster-name=stolon-cluster
      - --store-backend=etcdv3
      - --store-endpoints=http://etcd1:2379,http://etcd2:2379,http://etcd3:2379
    networks:
      - etcd
      - pgsql
    environment:
      - PGDATA=/var/lib/postgresql/data
    volumes:
      - pgkeeper1:/var/lib/postgresql/data
    secrets:
      - pgsql
      - pgsql_repl
    deploy:
      replicas: 1
      placement:
        constraints: [node.labels.nodename == node1]
  pgkeeper2:
    image: sorintlab/stolon:master-pg10
    hostname: pgkeeper2
    command:
      - gosu
      - stolon
      - stolon-keeper
      - --pg-listen-address=pgkeeper2
      - --pg-repl-username=replica
      - --uid=pgkeeper2
      - --pg-su-username=postgres
      - --pg-su-passwordfile=/run/secrets/pgsql
      - --pg-repl-passwordfile=/run/secrets/pgsql_repl
      - --data-dir=/var/lib/postgresql/data
      - --cluster-name=stolon-cluster
      - --store-backend=etcdv3
      - --store-endpoints=http://etcd1:2379,http://etcd2:2379,http://etcd3:2379
    networks:
      - etcd
      - pgsql
    environment:
      - PGDATA=/var/lib/postgresql/data
    volumes:
      - pgkeeper2:/var/lib/postgresql/data
    secrets:
      - pgsql
      - pgsql_repl
    deploy:
      replicas: 1
      placement:
        constraints: [node.labels.nodename == node2]
  pgkeeper3:
    image: sorintlab/stolon:master-pg10
    hostname: pgkeeper3
    command:
      - gosu
      - stolon
      - stolon-keeper
      - --pg-listen-address=pgkeeper3
      - --pg-repl-username=replica
      - --uid=pgkeeper3
      - --pg-su-username=postgres
      - --pg-su-passwordfile=/run/secrets/pgsql
      - --pg-repl-passwordfile=/run/secrets/pgsql_repl
      - --data-dir=/var/lib/postgresql/data
      - --cluster-name=stolon-cluster
      - --store-backend=etcdv3
      - --store-endpoints=http://etcd1:2379,http://etcd2:2379,http://etcd3:2379
    networks:
      - etcd
      - pgsql
    environment:
      - PGDATA=/var/lib/postgresql/data
    volumes:
      - pgkeeper3:/var/lib/postgresql/data
    secrets:
      - pgsql
      - pgsql_repl
    deploy:
      replicas: 1
      placement:
        constraints: [node.labels.nodename == node3]
  postgresql:
    image: sorintlab/stolon:master-pg10
    command: gosu stolon stolon-proxy --listen-address 0.0.0.0 --cluster-name stolon-cluster --store-backend=etcdv3 --store-endpoints http://etcd1:2379,http://etcd2:2379,http://etcd3:2379
    networks:
      - etcd
      - pgsql
    deploy:
      replicas: 3
      update_config:
        parallelism: 1
        delay: 30s
        order: stop-first
        failure_action: rollback

volumes:
  pgkeeper1:
    driver: local
    driver_opts:
      type: none
      o: bind
      device: "/srv/pgsql"
  pgkeeper2:
    driver: local
    driver_opts:
      type: none
      o: bind
      device: "/srv/pgsql"
  pgkeeper3:
    driver: local
    driver_opts:
      type: none
      o: bind
      device: "/srv/pgsql"

secrets:
  pgsql:
    file: "/srv/docker/postgres"
  pgsql_repl:
    file: "/srv/docker/replica"

networks:
  etcd:
    external: true
  pgsql:
    external: true

We generate the secrets and apply the file:

# </dev/urandom tr -dc 234567890qwertyuopasdfghjkzxcvbnmQWERTYUPASDFGHKLZXCVBNM | head -c $(((RANDOM%3)+15)) > /srv/docker/replica

# </dev/urandom tr -dc 234567890qwertyuopasdfghjkzxcvbnmQWERTYUPASDFGHKLZXCVBNM | head -c $(((RANDOM%3)+15)) > /srv/docker/postgres
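The two secret-generation commands above could be wrapped in a small function. This is only a sketch: the name `gen_secret` and its default length of 16 are assumptions made for illustration; the alphabet is copied from the commands above.

```shell
# Hypothetical helper: prints LENGTH random characters (default 16) drawn
# from the same alphabet as the commands above, without a trailing newline.
gen_secret() {
    local length="${1:-16}"
    local alphabet='234567890qwertyuopasdfghjkzxcvbnmQWERTYUPASDFGHKLZXCVBNM'
    local out=""
    # Collect filtered random bytes in small chunks until there are enough.
    while [ "${#out}" -lt "$length" ]; do
        # LC_ALL=C makes tr treat the random input as plain bytes.
        out="$out$(head -c 64 /dev/urandom | LC_ALL=C tr -dc "$alphabet")"
    done
    # Cut the collected string down to exactly LENGTH characters.
    printf '%s' "$out" | head -c "$length"
}
```

For example, `gen_secret 16 > /srv/docker/postgres` would write a 16-character password to the file used by the `pgsql` secret.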

# docker stack deploy --compose-file 01pgsql.yml pgsql

After a while (check the output of the docker service ls command to see that all services are up), we initialize the Postgresql cluster:

# docker exec $(docker ps | awk '/pgkeeper/ {print $1}') stolonctl --cluster-name=stolon-cluster --store-backend=etcdv3 --store-endpoints=http://etcd1:2379,http://etcd2:2379,http://etcd3:2379 init

We check that the Postgresql cluster is ready:

# docker exec $(docker ps | awk '/pgkeeper/ {print $1}') stolonctl --cluster-name=stolon-cluster --store-backend=etcdv3 --store-endpoints=http://etcd1:2379,http://etcd2:2379,http://etcd3:2379 status

=== Active sentinels ===

ID LEADER

26baa11d false

74e98768 false

a8cb002b true

=== Active proxies ===

ID

4d233826

9f562f3b

b0c79ff1

=== Keepers ===

UID HEALTHY PG LISTENADDRESS PG HEALTHY PG WANTEDGENERATION PG CURRENTGENERATION

pgkeeper1 true pgkeeper1:5432 true 2 2

pgkeeper2 true pgkeeper2:5432 true 2 2

pgkeeper3 true pgkeeper3:5432 true 3 3

=== Cluster Info ===

Master Keeper: pgkeeper3

===== Keepers/DB tree =====

pgkeeper3 (master)

├─pgkeeper2

└─pgkeeper1
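The earlier step of waiting until `docker service ls` shows every service as up can be scripted instead of checked by eye. A minimal sketch, assuming the input is produced with the `.Name` and `.Replicas` format fields; `services_not_ready` is a hypothetical helper name.

```shell
# Reads "NAME REPLICAS" lines (for example "pgsql_pgkeeper1 1/1") on stdin
# and prints the names of services whose running count differs from the
# desired count. Prints nothing when everything is up.
services_not_ready() {
    awk '{ split($2, r, "/"); if (r[1] != r[2]) print $1 }'
}

# Intended use on a manager node:
# docker service ls --format '{{.Name}} {{.Replicas}}' | services_not_ready
```

An empty result means that all replicas are running; any name that is printed still needs time or attention.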

We configure traefik to open access to the containers from outside:

03traefik.yml

version: '3.7'

services:
  traefik:
    image: traefik:latest
    command: >
      --log.level=INFO
      --providers.docker=true
      --entryPoints.web.address=:80
      --providers.providersThrottleDuration=2
      --providers.docker.watch=true
      --providers.docker.swarmMode=true
      --providers.docker.swarmModeRefreshSeconds=15s
      --providers.docker.exposedbydefault=false
      --accessLog.bufferingSize=0
      --api=true
      --api.dashboard=true
      --api.insecure=true
    networks:
      - traefik
    ports:
      - 80:80
    volumes:
      - /var/run/docker.sock:/var/run/docker.sock
    deploy:
      replicas: 3
      placement:
        constraints:
          - node.role == manager
        preferences:
          - spread: node.id
      labels:
        - traefik.enable=true
        - traefik.http.routers.traefik.rule=Host(`traefik.example.com`)
        - traefik.http.services.traefik.loadbalancer.server.port=8080
        - traefik.docker.network=traefik

networks:
  traefik:
    external: true

# docker stack deploy --compose-file 03traefik.yml traefik

We launch the Redis cluster; to do this, we create a storage directory on all nodes:

# mkdir -p /srv/redis

05redis.yml

version: '3.7'

services:
  redis-master:
    image: 'bitnami/redis:latest'
    networks:
      - redis
    ports:
      - '6379:6379'
    environment:
      - REDIS_REPLICATION_MODE=master
      - REDIS_PASSWORD=xxxxxxxxxxx
    deploy:
      mode: global
      restart_policy:
        condition: any
    volumes:
      - 'redis:/opt/bitnami/redis/etc/'
  redis-replica:
    image: 'bitnami/redis:latest'
    networks:
      - redis
    ports:
      - '6379'
    depends_on:
      - redis-master
    environment:
      - REDIS_REPLICATION_MODE=slave
      - REDIS_MASTER_HOST=redis-master
      - REDIS_MASTER_PORT_NUMBER=6379
      - REDIS_MASTER_PASSWORD=xxxxxxxxxxx
      - REDIS_PASSWORD=xxxxxxxxxxx
    deploy:
      mode: replicated
      replicas: 3
      update_config:
        parallelism: 1
        delay: 10s
      restart_policy:
        condition: any
  redis-sentinel:
    image: 'bitnami/redis:latest'
    networks:
      - redis
    ports:
      - '16379'
    depends_on:
      - redis-master
      - redis-replica
    entrypoint: |
      bash -c 'bash -s <<EOF
      "/bin/bash" -c "cat <<EOF > /opt/bitnami/redis/etc/sentinel.conf
      port 16379
      dir /tmp
      sentinel monitor master-node redis-master 6379 2
      sentinel down-after-milliseconds master-node 5000
      sentinel parallel-syncs master-node 1
      sentinel failover-timeout master-node 5000
      sentinel auth-pass master-node xxxxxxxxxxx
      sentinel announce-ip redis-sentinel
      sentinel announce-port 16379
      EOF"
      "/bin/bash" -c "redis-sentinel /opt/bitnami/redis/etc/sentinel.conf"
      EOF'
    deploy:
      mode: global
      restart_policy:
        condition: any

volumes:
  redis:
    driver: local
    driver_opts:
      type: 'none'
      o: 'bind'
      device: "/srv/redis"

networks:
  redis:
    external: true

# docker stack deploy --compose-file 05redis.yml redis

Add Docker Registry:

06registry.yml

version: '3.7'

services:
  registry:
    image: registry:2.6
    networks:
      - traefik
    volumes:
      - registry_data:/var/lib/registry
    deploy:
      replicas: 1
      placement:
        constraints: [node.role == manager]
      restart_policy:
        condition: on-failure
      labels:
        - traefik.enable=true
        - traefik.http.routers.registry.rule=Host(`registry.example.com`)
        - traefik.http.services.registry.loadbalancer.server.port=5000
        - traefik.docker.network=traefik

volumes:
  registry_data:
    driver: local
    driver_opts:
      type: none
      o: bind
      device: "/srv/docker/registry"

networks:
  traefik:
    external: true

# mkdir /srv/docker/registry

# docker stack deploy --compose-file 06registry.yml registry

And finally, GitLab:

08gitlab-runner.yml

version: '3.7'

services:
  gitlab:
    image: gitlab/gitlab-ce:latest
    networks:
      - pgsql
      - redis
      - traefik
      - gitlab
    ports:
      - 22222:22
    environment:
      GITLAB_OMNIBUS_CONFIG: |
        postgresql['enable'] = false
        redis['enable'] = false
        gitlab_rails['registry_enabled'] = false
        gitlab_rails['db_username'] = "gitlab"
        gitlab_rails['db_password'] = "XXXXXXXXXXX"
        gitlab_rails['db_host'] = "postgresql"
        gitlab_rails['db_port'] = "5432"
        gitlab_rails['db_database'] = "gitlab"
        gitlab_rails['db_adapter'] = 'postgresql'
        gitlab_rails['db_encoding'] = 'utf8'
        gitlab_rails['redis_host'] = 'redis-master'
        gitlab_rails['redis_port'] = '6379'
        gitlab_rails['redis_password'] = 'xxxxxxxxxxx'
        gitlab_rails['smtp_enable'] = true
        gitlab_rails['smtp_address'] = "smtp.yandex.ru"
        gitlab_rails['smtp_port'] = 465
        gitlab_rails['smtp_user_name'] = "noreply@example.com"
        gitlab_rails['smtp_password'] = "xxxxxxxxx"
        gitlab_rails['smtp_domain'] = "example.com"
        gitlab_rails['gitlab_email_from'] = 'noreply@example.com'
        gitlab_rails['smtp_authentication'] = "login"
        gitlab_rails['smtp_tls'] = true
        gitlab_rails['smtp_enable_starttls_auto'] = true
        gitlab_rails['smtp_openssl_verify_mode'] = 'peer'
        external_url 'http://gitlab.example.com/'
        gitlab_rails['gitlab_shell_ssh_port'] = 22222
    volumes:
      - gitlab_conf:/etc/gitlab
      - gitlab_logs:/var/log/gitlab
      - gitlab_data:/var/opt/gitlab
    deploy:
      mode: replicated
      replicas: 1
      placement:
        constraints:
          - node.role == manager
      labels:
        - traefik.enable=true
        - traefik.http.routers.gitlab.rule=Host(`gitlab.example.com`)
        - traefik.http.services.gitlab.loadbalancer.server.port=80
        - traefik.docker.network=traefik
  gitlab-runner:
    image: gitlab/gitlab-runner:latest
    networks:
      - gitlab
    volumes:
      - gitlab_runner_conf:/etc/gitlab-runner
      - /var/run/docker.sock:/var/run/docker.sock
    deploy:
      mode: replicated
      replicas: 1
      placement:
        constraints:
          - node.role == manager

volumes:
  gitlab_conf:
    driver: local
    driver_opts:
      type: none
      o: bind
      device: "/srv/docker/gitlab/conf"
  gitlab_logs:
    driver: local
    driver_opts:
      type: none
      o: bind
      device: "/srv/docker/gitlab/logs"
  gitlab_data:
    driver: local
    driver_opts:
      type: none
      o: bind
      device: "/srv/docker/gitlab/data"
  gitlab_runner_conf:
    driver: local
    driver_opts:
      type: none
      o: bind
      device: "/srv/docker/gitlab/runner"

networks:
  pgsql:
    external: true
  redis:
    external: true
  traefik:
    external: true
  gitlab:
    external: true

# mkdir -p /srv/docker/gitlab/conf

# mkdir -p /srv/docker/gitlab/logs

# mkdir -p /srv/docker/gitlab/data

# mkdir -p /srv/docker/gitlab/runner

# docker stack deploy --compose-file 08gitlab-runner.yml gitlab

The final state of the cluster and the services:

# docker service ls

ID NAME MODE REPLICAS IMAGE PORTS

lef9n3m92buq etcd_etcd1 replicated 1/1 quay.io/coreos/etcd:latest

ij6uyyo792x5 etcd_etcd2 replicated 1/1 quay.io/coreos/etcd:latest

fqttqpjgp6pp etcd_etcd3 replicated 1/1 quay.io/coreos/etcd:latest

hq5iyga28w33 gitlab_gitlab replicated 1/1 gitlab/gitlab-ce:latest *:22222->22/tcp

dt7s6vs0q4qc gitlab_gitlab-runner replicated 1/1 gitlab/gitlab-runner:latest

k7uoezno0h9n pgsql_pgkeeper1 replicated 1/1 sorintlab/stolon:master-pg10

cnrwul4r4nse pgsql_pgkeeper2 replicated 1/1 sorintlab/stolon:master-pg10

frflfnpty7tr pgsql_pgkeeper3 replicated 1/1 sorintlab/stolon:master-pg10

x7pqqchi52kq pgsql_pgsentinel replicated 3/3 sorintlab/stolon:master-pg10

mwu2wl8fti4r pgsql_postgresql replicated 3/3 sorintlab/stolon:master-pg10

9hkbe2vksbzb redis_redis-master global 3/3 bitnami/redis:latest *:6379->6379/tcp

l88zn8cla7dc redis_redis-replica replicated 3/3 bitnami/redis:latest *:30003->6379/tcp

1utp309xfmsy redis_redis-sentinel global 3/3 bitnami/redis:latest *:30002->16379/tcp

oteb824ylhyp registry_registry replicated 1/1 registry:2.6

qovrah8nzzu8 traefik_traefik replicated 3/3 traefik:latest *:80->80/tcp, *:443->443/tcp

What else can be improved? Be sure to configure Traefik so that the containers work over https, and add TLS encryption for Postgresql and Redis. But in general, this can already be handed to developers as a PoC. Now let's look at the alternatives to Docker.

Podman

Another fairly well-known engine for running containers grouped into pods (pods are groups of containers deployed together). Unlike Docker, it does not require any service to run containers; all the work is done through the libpod library. It is also written in Go and needs an OCI-compatible runtime, such as runC, to run containers.

Working with Podman is in general similar to working with Docker, to the point that you can do this (as claimed by many who have tried it, including the author of this article):

$ alias docker=podman

and keep working. In general, the situation with Podman is quite interesting: early versions of Kubernetes worked with Docker, but around 2015, after the standardization of the container world (OCI, the Open Container Initiative) and the split of Docker into containerd and runC, an alternative to Docker for running in Kubernetes was developed: CRI-O. In this respect Podman is an alternative to Docker built on the principles of Kubernetes, including the grouping of containers, but the main goal of the project is to run Docker-style containers without additional services. For obvious reasons there is no swarm mode, since the developers clearly say that if you need a cluster, take Kubernetes.

Installation

To install on CentOS 7, just enable the Extras repository and then install everything with this command:

# yum -y install podman

Other features

Podman can generate units for systemd, which solves the problem of starting containers after a server reboot. In addition, systemd is declared to work correctly as pid 1 in a container. There is a separate tool for building containers, and there are third-party tools, analogues of docker-compose, which also generate configuration files compatible with Kubernetes, so the transition from Podman to Kubernetes is made as easy as possible.

Working with Podman

Since there is no swarm mode (you are expected to switch to Kubernetes if a cluster is needed), we will assemble everything in separate containers.

Install podman-compose:

# yum -y install python3-pip

# pip3 install podman-compose

The resulting configuration file for podman is slightly different; for example, we had to move the separate volumes section directly into the section with the services.

gitlab-podman.yml

version: '3.7'

services:
  gitlab:
    image: gitlab/gitlab-ce:latest
    hostname: gitlab.example.com
    restart: unless-stopped
    environment:
      GITLAB_OMNIBUS_CONFIG: |
        gitlab_rails['gitlab_shell_ssh_port'] = 22222
    ports:
      - "80:80"
      - "22222:22"
    volumes:
      - /srv/podman/gitlab/conf:/etc/gitlab
      - /srv/podman/gitlab/data:/var/opt/gitlab
      - /srv/podman/gitlab/logs:/var/log/gitlab
    networks:
      - gitlab
  gitlab-runner:
    image: gitlab/gitlab-runner:alpine
    restart: unless-stopped
    depends_on:
      - gitlab
    volumes:
      - /srv/podman/gitlab/runner:/etc/gitlab-runner
      - /var/run/docker.sock:/var/run/docker.sock
    networks:
      - gitlab

networks:
  gitlab:

# podman-compose -f gitlab-podman.yml up -d

Result:

# podman ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

da53da946c01 docker.io/gitlab/gitlab-runner:alpine run --user=gitlab... About a minute ago Up About a minute ago 0.0.0.0:22222->22/tcp, 0.0.0.0:80->80/tcp root_gitlab-runner_1

781c0103c94a docker.io/gitlab/gitlab-ce:latest /assets/wrapper About a minute ago Up About a minute ago 0.0.0.0:22222->22/tcp, 0.0.0.0:80->80/tcp root_gitlab_1

Let's see what it generates for systemd and kubernetes; for this we need to find out the name or id of the pod:

# podman pod ls

POD ID NAME STATUS CREATED # OF CONTAINERS INFRA ID

71fc2b2a5c63 root Running 11 minutes ago 3 db40ab8bf84b

Kubernetes:

# podman generate kube 71fc2b2a5c63

# Generation of Kubernetes YAML is still under development!

#

# Save the output of this file and use kubectl create -f to import

# it into Kubernetes.

#

# Created with podman-1.6.4

apiVersion: v1
kind: Pod
metadata:
  creationTimestamp: "2020-07-29T19:22:40Z"
  labels:
    app: root
  name: root
spec:
  containers:
  - command:
    - /assets/wrapper
    env:
    - name: PATH
      value: /opt/gitlab/embedded/bin:/opt/gitlab/bin:/assets:/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin
    - name: TERM
      value: xterm
    - name: HOSTNAME
      value: gitlab.example.com
    - name: container
      value: podman
    - name: GITLAB_OMNIBUS_CONFIG
      value: |
        gitlab_rails['gitlab_shell_ssh_port'] = 22222
    - name: LANG
      value: C.UTF-8
    image: docker.io/gitlab/gitlab-ce:latest
    name: rootgitlab1
    ports:
    - containerPort: 22
      hostPort: 22222
      protocol: TCP
    - containerPort: 80
      hostPort: 80
      protocol: TCP
    resources: {}
    securityContext:
      allowPrivilegeEscalation: true
      capabilities: {}
      privileged: false
      readOnlyRootFilesystem: false
    volumeMounts:
    - mountPath: /var/opt/gitlab
      name: srv-podman-gitlab-data
    - mountPath: /var/log/gitlab
      name: srv-podman-gitlab-logs
    - mountPath: /etc/gitlab
      name: srv-podman-gitlab-conf
    workingDir: /
  - command:
    - run
    - --user=gitlab-runner
    - --working-directory=/home/gitlab-runner
    env:
    - name: PATH
      value: /usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin
    - name: TERM
      value: xterm
    - name: HOSTNAME
    - name: container
      value: podman
    image: docker.io/gitlab/gitlab-runner:alpine
    name: rootgitlab-runner1
    resources: {}
    securityContext:
      allowPrivilegeEscalation: true
      capabilities: {}
      privileged: false
      readOnlyRootFilesystem: false
    volumeMounts:
    - mountPath: /etc/gitlab-runner
      name: srv-podman-gitlab-runner
    - mountPath: /var/run/docker.sock
      name: var-run-docker.sock
    workingDir: /
  volumes:
  - hostPath:
      path: /srv/podman/gitlab/runner
      type: Directory
    name: srv-podman-gitlab-runner
  - hostPath:
      path: /var/run/docker.sock
      type: File
    name: var-run-docker.sock
  - hostPath:
      path: /srv/podman/gitlab/data
      type: Directory
    name: srv-podman-gitlab-data
  - hostPath:
      path: /srv/podman/gitlab/logs
      type: Directory
    name: srv-podman-gitlab-logs
  - hostPath:
      path: /srv/podman/gitlab/conf
      type: Directory
    name: srv-podman-gitlab-conf
status: {}

Systemd:

# podman generate systemd 71fc2b2a5c63

# pod-71fc2b2a5c6346f0c1c86a2dc45dbe78fa192ea02aac001eb8347ccb8c043c26.service

# autogenerated by Podman 1.6.4

# Thu Jul 29 15:23:28 EDT 2020

[Unit]

Description=Podman pod-71fc2b2a5c6346f0c1c86a2dc45dbe78fa192ea02aac001eb8347ccb8c043c26.service

Documentation=man:podman-generate-systemd(1)

Requires=container-781c0103c94aaa113c17c58d05ddabf8df4bf39707b664abcf17ed2ceff467d3.service container-da53da946c01449f500aa5296d9ea6376f751948b17ca164df438b7df6607864.service

Before=container-781c0103c94aaa113c17c58d05ddabf8df4bf39707b664abcf17ed2ceff467d3.service container-da53da946c01449f500aa5296d9ea6376f751948b17ca164df438b7df6607864.service

[Service]

Restart=on-failure

ExecStart=/usr/bin/podman start db40ab8bf84bf35141159c26cb6e256b889c7a98c0418eee3c4aa683c14fccaa

ExecStop=/usr/bin/podman stop -t 10 db40ab8bf84bf35141159c26cb6e256b889c7a98c0418eee3c4aa683c14fccaa

KillMode=none

Type=forking

PIDFile=/var/run/containers/storage/overlay-containers/db40ab8bf84bf35141159c26cb6e256b889c7a98c0418eee3c4aa683c14fccaa/userdata/conmon.pid

[Install]

WantedBy=multi-user.target

# container-da53da946c01449f500aa5296d9ea6376f751948b17ca164df438b7df6607864.service

# autogenerated by Podman 1.6.4

# Thu Jul 29 15:23:28 EDT 2020

[Unit]

Description=Podman container-da53da946c01449f500aa5296d9ea6376f751948b17ca164df438b7df6607864.service

Documentation=man:podman-generate-systemd(1)

RefuseManualStart=yes

RefuseManualStop=yes

BindsTo=pod-71fc2b2a5c6346f0c1c86a2dc45dbe78fa192ea02aac001eb8347ccb8c043c26.service

After=pod-71fc2b2a5c6346f0c1c86a2dc45dbe78fa192ea02aac001eb8347ccb8c043c26.service

[Service]

Restart=on-failure

ExecStart=/usr/bin/podman start da53da946c01449f500aa5296d9ea6376f751948b17ca164df438b7df6607864

ExecStop=/usr/bin/podman stop -t 10 da53da946c01449f500aa5296d9ea6376f751948b17ca164df438b7df6607864

KillMode=none

Type=forking

PIDFile=/var/run/containers/storage/overlay-containers/da53da946c01449f500aa5296d9ea6376f751948b17ca164df438b7df6607864/userdata/conmon.pid

[Install]

WantedBy=multi-user.target

# container-781c0103c94aaa113c17c58d05ddabf8df4bf39707b664abcf17ed2ceff467d3.service

# autogenerated by Podman 1.6.4

# Thu Jul 29 15:23:28 EDT 2020

[Unit]

Description=Podman container-781c0103c94aaa113c17c58d05ddabf8df4bf39707b664abcf17ed2ceff467d3.service

Documentation=man:podman-generate-systemd(1)

RefuseManualStart=yes

RefuseManualStop=yes

BindsTo=pod-71fc2b2a5c6346f0c1c86a2dc45dbe78fa192ea02aac001eb8347ccb8c043c26.service

After=pod-71fc2b2a5c6346f0c1c86a2dc45dbe78fa192ea02aac001eb8347ccb8c043c26.service

[Service]

Restart=on-failure

ExecStart=/usr/bin/podman start 781c0103c94aaa113c17c58d05ddabf8df4bf39707b664abcf17ed2ceff467d3

ExecStop=/usr/bin/podman stop -t 10 781c0103c94aaa113c17c58d05ddabf8df4bf39707b664abcf17ed2ceff467d3

KillMode=none

Type=forking

PIDFile=/var/run/containers/storage/overlay-containers/781c0103c94aaa113c17c58d05ddabf8df4bf39707b664abcf17ed2ceff467d3/userdata/conmon.pid

[Install]

WantedBy=multi-user.target

Unfortunately, apart from starting and stopping containers, the generated systemd unit does nothing else (for example, it does not clean up old containers when such a service stops), so you will have to write such things yourself.
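
As one possible illustration of writing such a unit yourself, here is a minimal sketch of a shell helper that prints a hand-written unit which removes a leftover container before each start. The function name `render_container_unit`, the container name `example-app` and the image name are hypothetical and not taken from the article. Running `podman run` in the foreground under systemd is an assumption of this sketch, not what `podman generate systemd` produces.

```shell
#!/bin/sh
# Hypothetical helper: prints a hand-written systemd unit for one container.
# $1 is the container name, $2 is the image; both are illustrative arguments.
render_container_unit() {
    name="$1"
    image="$2"
    cat <<EOF
[Unit]
Description=Hand-written Podman unit for ${name}

[Service]
Restart=on-failure
# Remove a leftover container with the same name before each start.
# The leading "-" makes systemd ignore a failure (for example, nothing to remove).
ExecStartPre=-/usr/bin/podman rm -f ${name}
ExecStart=/usr/bin/podman run --name ${name} ${image}
ExecStop=/usr/bin/podman stop -t 10 ${name}

[Install]
WantedBy=multi-user.target
EOF
}
```

For example, `render_container_unit example-app docker.io/library/nginx:latest` prints a unit that could be saved under /etc/systemd/system/ and adjusted by hand. Unlike the generated units above, it recreates the container on every start instead of starting an existing one by ID.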

In principle, Podman is quite enough to try out what containers are and to migrate old docker-compose configurations. After that you can either move toward Kubernetes, if you need a cluster, or simply keep an easier-to-use alternative to Docker.

rkt

The project was archived about six months ago after RedHat bought it, so I will not dwell on it in detail. Overall it left a very good impression, but compared with Docker, and especially with Podman, it looks like an all-in-one combine. There was also the CoreOS distribution built on top of rkt (although it originally used Docker), but its support also ended after the RedHat acquisition.

Plush

One more project, whose author simply wanted to build and run containers. Judging by the documentation and the code, the author did not follow the standards but simply decided to write their own implementation, which, in principle, they did.

Conclusions

The situation with Kubernetes is quite interesting: on the one hand, with Docker you can build a cluster (in swarm mode) on which you can even run clients' production environments. This is especially relevant for small teams (3-5 people), for a low overall load, or when there is no desire to dig into the intricacies of setting up Kubernetes, including for high loads.

Podman does not provide full compatibility with Docker, but it has one important advantage: compatibility with Kubernetes, including its additional tools (Buildah and others). So I would approach the choice of a working tool as follows: for small teams or a limited budget, Docker (possibly in swarm mode); for development for myself on a personal localhost, Podman; and for everyone else, Kubernetes.

I am not sure that the situation with Docker will not change in the future; after all, they are the pioneers and are gradually, step by step, being standardized. But for Podman, despite all its shortcomings (it works only on Linux, has no clustering, and building and other actions are handed off to third-party solutions), the future is clearer. I invite everyone to discuss these conclusions in the comments.

P.S. On August 3 we are launching the Docker video course, where you can learn more about how it works. We will go through all of its tools: from basic abstractions to network parameters and the nuances of working with various operating systems and programming languages. You will get to know the technology and understand where and how best to use Docker. We will also share best-practice cases.

Pre-order price before the release: 5000. The Docker video course program is available for viewing.

Source: www.habr.com