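Vector is designed to collect, transform, and send log data, metrics, and events.

It is written in Rust and, compared with similar tools, offers high performance and low RAM consumption. In addition, much attention is paid to correctness-related features, in particular the ability to save unsent events to an on-disk buffer and to handle file rotation.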

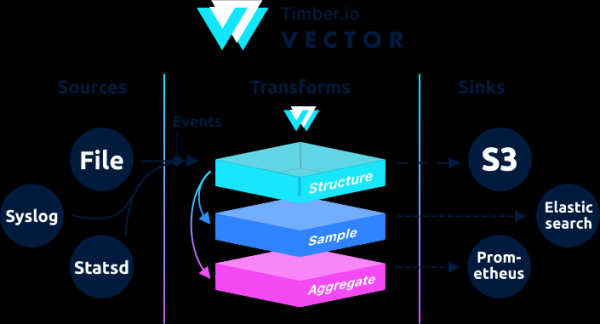

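Architecturally, Vector is an event router that accepts messages from one or more sources, optionally applies transforms to these messages, and sends them to one or more sinks.

Vector is a replacement for Filebeat and Logstash. It can act in both roles (receiving and sending logs).

If in Logstash the chain is built as input → filter → output, then in Vector it is sources → transforms → sinks.

Examples can be found in the documentation.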
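To make the sources → transforms → sinks chain concrete, here is a toy Python sketch of the routing idea. It is not Vector's implementation or API: `run_pipeline`, the sample event, and the lambda transform are hypothetical names introduced only for illustration.

```python
from typing import Callable, Dict, Iterable, List

Event = Dict[str, str]


def run_pipeline(
    source: Iterable[Event],
    transforms: List[Callable[[Event], Event]],
    sinks: List[Callable[[Event], None]],
) -> None:
    """Pass every event from the source through all transforms, then to every sink."""
    for event in source:
        for transform in transforms:
            event = transform(event)
        for sink in sinks:
            sink(dict(event))  # each sink receives its own copy of the event


# Two in-memory lists stand in for the two sinks used in this article.
clickhouse_rows: List[Event] = []
elasticsearch_docs: List[Event] = []

run_pipeline(
    source=[{"message": "GET / HTTP/1.0"}],
    transforms=[lambda e: {**e, "node_name": "nginx-vector"}],
    sinks=[clickhouse_rows.append, elasticsearch_docs.append],
)
print(clickhouse_rows)
```

One stream of events fans out to several sinks, which is the same shape as the setup below, where a single transformed stream is delivered to both Clickhouse and Elasticsearch.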

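These instructions are a reworked version of an earlier guide. The original instructions include geoip processing. When I tested geoip from an internal network, vector gave me an error.

Aug 05 06:25:31.889 DEBUG transform{name=nginx_parse_rename_fields type=rename_fields}: vector::transforms::rename_fields: Field did not exist field="geoip.country_name" rate_limit_secs=30

If you need geoip processing, refer to the original instructions.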

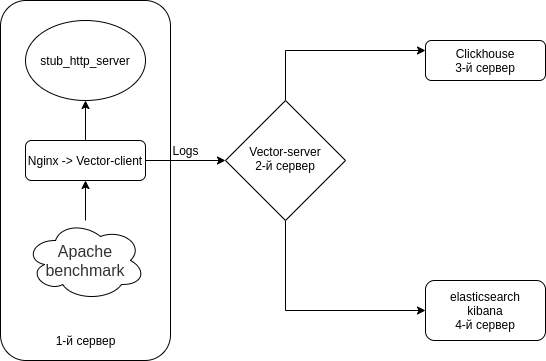

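We will configure the chain Nginx (access logs) → Vector (Client | Filebeat) → Vector (Server | Logstash) → Clickhouse and, separately, → Elasticsearch. We set up 4 servers, although you can get by with 3.

The scheme is roughly as follows.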

Disable SELinux on all servers

sed -i 's/^SELINUX=.*/SELINUX=disabled/g' /etc/selinux/config

reboot

Install the HTTP server emulator and utilities on all servers

As the HTTP server emulator we will use nodejs-stub-server.

nodejs-stub-server does not ship as an rpm, so we build one for it. The rpm is built with Copr.

Add the antonpatsev/nodejs-stub-server repository

yum -y install yum-plugin-copr epel-release

yes | yum copr enable antonpatsev/nodejs-stub-server

Install nodejs-stub-server, Apache benchmark, and the screen terminal multiplexer on all servers

yum -y install stub_http_server screen mc httpd-tools

In /var/lib/stub_http_server/stub_http_server.js, adjust the stub_http_server response time so that more log entries are produced.

var max_sleep = 10;

Start stub_http_server.

systemctl start stub_http_server

systemctl enable stub_http_server

Installing Clickhouse on server 3

ClickHouse uses the SSE 4.2 instruction set, so unless stated otherwise, processor support for it is an additional system requirement. This command checks whether the current processor supports SSE 4.2:

grep -q sse4_2 /proc/cpuinfo && echo "SSE 4.2 supported" || echo "SSE 4.2 not supported"

First, add the official repository:

sudo yum install -y yum-utils

sudo rpm --import https://repo.clickhouse.tech/CLICKHOUSE-KEY.GPG

sudo yum-config-manager --add-repo https://repo.clickhouse.tech/rpm/stable/x86_64

To install the packages, run:

sudo yum install -y clickhouse-server clickhouse-client

In /etc/clickhouse-server/config.xml, allow clickhouse-server to listen on the network interface

<listen_host>0.0.0.0</listen_host>

Change the logging level from trace to debug

debug

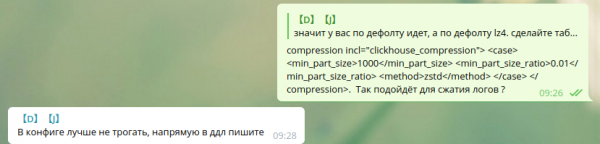

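The compression settings are the defaults:

min_compress_block_size 65536

max_compress_block_size 1048576

To enable Zstd compression, the advice was to leave the config alone and use DDL instead.

I could not find via Google how to apply zstd compression through DDL, so I left it as is.

Colleagues who use zstd compression in Clickhouse, please share instructions.

To start the server as a daemon, run:

service clickhouse-server start

Now let's move on to configuring Clickhouse

Connect to Clickhouse

clickhouse-client -h 172.26.10.109 -m

172.26.10.109 is the IP of the server where Clickhouse is installed.

Create the vector database

CREATE DATABASE vector;

Check that the database exists.

show databases;

Create the vector.logs table.

/* This table stores the logs as is */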

CREATE TABLE vector.logs

(

`node_name` String,

`timestamp` DateTime,

`server_name` String,

`user_id` String,

`request_full` String,

`request_user_agent` String,

`request_http_host` String,

`request_uri` String,

`request_scheme` String,

`request_method` String,

`request_length` UInt64,

`request_time` Float32,

`request_referrer` String,

`response_status` UInt16,

`response_body_bytes_sent` UInt64,

`response_content_type` String,

`remote_addr` IPv4,

`remote_port` UInt32,

`remote_user` String,

`upstream_addr` IPv4,

`upstream_port` UInt32,

`upstream_bytes_received` UInt64,

`upstream_bytes_sent` UInt64,

`upstream_cache_status` String,

`upstream_connect_time` Float32,

`upstream_header_time` Float32,

`upstream_response_length` UInt64,

`upstream_response_time` Float32,

`upstream_status` UInt16,

`upstream_content_type` String,

INDEX idx_http_host request_http_host TYPE set(0) GRANULARITY 1

)

ENGINE = MergeTree()

PARTITION BY toYYYYMMDD(timestamp)

ORDER BY timestamp

TTL timestamp + toIntervalMonth(1)

SETTINGS index_granularity = 8192;

Check that the table was created. Start clickhouse-client and run a query.

Switch to the vector database.

use vector;

Ok.

0 rows in set. Elapsed: 0.001 sec.

List the tables.

show tables;

┌─name────────────────┐

│ logs │

└─────────────────────┘

Installing elasticsearch on server 4

We send the same data to Elasticsearch to compare it with Clickhouse

Add the public rpm key

rpm --import https://artifacts.elastic.co/GPG-KEY-elasticsearch

Create 2 repo files:

/etc/yum.repos.d/elasticsearch.repo

[elasticsearch]

name=Elasticsearch repository for 7.x packages

baseurl=https://artifacts.elastic.co/packages/7.x/yum

gpgcheck=1

gpgkey=https://artifacts.elastic.co/GPG-KEY-elasticsearch

enabled=0

autorefresh=1

type=rpm-md

/etc/yum.repos.d/kibana.repo

[kibana-7.x]

name=Kibana repository for 7.x packages

baseurl=https://artifacts.elastic.co/packages/7.x/yum

gpgcheck=1

gpgkey=https://artifacts.elastic.co/GPG-KEY-elasticsearch

enabled=1

autorefresh=1

type=rpm-md

Install elasticsearch and kibana

yum install -y kibana elasticsearch

Since it will run as a single instance, add the following to /etc/elasticsearch/elasticsearch.yml:

discovery.type: single-node

So that vector can send data to elasticsearch from another server, change network.host.

network.host: 0.0.0.0

To connect to kibana, change the server.host parameter in /etc/kibana/kibana.yml

server.host: "0.0.0.0"

Start elasticsearch and enable it at boot

systemctl enable elasticsearch

systemctl start elasticsearch

and kibana

systemctl enable kibana

systemctl start kibana

Configure Elasticsearch for single-node mode: 1 shard, 0 replicas. Most likely you will have a cluster with many servers, in which case you do not need to do this.

For future indices, update the default template:

curl -X PUT http://localhost:9200/_template/default -H 'Content-Type: application/json' -d '{"index_patterns": ["*"],"order": -1,"settings": {"number_of_shards": "1","number_of_replicas": "0"}}'

Installing Vector as a replacement for Logstash on server 2

yum install -y https://packages.timber.io/vector/0.9.X/vector-x86_64.rpm mc httpd-tools screen

Configure Vector as the Logstash replacement. Edit the file /etc/vector/vector.toml

# /etc/vector/vector.toml

data_dir = "/var/lib/vector"

[sources.nginx_input_vector]

# General

type = "vector"

address = "0.0.0.0:9876"

shutdown_timeout_secs = 30

[transforms.nginx_parse_json]

inputs = [ "nginx_input_vector" ]

type = "json_parser"

[transforms.nginx_parse_add_defaults]

inputs = [ "nginx_parse_json" ]

type = "lua"

version = "2"

hooks.process = """

function (event, emit)

function split_first(s, delimiter)

result = {};

for match in (s..delimiter):gmatch("(.-)"..delimiter) do

table.insert(result, match);

end

return result[1];

end

function split_last(s, delimiter)

result = {};

for match in (s..delimiter):gmatch("(.-)"..delimiter) do

table.insert(result, match);

end

return result[#result];

end

event.log.upstream_addr = split_first(split_last(event.log.upstream_addr, ', '), ':')

event.log.upstream_bytes_received = split_last(event.log.upstream_bytes_received, ', ')

event.log.upstream_bytes_sent = split_last(event.log.upstream_bytes_sent, ', ')

event.log.upstream_connect_time = split_last(event.log.upstream_connect_time, ', ')

event.log.upstream_header_time = split_last(event.log.upstream_header_time, ', ')

event.log.upstream_response_length = split_last(event.log.upstream_response_length, ', ')

event.log.upstream_response_time = split_last(event.log.upstream_response_time, ', ')

event.log.upstream_status = split_last(event.log.upstream_status, ', ')

if event.log.upstream_addr == "" then

event.log.upstream_addr = "127.0.0.1"

end

if (event.log.upstream_bytes_received == "-" or event.log.upstream_bytes_received == "") then

event.log.upstream_bytes_received = "0"

end

if (event.log.upstream_bytes_sent == "-" or event.log.upstream_bytes_sent == "") then

event.log.upstream_bytes_sent = "0"

end

if event.log.upstream_cache_status == "" then

event.log.upstream_cache_status = "DISABLED"

end

if (event.log.upstream_connect_time == "-" or event.log.upstream_connect_time == "") then

event.log.upstream_connect_time = "0"

end

if (event.log.upstream_header_time == "-" or event.log.upstream_header_time == "") then

event.log.upstream_header_time = "0"

end

if (event.log.upstream_response_length == "-" or event.log.upstream_response_length == "") then

event.log.upstream_response_length = "0"

end

if (event.log.upstream_response_time == "-" or event.log.upstream_response_time == "") then

event.log.upstream_response_time = "0"

end

if (event.log.upstream_status == "-" or event.log.upstream_status == "") then

event.log.upstream_status = "0"

end

emit(event)

end

"""

[transforms.nginx_parse_remove_fields]

inputs = [ "nginx_parse_add_defaults" ]

type = "remove_fields"

fields = ["data", "file", "host", "source_type"]

[transforms.nginx_parse_coercer]

type = "coercer"

inputs = ["nginx_parse_remove_fields"]

types.request_length = "int"

types.request_time = "float"

types.response_status = "int"

types.response_body_bytes_sent = "int"

types.remote_port = "int"

types.upstream_bytes_received = "int"

types.upstream_bytes_sent = "int"

types.upstream_connect_time = "float"

types.upstream_header_time = "float"

types.upstream_response_length = "int"

types.upstream_response_time = "float"

types.upstream_status = "int"

types.timestamp = "timestamp"

[sinks.nginx_output_clickhouse]

inputs = ["nginx_parse_coercer"]

type = "clickhouse"

database = "vector"

healthcheck = true

host = "http://172.26.10.109:8123" # Clickhouse address

table = "logs"

encoding.timestamp_format = "unix"

buffer.type = "disk"

buffer.max_size = 104900000

buffer.when_full = "block"

request.in_flight_limit = 20

[sinks.elasticsearch]

type = "elasticsearch"

inputs = ["nginx_parse_coercer"]

compression = "none"

healthcheck = true

# 172.26.10.116 - the server where elasticsearch is installed

host = "http://172.26.10.116:9200"

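index = "vector-%Y-%m-%d"

You can adjust the transforms.nginx_parse_add_defaults section.

The author of the original instructions uses these configs for a small CDN, where the upstream_* fields can contain several values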

For example:

"upstream_addr": "128.66.0.10:443, 128.66.0.11:443, 128.66.0.12:443"

"upstream_bytes_received": "-, -, 123"

"upstream_status": "502, 502, 200"

If this is not your case, this section can be simplified

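The multi-value handling done by the Lua hook in nginx_parse_add_defaults can be sketched in Python as follows. This is an illustrative re-implementation, not part of the Vector config: `normalize_upstream` is a hypothetical helper, and only three of the upstream_* fields are handled to keep it short.

```python
def split_last(value: str, delimiter: str = ", ") -> str:
    """Return the last element of a delimiter-separated string."""
    return value.split(delimiter)[-1]


def split_first(value: str, delimiter: str) -> str:
    """Return the first element of a delimiter-separated string."""
    return value.split(delimiter)[0]


def normalize_upstream(event: dict) -> dict:
    """Keep only the last upstream attempt and replace empty values with defaults."""
    out = dict(event)
    # Last upstream in the list, with the ":port" suffix dropped.
    out["upstream_addr"] = split_first(split_last(event["upstream_addr"]), ":")
    if out["upstream_addr"] == "":
        out["upstream_addr"] = "127.0.0.1"
    for key in ("upstream_bytes_received", "upstream_status"):
        last = split_last(event[key])
        out[key] = "0" if last in ("-", "") else last
    return out


sample = {
    "upstream_addr": "128.66.0.10:443, 128.66.0.11:443, 128.66.0.12:443",
    "upstream_bytes_received": "-, -, 123",
    "upstream_status": "502, 502, 200",
}
normalized = normalize_upstream(sample)
print(normalized)
```

Only the values of the final upstream attempt survive, which is what lets each field fit a single-valued Clickhouse column.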
Create the systemd service settings in /etc/systemd/system/vector.service

# /etc/systemd/system/vector.service

[Unit]

Description=Vector

After=network-online.target

Requires=network-online.target

[Service]

User=vector

Group=vector

ExecStart=/usr/bin/vector

ExecReload=/bin/kill -HUP $MAINPID

Restart=no

StandardOutput=syslog

StandardError=syslog

SyslogIdentifier=vector

[Install]

WantedBy=multi-user.target

Once the tables are created, you can start Vector

systemctl enable vector

systemctl start vector

Vector logs can be viewed like this

journalctl -f -u vector

The logs should contain entries like these

INFO vector::topology::builder: Healthcheck: Passed.

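INFO vector::topology::builder: Healthcheck: Passed.

On the client (web server): the first server

On the server with nginx, you need to disable ipv6, because the logs table in clickhouse uses the IPv4 type for the upstream_addr field, since I do not use ipv6 internally. If ipv6 is not disabled, you will get errors:

DB::Exception: Invalid IPv4 value.: (while read the value of key upstream_addr)

Perhaps readers will add ipv6 support.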
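A minimal Python sketch of why this error appears, using the standard-library `ipaddress` module as a stand-in for the check Clickhouse applies to an IPv4 column. `fits_ipv4_column` is a hypothetical helper, and `::1` is an assumed example of an IPv6 upstream address.

```python
import ipaddress


def fits_ipv4_column(addr: str) -> bool:
    """Return True if addr parses as an IPv4 address, False otherwise."""
    try:
        ipaddress.IPv4Address(addr)
        return True
    except ValueError:  # AddressValueError is a subclass of ValueError
        return False


print(fits_ipv4_column("172.26.10.106"))
print(fits_ipv4_column("::1"))
```

An IPv4 upstream address passes the check, while an IPv6 one does not, so rows with an IPv6 upstream_addr cannot be inserted into the table as defined above.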

Create the file /etc/sysctl.d/98-disable-ipv6.conf

net.ipv6.conf.all.disable_ipv6 = 1

net.ipv6.conf.default.disable_ipv6 = 1

net.ipv6.conf.lo.disable_ipv6 = 1

Apply the settings

sysctl --system

Install nginx.

Add the nginx repository file /etc/yum.repos.d/nginx.repo

[nginx-stable]

name=nginx stable repo

baseurl=http://nginx.org/packages/centos/$releasever/$basearch/

gpgcheck=1

enabled=1

gpgkey=https://nginx.org/keys/nginx_signing.key

module_hotfixes=true

Install the nginx package

yum install -y nginx

First, configure the Nginx log format in /etc/nginx/nginx.conf

user nginx;

# you must set worker processes based on your CPU cores, nginx does not benefit from setting more than that

worker_processes auto; #some last versions calculate it automatically

# number of file descriptors used for nginx

# the limit for the maximum FDs on the server is usually set by the OS.

# if you don't set FD's then OS settings will be used which is by default 2000

worker_rlimit_nofile 100000;

error_log /var/log/nginx/error.log warn;

pid /var/run/nginx.pid;

# provides the configuration file context in which the directives that affect connection processing are specified.

events {

# determines how much clients will be served per worker

# max clients = worker_connections * worker_processes

# max clients is also limited by the number of socket connections available on the system (~64k)

worker_connections 4000;

# optimized to serve many clients with each thread, essential for linux -- for testing environment

use epoll;

# accept as many connections as possible, may flood worker connections if set too low -- for testing environment

multi_accept on;

}

http {

include /etc/nginx/mime.types;

default_type application/octet-stream;

log_format main '$remote_addr - $remote_user [$time_local] "$request" '

'$status $body_bytes_sent "$http_referer" '

'"$http_user_agent" "$http_x_forwarded_for"';

log_format vector escape=json

'{'

'"node_name":"nginx-vector",'

'"timestamp":"$time_iso8601",'

'"server_name":"$server_name",'

'"request_full": "$request",'

'"request_user_agent":"$http_user_agent",'

'"request_http_host":"$http_host",'

'"request_uri":"$request_uri",'

'"request_scheme": "$scheme",'

'"request_method":"$request_method",'

'"request_length":"$request_length",'

'"request_time": "$request_time",'

'"request_referrer":"$http_referer",'

'"response_status": "$status",'

'"response_body_bytes_sent":"$body_bytes_sent",'

'"response_content_type":"$sent_http_content_type",'

'"remote_addr": "$remote_addr",'

'"remote_port": "$remote_port",'

'"remote_user": "$remote_user",'

'"upstream_addr": "$upstream_addr",'

'"upstream_bytes_received": "$upstream_bytes_received",'

'"upstream_bytes_sent": "$upstream_bytes_sent",'

'"upstream_cache_status":"$upstream_cache_status",'

'"upstream_connect_time":"$upstream_connect_time",'

'"upstream_header_time":"$upstream_header_time",'

'"upstream_response_length":"$upstream_response_length",'

'"upstream_response_time":"$upstream_response_time",'

'"upstream_status": "$upstream_status",'

'"upstream_content_type":"$upstream_http_content_type"'

'}';

access_log /var/log/nginx/access.log main;

access_log /var/log/nginx/access.json.log vector; # New log in json format

sendfile on;

#tcp_nopush on;

keepalive_timeout 65;

#gzip on;

include /etc/nginx/conf.d/*.conf;

}

To avoid breaking your current configuration, Nginx lets you have several access_log directives

access_log /var/log/nginx/access.log main; # Standard log

access_log /var/log/nginx/access.json.log vector; # New log in json format

Do not forget to add a logrotate rule for the new logs (if the log file name does not end in .log)
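Each line written with the vector log format is a single JSON object in which every value is a string. The json_parser and coercer transforms on the Vector server then parse the line and convert selected fields to numbers. A rough Python sketch of that idea follows; `parse_access_line` and the shortened sample line are hypothetical, and only four of the coerced fields are shown.

```python
import json

# Subset of the fields typed by the coercer transform in this article.
COERCIONS = {
    "request_length": int,
    "request_time": float,
    "response_status": int,
    "remote_port": int,
}


def parse_access_line(line: str) -> dict:
    """Parse one JSON log line and convert known fields to numeric types."""
    event = json.loads(line)
    for field, cast in COERCIONS.items():
        if field in event:
            event[field] = cast(event[field])
    return event


sample_line = (
    '{"node_name":"nginx-vector","request_length":"66",'
    '"request_time": "0.028","response_status": "404","remote_port": "45886"}'
)
event = parse_access_line(sample_line)
print(event)
```

Fields not listed in the mapping stay as strings, which matches the String columns of the vector.logs table.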

Remove default.conf from /etc/nginx/conf.d/

rm -f /etc/nginx/conf.d/default.conf

Add the virtual host /etc/nginx/conf.d/vhost1.conf

server {

listen 80;

server_name vhost1;

location / {

proxy_pass http://172.26.10.106:8080;

}

}

Add the virtual host /etc/nginx/conf.d/vhost2.conf

server {

listen 80;

server_name vhost2;

location / {

proxy_pass http://172.26.10.108:8080;

}

}

Add the virtual host /etc/nginx/conf.d/vhost3.conf

server {

listen 80;

server_name vhost3;

location / {

proxy_pass http://172.26.10.109:8080;

}

}

Add the virtual host /etc/nginx/conf.d/vhost4.conf

server {

listen 80;

server_name vhost4;

location / {

proxy_pass http://172.26.10.116:8080;

}

}

Add the virtual hosts to /etc/hosts on all servers (172.26.10.106 is the IP of the server where nginx is installed):

172.26.10.106 vhost1

172.26.10.106 vhost2

172.26.10.106 vhost3

172.26.10.106 vhost4

If everything is ready

nginx -t

systemctl restart nginx

Now install Vector

yum install -y https://packages.timber.io/vector/0.9.X/vector-x86_64.rpm

Create a settings file for systemd /etc/systemd/system/vector.service

[Unit]

Description=Vector

After=network-online.target

Requires=network-online.target

[Service]

User=vector

Group=vector

ExecStart=/usr/bin/vector

ExecReload=/bin/kill -HUP $MAINPID

Restart=no

StandardOutput=syslog

StandardError=syslog

SyslogIdentifier=vector

[Install]

WantedBy=multi-user.target

Then configure the Filebeat replacement in /etc/vector/vector.toml. The IP address 172.26.10.108 is the IP of the log server (Vector-Server)

data_dir = "/var/lib/vector"

[sources.nginx_file]

type = "file"

include = [ "/var/log/nginx/access.json.log" ]

start_at_beginning = false

fingerprinting.strategy = "device_and_inode"

[sinks.nginx_output_vector]

type = "vector"

inputs = [ "nginx_file" ]

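address = "172.26.10.108:9876"

Do not forget to add the vector user to the appropriate group so that it can read the log files. For example, nginx on centos creates logs with adm group permissions.

usermod -a -G adm vector

Start the vector service

systemctl enable vector

systemctl start vector

Vector logs can be viewed like this

journalctl -f -u vector

The logs should contain something like this

INFO vector::topology::builder: Healthcheck: Passed.

Load testing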

Testing is done with Apache benchmark.

The httpd-tools package was installed on all servers

We run Apache benchmark from 4 different servers inside screen. First we start the screen terminal multiplexer, and then we start the Apache benchmark test. For how to work with screen, see its documentation.

From the 1st server

while true; do ab -H "User-Agent: 1server" -c 100 -n 10 -t 10 http://vhost1/; sleep 1; done

From the 2nd server

while true; do ab -H "User-Agent: 2server" -c 100 -n 10 -t 10 http://vhost2/; sleep 1; done

From the 3rd server

while true; do ab -H "User-Agent: 3server" -c 100 -n 10 -t 10 http://vhost3/; sleep 1; done

From the 4th server

while true; do ab -H "User-Agent: 4server" -c 100 -n 10 -t 10 http://vhost4/; sleep 1; done

Check the data in Clickhouse

Connect to Clickhouse

clickhouse-client -h 172.26.10.109 -m

Run an SQL query

SELECT * FROM vector.logs;

┌─node_name────┬───────────timestamp─┬─server_name─┬─user_id─┬─request_full───┬─request_user_agent─┬─request_http_host─┬─request_uri─┬─request_scheme─┬─request_method─┬─request_length─┬─request_time─┬─request_referrer─┬─response_status─┬─response_body_bytes_sent─┬─response_content_type─┬───remote_addr─┬─remote_port─┬─remote_user─┬─upstream_addr─┬─upstream_port─┬─upstream_bytes_received─┬─upstream_bytes_sent─┬─upstream_cache_status─┬─upstream_connect_time─┬─upstream_header_time─┬─upstream_response_length─┬─upstream_response_time─┬─upstream_status─┬─upstream_content_type─┐

│ nginx-vector │ 2020-08-07 04:32:42 │ vhost1 │ │ GET / HTTP/1.0 │ 1server │ vhost1 │ / │ http │ GET │ 66 │ 0.028 │ │ 404 │ 27 │ │ 172.26.10.106 │ 45886 │ │ 172.26.10.106 │ 0 │ 109 │ 97 │ DISABLED │ 0 │ 0.025 │ 27 │ 0.029 │ 404 │ │

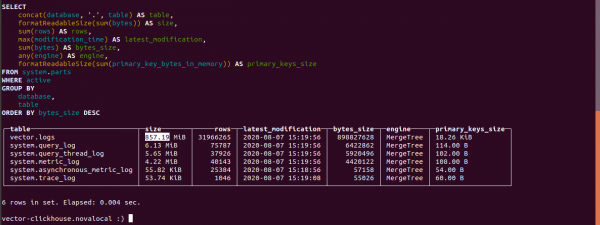

└──────────────┴─────────────────────┴─────────────┴─────────┴────────────────┴────────────────────┴───────────────────┴─────────────┴────────────────┴────────────────┴────────────────┴──────────────┴──────────────────┴─────────────────┴──────────────────────────┴───────────────────────┴───────────────┴─────────────┴─────────────┴───────────────┴───────────────┴─────────────────────────┴─────────────────────┴───────────────────────┴───────────────────────┴──────────────────────┴──────────────────────────┴────────────────────────┴─────────────────┴───────────────────────找出 Clickhouse 中表的大小

select concat(database, '.', table) as table,

formatReadableSize(sum(bytes)) as size,

sum(rows) as rows,

max(modification_time) as latest_modification,

sum(bytes) as bytes_size,

any(engine) as engine,

formatReadableSize(sum(primary_key_bytes_in_memory)) as primary_keys_size

from system.parts

where active

group by database, table

order by bytes_size desc;讓我們看看在 Clickhouse 中有多少日誌。

日誌表的大小為 857.19 MB。

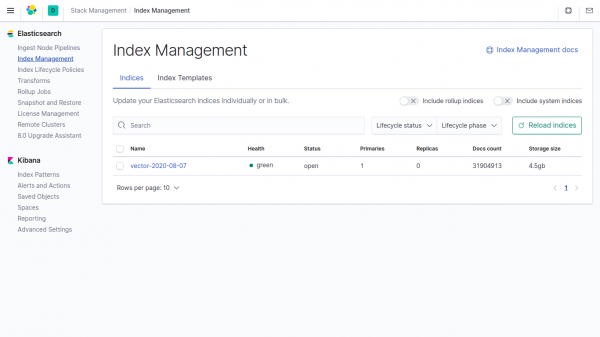

Elasticsearch 中索引中相同數據的大小為 4,5 GB。

如果在Clickhouse中不指定向量參數,則數據比在Elasticsearch中少了4500/857.19 = 5.24倍。

在 vector 中,默認使用壓縮字段。
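The size comparison can be reproduced with a few lines of Python. The sketch assumes, as the figures above do, that 4.5 GB is treated as 4500 MB.

```python
clickhouse_mb = 857.19          # size of vector.logs reported by system.parts
elasticsearch_mb = 4.5 * 1000   # 4.5 GB index, taken as 4500 MB

ratio = elasticsearch_mb / clickhouse_mb
print(f"Clickhouse stores the same data in {ratio:.2f} times less space")
```

This is a comparison of on-disk sizes for one test run only, so the exact ratio will vary with the data and the settings used.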


Source: www.habr.com