That's right: since its release in early May 2019 (version 1.5), Consul can natively authorize applications and services running in Kubernetes.

In this tutorial, we will build, step by step, a proof of concept (PoC) that demonstrates this new feature. You are expected to have basic knowledge of Kubernetes and HashiCorp's Consul. While you can use any cloud platform or local environment, in this tutorial we will use Google Cloud Platform.

Overview

If we go to the official documentation, we get a brief overview of the feature's purpose and use cases, along with some technical details and a general overview of the logic. I highly recommend reading it at least once before proceeding, as I will be unpacking all of it in detail below.

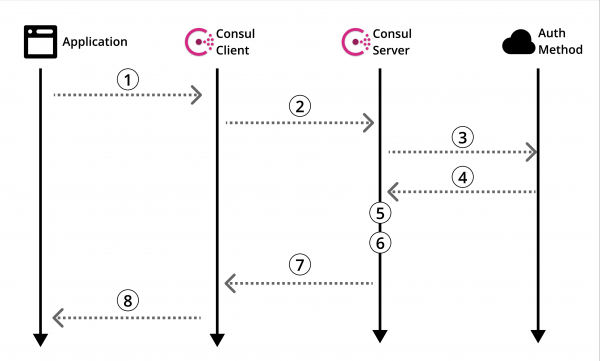

Diagram 1: Official overview of the Consul auth method

Let's look at the documentation for the Kubernetes auth method.

Of course, there is useful information there, but there is no guide on how to actually put it all to use. So, like any sane person, you scour the Internet for guidance. And then... you come up empty. It happens. Let's fix that.

Before we move on to creating our POC, let's go back to the Consul authorization methods overview (Diagram 1) and refine it in the context of Kubernetes.

Architecture

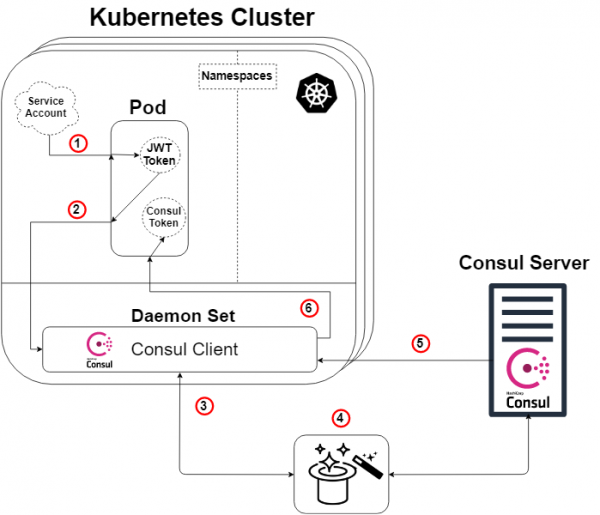

In this tutorial, we will create a Consul server on a separate machine that will interact with a Kubernetes cluster with the Consul client installed. We will then create our dummy app in the pod and use our configured authorization method to read from our Consul key/value store.

The diagram below shows in detail the architecture we create in this tutorial, as well as the authorization method logic, which will be explained later.

Diagram 2: Kubernetes Authorization Method Overview

A quick note: the Consul server doesn't need to live outside the Kubernetes cluster for this to work; it can run either inside or outside of it.

So, taking the Consul overview diagram (Diagram 1) and applying it to Kubernetes, we get the diagram above (Diagram 2). The logic is as follows:

1. Each pod has a service account attached to it, containing a JWT token generated and known by Kubernetes. By default, this token is also mounted into the pod.

2. Our application or service inside the pod initiates a login request to our Consul client. The request includes our token and the name of a specially created auth method instance (of type kubernetes). This step corresponds to step 1 of the Consul diagram (Diagram 1).

3. Our Consul client then forwards this request to our Consul server.

4. MAGIC! This is where the Consul server authenticates the request, gathers the requester's identity, and compares it against any associated predefined rules. Another diagram later in this article illustrates this. This step corresponds to steps 3, 4 and 5 of the Consul overview diagram (Diagram 1).

5. Our Consul server generates a Consul token whose permissions follow the auth method rules we defined for the requester's identity, and sends that token back. This corresponds to step 6 of the Consul diagram (Diagram 1).

6. Our Consul client forwards the token to the requesting application or service.

Our application or service can now use this Consul token to communicate with our Consul data, as defined by the token's privileges.
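To make step 1 concrete: the token the pod presents is an ordinary JWT, so you can peek at its claims by base64url-decoding its middle segment. Below is a minimal bash sketch; `jwt_payload` is a hypothetical helper name of mine, and it deliberately does not verify the signature, because verifying the token is exactly the job the Consul server hands over to Kubernetes in step 4.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print the decoded JSON payload of a JWT.
# It does NOT verify the signature; Kubernetes does that during token review.
jwt_payload() {
  local token="$1" payload
  # A JWT is header.payload.signature; take the middle segment.
  payload=$(printf '%s' "$token" | cut -d. -f2)
  # base64url -> standard base64: map '-' back to '+' and '_' back to '/'.
  payload=$(printf '%s' "$payload" | sed -e 's/-/+/g' -e 's#_#/#g')
  # JWTs strip the '=' padding; restore it so base64 -d accepts the input.
  case $(( ${#payload} % 4 )) in
    2) payload="${payload}==" ;;
    3) payload="${payload}=" ;;
  esac
  printf '%s' "$payload" | base64 -d
}
```

Inside a pod you could run `jwt_payload "$(cat /run/secrets/kubernetes.io/serviceaccount/token)"`. For the classic service account tokens of that era, the payload includes claims such as `kubernetes.io/serviceaccount/namespace` and `kubernetes.io/serviceaccount/service-account.name`, which carry the same namespace and name that Kubernetes later reports back to Consul.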

The magic is revealed!

For those of you who aren't satisfied with a rabbit pulled out of a hat and want to know how it works, let me show you how deep the rabbit hole goes.

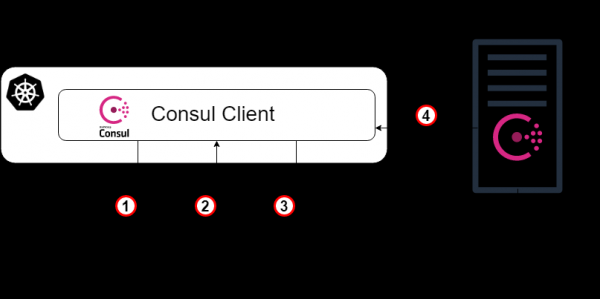

As mentioned earlier, our "magic" step (Scheme 2: Step 4) is for the Consul server to authenticate the request, gather information about the request, and compare it against any associated predefined rules. This step corresponds to steps 3, 4 and 5 of the Consul overview diagram (Diagram 1). Below is a diagram (Scheme 3), the purpose of which is to clearly show what is actually happening under the hood specific Kubernetes authorization method.

Diagram 3: The magic is revealed!

1. As a starting point, our Consul client forwards the login request to our Consul server along with the Kubernetes service account token and the name of the auth method instance we created earlier. This corresponds to step 3 of the previous walkthrough.

2. Now the Consul server (or leader) needs to verify that the received token is authentic. To do so, it queries the Kubernetes API (using the host and service account JWT configured on the auth method) and, given the appropriate permissions, finds out whether the token is genuine and who owns it.

3. The validated identity is then returned to the Consul leader, which looks up the auth method instance with the name specified in the login request (and of type kubernetes).

4. The Consul leader loads the specified auth method instance (if found) and reads the set of binding rules attached to it. It then evaluates those rules against the verified identity attributes.

5. Ta-da! Continue with step 5 of the previous walkthrough.
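For the Kubernetes auth method, the verified identity attributes come from the TokenReview response: for a service account token, Kubernetes reports the username `system:serviceaccount:<namespace>:<name>`. The sketch below (a hypothetical helper of mine, not Consul's code) shows how that username maps onto the `serviceaccount.namespace` and `serviceaccount.name` attributes that binding-rule selectors are written against. Consul additionally exposes `serviceaccount.uid`, which this sketch ignores.

```bash
#!/usr/bin/env bash
# Hypothetical helper: turn a TokenReview username into selector attributes.
sa_attributes() {
  local username="$1"
  local prefix="system:serviceaccount:"
  # Only service account identities carry namespace/name attributes.
  case "$username" in
    "$prefix"*) ;;
    *) echo "not a service account: $username" >&2; return 1 ;;
  esac
  local rest="${username#"$prefix"}"   # "<namespace>:<name>"
  printf 'serviceaccount.namespace=%s\n' "${rest%%:*}"
  printf 'serviceaccount.name=%s\n' "${rest#*:}"
}
```

For example, a pod in "custom-ns" using the default service account yields `serviceaccount.namespace=custom-ns`, which is what a selector such as `serviceaccount.namespace=="custom-ns"` is compared with.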

Running the Consul server on a regular virtual machine

From here on, I will mostly give terse instructions for building this PoC, often as bullet points rather than full explanatory sentences. Also, as noted earlier, I will use GCP to create the entire infrastructure, but you can build the same infrastructure anywhere else.

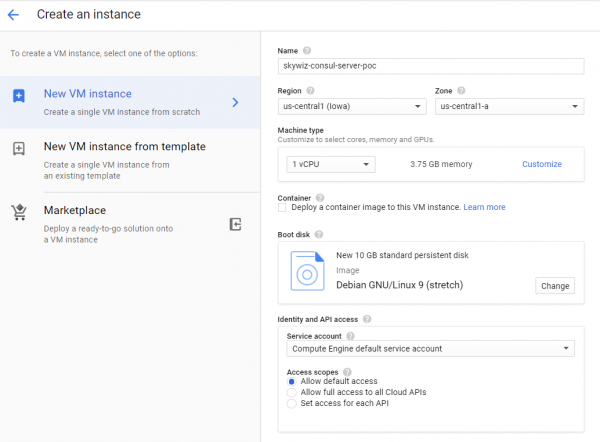

- Start the virtual machine (instance / server).

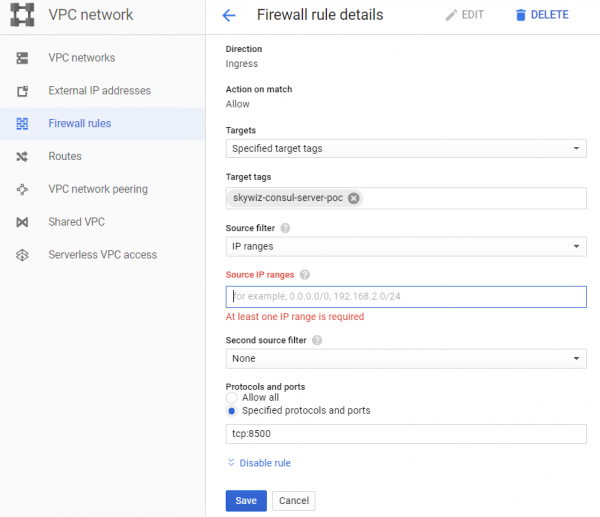

- Create a firewall rule (a security group in AWS):

- I like to assign the same machine name to the rule and the network tag, in this case "skywiz-consul-server-poc".

- Find the IP address of your local computer and add it to the list of source IP addresses so we can access the user interface (UI).

- Open port 8500 for the UI and click Create. We will modify this firewall rule again soon.

- Add the firewall rule to the instance: go back to the Consul server VM's details page, add "skywiz-consul-server-poc" to the Network tags field, and click Save.

- Install Consul on the virtual machine. Remember that you need Consul version ≥ 1.5.

- Let's set up a single-node Consul server. The preparation steps are as follows:

```bash
groupadd --system consul
useradd -s /sbin/nologin --system -g consul consul
mkdir -p /var/lib/consul
chown -R consul:consul /var/lib/consul
chmod -R 775 /var/lib/consul
mkdir /etc/consul.d
chown -R consul:consul /etc/consul.d
```

- For a more detailed guide on installing Consul and setting up a 3-node cluster, see the Consul documentation.

- Create the file /etc/consul.d/agent.json with the following content:

### /etc/consul.d/agent.json

```json
{
  "acl": {
    "enabled": true,
    "default_policy": "deny",
    "enable_token_persistence": true
  }
}
```

- Start our Consul server:

```bash
consul agent \
  -server \
  -ui \
  -client=0.0.0.0 \
  -data-dir=/var/lib/consul \
  -bootstrap-expect=1 \
  -config-dir=/etc/consul.d
```

- You should see a bunch of output and eventually "... update blocked by ACLs".

- Find the external IP address of the Consul server and open a browser with that IP address on port 8500. Make sure the UI opens.

- Try adding a key/value pair. You should get an error: we started the Consul server with ACLs enabled and a default policy of "deny".

- Go back to your shell on the Consul server (keep the agent running, for example in the background or in a separate session) and type the following:

```bash
consul acl bootstrap
```

- Find the "SecretID" value in the output and copy it somewhere safe; we'll need it later. Then return to the UI and, on the ACL tab, enter this SecretID.

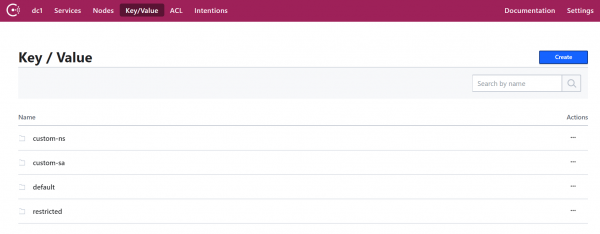

- Now add a key/value pair. For this POC, add the following: key: "custom-ns/test_key", value: "I'm in the custom-ns folder!"
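If you prefer the shell to the UI for these last steps, the sketch below parses the SecretID out of the `consul acl bootstrap` text output and writes the test key with `consul kv put`. It assumes the bootstrap output prints `SecretID:` at the start of a line followed by the value, as Consul 1.5 does; both function names are my own.

```bash
#!/usr/bin/env bash
# Hypothetical helper: extract the SecretID from `consul acl bootstrap` output (stdin).
bootstrap_secret_id() {
  awk '$1 == "SecretID:" { print $2 }'
}

# Hypothetical helper: write the PoC test key using the given token.
# The value includes the surrounding double quotes, as entered in the UI.
seed_test_key() {
  local token="$1"
  CONSUL_HTTP_TOKEN="$token" consul kv put custom-ns/test_key "\"I'm in the custom-ns folder!\""
}
```

Usage on the server: `SECRET=$(consul acl bootstrap | bootstrap_secret_id)`, then `seed_test_key "$SECRET"`. Note that bootstrapping only works once per cluster, so keep the captured SecretID.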

Starting a Kubernetes cluster for our application with the Consul client as a DaemonSet

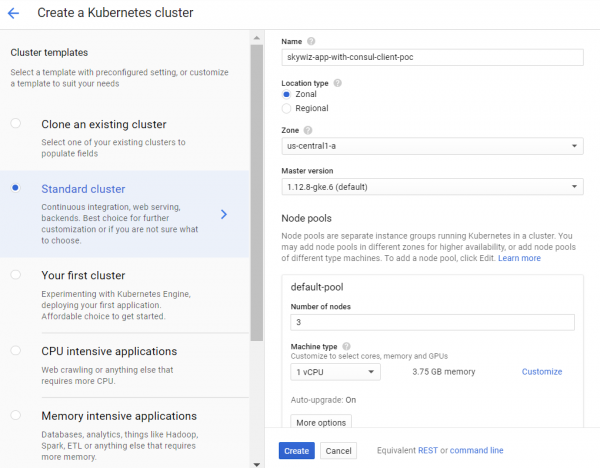

- Create a K8s (Kubernetes) cluster. We will create it in the same zone as the server for faster access and so we can use the same subnet to easily connect with internal IPs. We'll name it "skywiz-app-with-consul-client-poc".

- As a side note, here is a good guide I came across when setting up a Consul POC cluster with Consul Connect.

- We will also use the HashiCorp Helm chart with an extended values file.

- Install and configure Helm. Configuration steps:

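```bash
kubectl create serviceaccount tiller --namespace kube-system
kubectl create clusterrolebinding tiller-admin-binding \
  --clusterrole=cluster-admin --serviceaccount=kube-system:tiller
./helm init --service-account=tiller
./helm repo update
```

- Helm chart: HashiCorp's consul-helm chart, available locally as ./consul-helm (the path used by the install command below).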

- Use the following values file (note that I have disabled most components):

### poc-helm-consul-values.yaml

```yaml
global:
  enabled: false
  image: "consul:latest"

# Expose the Consul UI through this LoadBalancer
ui:
  enabled: false

# Allow Consul to inject the Connect proxy into Kubernetes containers
connectInject:
  enabled: false

# Configure a Consul client on Kubernetes nodes. GRPC listener is required for Connect.
client:
  enabled: true
  join: ["<PRIVATE_IP_CONSUL_SERVER>"]
  extraConfig: |
    {
      "acl" : {
        "enabled": true,
        "default_policy": "deny",
        "enable_token_persistence": true
      }
    }

# Minimal Consul configuration. Not suitable for production.
server:
  enabled: false

# Sync Kubernetes and Consul services
syncCatalog:
  enabled: false
```

- Apply the Helm chart:

```bash
./helm install -f poc-helm-consul-values.yaml ./consul-helm --name skywiz-app-with-consul-client-poc
```

- When the Consul client pods start, they need network access to the Consul server, so let's allow it.

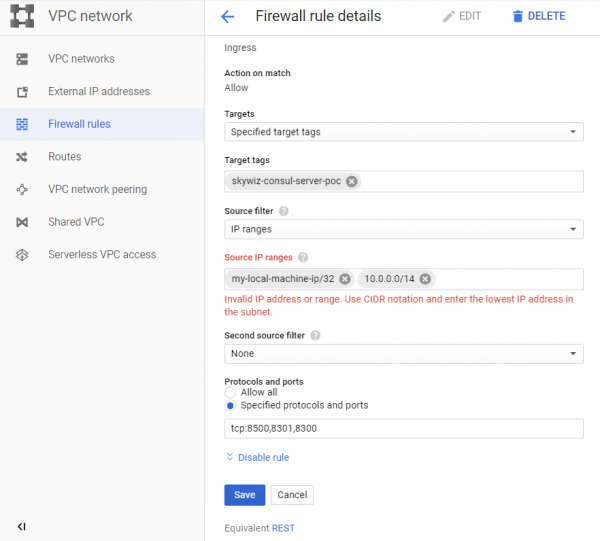

- Note the "Pod address range" shown on the cluster details page, then go back to our "skywiz-consul-server-poc" firewall rule.

- Add the pod address range to the rule's source IP ranges and open ports 8301 and 8300.

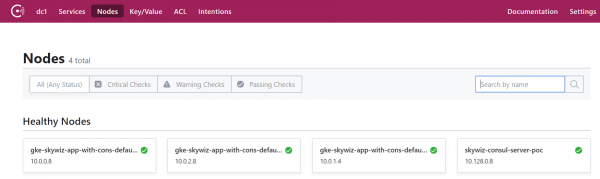

- Go to the Consul UI; within a few minutes you should see our cluster's nodes appear in the Nodes tab.
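The firewall changes above can also be scripted with the gcloud CLI. This is a sketch under assumptions: the rule name is the one used in this PoC, your workstation range and the pod address range are placeholders you pass in, and I also open UDP on 8301 because Consul's documentation lists the LAN gossip port as both TCP and UDP. On update, `--allow` and `--source-ranges` replace the existing lists, which is why port 8500 and your own IP are repeated here. The function name is hypothetical.

```bash
#!/usr/bin/env bash
# Hypothetical wrapper around `gcloud compute firewall-rules update`.
# $1: your workstation's IP range, e.g. 203.0.113.7/32 (placeholder)
# $2: the cluster's pod address range from the cluster details page
open_consul_ports() {
  local my_ip_range="$1" pod_range="$2"
  # 8500: HTTP API/UI, 8300: server RPC, 8301: LAN gossip (TCP and UDP)
  gcloud compute firewall-rules update skywiz-consul-server-poc \
    --allow=tcp:8500,tcp:8300,tcp:8301,udp:8301 \
    --source-ranges="${my_ip_range},${pod_range}"
}
```

The rule keeps its target tag, so it still applies to the VM tagged "skywiz-consul-server-poc".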

Configuring the auth method by integrating Consul with Kubernetes

- Return to the Consul server shell and export the token you saved earlier:

```bash
export CONSUL_HTTP_TOKEN=<SecretID>
```

- We need the following information from our Kubernetes cluster to instantiate the auth method:

- kubernetes host

```bash
kubectl get endpoints | grep kubernetes
```

- kubernetes-service-account-jwt

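```bash
# "-e" keeps grep from treating the leading "-" of the pattern as an option
kubectl get sa <helm_deployment_name>-consul-client -o yaml | grep -e "- name:"
kubectl get secret <secret_name_from_prev_command> -o yaml | grep token:
```

- The token is base64-encoded, so decode it with your favorite tool (for example, base64 -d).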

- kubernetes-ca-cert

```bash
kubectl get secret <secret_name_from_prev_command> -o yaml | grep ca.crt:
```

- Take the "ca.crt" value, base64-decode it, and save the resulting certificate to a file named "ca.crt".
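Alternatively, the three values above can be collected non-interactively with kubectl's JSONPath output instead of grepping YAML. A sketch, assuming a cluster of that era where the service account still has an auto-generated token secret listed under `.secrets`, and that your current kubectl context points at the PoC cluster. Note that it takes the host from your kubeconfig rather than from the endpoints lookup. The function name and output file layout are my own.

```bash
#!/usr/bin/env bash
# Hypothetical helper: gather the inputs for `consul acl auth-method create`.
# $1: service account name, e.g. skywiz-app-with-consul-client-poc-consul-client
# $2: output directory that will receive "host", "jwt" and "ca.crt"
fetch_auth_method_params() {
  local sa="$1" out="$2" secret
  mkdir -p "$out"
  # API server URL of the current kubectl context.
  kubectl config view --minify -o jsonpath='{.clusters[0].cluster.server}' > "$out/host"
  # Name of the token secret attached to the service account.
  secret=$(kubectl get sa "$sa" -o jsonpath='{.secrets[0].name}')
  # Secret data is base64-encoded; decode it on the way out.
  kubectl get secret "$secret" -o jsonpath='{.data.token}' | base64 -d > "$out/jwt"
  kubectl get secret "$secret" -o jsonpath='{.data.ca\.crt}' | base64 -d > "$out/ca.crt"
}
```

The results then plug into the command below as `-kubernetes-host "$(cat params/host)"`, `-kubernetes-ca-cert=@params/ca.crt` and `-kubernetes-service-account-jwt="$(cat params/jwt)"`, where "params" is whatever directory you passed.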

- Now instantiate the auth method, replacing the placeholders with the values you just received.

```bash
consul acl auth-method create \
  -type "kubernetes" \
  -name "auth-method-skywiz-consul-poc" \
  -description "This is an auth method using kubernetes for the cluster skywiz-app-with-consul-client-poc" \
  -kubernetes-host "<k8s_endpoint_retrieved_earlier>" \
  -kubernetes-ca-cert=@ca.crt \
  -kubernetes-service-account-jwt="<decoded_token_retrieved_earlier>"
```

- Next, we need to create a policy and attach it to a new role. You can use the Consul UI for this part, but we will use the command line.

- Write a policy:

### kv-custom-ns-policy.hcl

```hcl
key_prefix "custom-ns/" {
  policy = "write"
}
```

- Apply the policy:

```bash
consul acl policy create \
  -name kv-custom-ns-policy \
  -description "This is an example policy for kv at custom-ns/" \
  -rules @kv-custom-ns-policy.hcl
```

- Find the ID of the policy you just created in the output.

- Create a role with the new policy:

```bash
consul acl role create \
  -name "custom-ns-role" \
  -description "This is an example role for custom-ns namespace" \
  -policy-id <policy_id>
```

- We will now associate our new role with the auth method instance through a binding rule. Note that the "selector" flag determines whether a login request receives this role. Check the documentation for other selector options:

```bash
consul acl binding-rule create \
  -method=auth-method-skywiz-consul-poc \
  -bind-type=role \
  -bind-name='custom-ns-role' \
  -selector='serviceaccount.namespace=="custom-ns"'
```

Final configurations

Access rights

- Create permissions. We need to allow Consul to verify the K8s service account token and identify its owner.

- Write the following to the file skywiz-poc-consul-server_rbac.yaml:

### skywiz-poc-consul-server_rbac.yaml

```yaml
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: review-tokens
  namespace: default
subjects:
- kind: ServiceAccount
  name: skywiz-app-with-consul-client-poc-consul-client
  namespace: default
roleRef:
  kind: ClusterRole
  name: system:auth-delegator
  apiGroup: rbac.authorization.k8s.io
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: service-account-getter
  namespace: default
rules:
- apiGroups: [""]
  resources: ["serviceaccounts"]
  verbs: ["get"]
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: get-service-accounts
  namespace: default
subjects:
- kind: ServiceAccount
  name: skywiz-app-with-consul-client-poc-consul-client
  namespace: default
roleRef:
  kind: ClusterRole
  name: service-account-getter
  apiGroup: rbac.authorization.k8s.io
```

- Let's create the RBAC objects:

```bash
kubectl create -f skywiz-poc-consul-server_rbac.yaml
```

Connecting to the Consul client

- There are several options for connecting to the DaemonSet, but we'll go with the following simple solution:

- Apply the following file:

### poc-consul-client-ds-svc.yaml

```yaml
apiVersion: v1
kind: Service
metadata:
  name: consul-ds-client
spec:
  selector:
    app: consul
    chart: consul-helm
    component: client
    hasDNS: "true"
    release: skywiz-app-with-consul-client-poc
  ports:
  - protocol: TCP
    port: 80
    targetPort: 8500
```

- Then use the following inline command to create a ConfigMap. Note that it refers to the name of our service; change it if necessary.

```bash
cat <<EOF | kubectl apply -f -
apiVersion: v1
kind: ConfigMap
metadata:
  labels:
    addonmanager.kubernetes.io/mode: EnsureExists
  name: kube-dns
  namespace: kube-system
data:
  stubDomains: |
    {"consul": ["$(kubectl get svc consul-ds-client -o jsonpath='{.spec.clusterIP}')"]}
EOF
```

Testing the auth method

Now let's see the magic in action!
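Before running the tests, it may be worth confirming that the RBAC objects from the Access rights step took effect. `kubectl auth can-i` with `--as` impersonates the Consul client's service account and exits with status 0 only when the action is allowed. The sketch assumes your own kubectl user may impersonate service accounts (a cluster admin can); the function names are hypothetical.

```bash
#!/usr/bin/env bash
# Hypothetical helper: the Kubernetes username of a service account.
sa_user() {
  printf 'system:serviceaccount:%s:%s' "$1" "$2"
}

# Hypothetical pre-flight check for the permissions Consul relies on.
check_consul_rbac() {
  local as
  as=$(sa_user default skywiz-app-with-consul-client-poc-consul-client)
  # Granted by system:auth-delegator: validating pod tokens via TokenReview.
  kubectl auth can-i create tokenreviews.authentication.k8s.io --as="$as" || return 1
  # Granted by the service-account-getter ClusterRole.
  kubectl auth can-i get serviceaccounts --as="$as" || return 1
}
```

Running `check_consul_rbac` should print "yes" twice; a "no" points at the ClusterRoleBinding subjects (service account name or namespace).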

- Create a few more key folders that contain the same key name as before (e.g. custom-sa/test_key and default/test_key), with values of your choice. Create the appropriate policies and roles for the new key paths; we'll do the bindings later.

Custom namespace test:

- Let's create our own namespace:

```bash
kubectl create namespace custom-ns
```

- Let's create a pod in our new namespace. Write the configuration for the pod:

### poc-ubuntu-custom-ns.yaml

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: poc-ubuntu-custom-ns
  namespace: custom-ns
spec:
  containers:
  - name: poc-ubuntu-custom-ns
    image: ubuntu
    command: ["/bin/bash", "-ec", "sleep infinity"]
  restartPolicy: Never
```

- Create the pod:

```bash
kubectl create -f poc-ubuntu-custom-ns.yaml
```

- Once the container is up and running, open a shell in it and install curl:

```bash
# on your workstation: open a shell in the pod
kubectl exec -it poc-ubuntu-custom-ns -n custom-ns -- /bin/bash
# inside the container: install curl
apt-get update && apt-get install curl -y
```

- We will now send a login request to Consul using the auth method we created earlier.

- To view the token mounted from your service account:

```bash
cat /run/secrets/kubernetes.io/serviceaccount/token
```

- Write the following to a file inside the container:

### payload.json

```json
{
  "AuthMethod": "auth-method-skywiz-consul-poc",
  "BearerToken": "<jwt_token>"
}
```

- Log in!

```bash
curl \
  --request POST \
  --data @payload.json \
  consul-ds-client.default.svc.cluster.local/v1/acl/login
```

- To run the above steps in one go (since we will be running multiple tests), you can do the following:

```bash
# Inner quotes are escaped so the JSON keeps its double quotes
echo "{
  \"AuthMethod\": \"auth-method-skywiz-consul-poc\",
  \"BearerToken\": \"$(cat /run/secrets/kubernetes.io/serviceaccount/token)\"
}" \
| curl \
  --request POST \
  --data @- \
  consul-ds-client.default.svc.cluster.local/v1/acl/login
```

- It works! Or at least it should. Now take the SecretID from the response and try to read the key/value we should have access to.

```bash
curl \
  consul-ds-client.default.svc.cluster.local/v1/kv/custom-ns/test_key \
  --header "X-Consul-Token: <SecretID_from_prev_response>"
```

- You can base64-decode the "Value" field and check that it matches the value of custom-ns/test_key in the UI. If you used the same value as earlier in this guide, the encoded value will be IkknbSBpbiB0aGUgY3VzdG9tLW5zIGZvbGRlciEi.
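Since the next tests repeat the same login/read cycle, it can be wrapped in a few helpers. This is a sketch under assumptions: it runs inside a test pod with curl installed, the service from the Connecting section is still "consul-ds-client" in the default namespace, and the responses contain the SecretID and Value fields seen above. It extracts them with grep/sed instead of a JSON tool, which is fine for a PoC but brittle. Building the body with printf also sidesteps the quoting pitfalls of embedding JSON in echo. All function names are mine.

```bash
#!/usr/bin/env bash
CONSUL_ADDR="${CONSUL_ADDR:-http://consul-ds-client.default.svc.cluster.local}"
SA_TOKEN_FILE="${SA_TOKEN_FILE:-/run/secrets/kubernetes.io/serviceaccount/token}"

# Build the login request body for an auth method and a bearer token.
login_payload() {
  printf '{"AuthMethod": "%s", "BearerToken": "%s"}' "$1" "$2"
}

# Print the first string value of a JSON field read from stdin (no real JSON parsing).
json_field() {
  grep -o "\"$1\": *\"[^\"]*\"" | head -n 1 | sed -e 's/.*: *"//' -e 's/"$//'
}

# Log in with the pod's service account token; print the new Consul token's SecretID.
consul_login() {
  login_payload auth-method-skywiz-consul-poc "$(cat "$SA_TOKEN_FILE")" \
    | curl --silent --fail --request POST --data @- "$CONSUL_ADDR/v1/acl/login" \
    | json_field SecretID
}

# Read a key with the given token and print its base64-decoded value.
consul_kv_get() {
  curl --silent --fail --header "X-Consul-Token: $2" "$CONSUL_ADDR/v1/kv/$1" \
    | json_field Value | base64 -d
}
```

Usage inside the pod: `TOKEN=$(consul_login)`, then `consul_kv_get custom-ns/test_key "$TOKEN"`. With `--fail`, a 403 (permission denied) produces empty output instead of an error body.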

Custom service account test:

- Create a custom ServiceAccount with the following command:

```bash
kubectl apply -f - <<EOF
apiVersion: v1
kind: ServiceAccount
metadata:
  name: custom-sa
EOF
```

- Create a new configuration file for the pod. Note that I included the curl installation to save some effort :)

### poc-ubuntu-custom-sa.yaml

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: poc-ubuntu-custom-sa
  namespace: default
spec:
  serviceAccountName: custom-sa
  containers:
  - name: poc-ubuntu-custom-sa
    image: ubuntu
    command: ["/bin/bash","-ec"]
    args: ["apt-get update && apt-get install curl -y; sleep infinity"]
  restartPolicy: Never
```

- Create the pod, then start a shell inside the container:

```bash
kubectl create -f poc-ubuntu-custom-sa.yaml
kubectl exec -it poc-ubuntu-custom-sa -- /bin/bash
```

- Log in!

```bash
# Inner quotes are escaped so the JSON keeps its double quotes
echo "{
  \"AuthMethod\": \"auth-method-skywiz-consul-poc\",
  \"BearerToken\": \"$(cat /run/secrets/kubernetes.io/serviceaccount/token)\"
}" \
| curl \
  --request POST \
  --data @- \
  consul-ds-client.default.svc.cluster.local/v1/acl/login
```

- Permission denied. Oops, we forgot to add a binding rule with the appropriate permissions; let's do that now.

Repeat the previous steps above:

a) Create an identical Policy for the "custom-sa/" prefix.

b) Create a Role, name it "custom-sa-role"

c) Attach the Policy to the Role.

- Create a Binding Rule (only possible from the CLI/API). Notice the different value of the selector flag.

```bash
consul acl binding-rule create \
  -method=auth-method-skywiz-consul-poc \
  -bind-type=role \
  -bind-name='custom-sa-role' \
  -selector='serviceaccount.name=="custom-sa"'
```

- Log in again from the "poc-ubuntu-custom-sa" container. Success!

- Check our access to the custom-sa/ key path.

```bash
curl \
  consul-ds-client.default.svc.cluster.local/v1/kv/custom-sa/test_key \
  --header "X-Consul-Token: <SecretID>"
```

- You can also confirm that this token does not grant access to the "custom-ns/" KV path: just repeat the command above with the "custom-sa" prefix replaced by "custom-ns".

Permission denied.
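The Policy, Role and Binding Rule sequence is repeated for every key prefix, so it can be wrapped in one function. A sketch, assuming it runs on the Consul server with CONSUL_HTTP_TOKEN exported as before; instead of copying the policy ID by hand, it passes the policy by name via the `-policy-name` flag of `consul acl role create`. The function name and the `kv-<prefix>-policy` naming scheme are my own, modeled on kv-custom-ns-policy.

```bash
#!/usr/bin/env bash
# Hypothetical helper: grant write access on "<prefix>/" to identities matching a selector.
# $1: KV prefix (e.g. custom-sa), $2: role name, $3: binding rule selector
grant_prefix() {
  local prefix="$1" role="$2" selector="$3" rules rc=0
  local policy="kv-${prefix}-policy"
  rules=$(mktemp)
  # Same shape as kv-custom-ns-policy.hcl, parameterised by the prefix.
  printf 'key_prefix "%s/" {\n  policy = "write"\n}\n' "$prefix" > "$rules"
  consul acl policy create -name "$policy" -rules "@${rules}" \
    && consul acl role create -name "$role" -policy-name "$policy" \
    && consul acl binding-rule create \
         -method=auth-method-skywiz-consul-poc \
         -bind-type=role \
         -bind-name="$role" \
         -selector="$selector" \
    || rc=1
  rm -f "$rules"   # clean up the temporary policy file either way
  return "$rc"
}
```

For the custom service account this would be `grant_prefix custom-sa custom-sa-role 'serviceaccount.name=="custom-sa"'`; each step only runs if the previous one succeeded.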

Overlay example:

- Note that the roles from all matching binding rules are added to the token.

- Our "poc-ubuntu-custom-sa" pod is in the default namespace, so let's use it for another binding rule.

- Repeat previous steps:

a) Create an identical Policy for the "default/" key prefix.

b) Create a Role, name it "default-ns-role".

c) Attach the Policy to the Role.

- Create a Binding Rule (only possible from the CLI/API):

```bash
consul acl binding-rule create \
  -method=auth-method-skywiz-consul-poc \
  -bind-type=role \
  -bind-name='default-ns-role' \
  -selector='serviceaccount.namespace=="default"'
```

- Return to the "poc-ubuntu-custom-sa" container and try to read the "default/" KV path.

- Permission denied.

You can view the roles attached to each token in the UI under ACL > Tokens. As you can see, our current token has only "custom-sa-role" attached. The token we are using was generated when we logged in, and at that time only one binding rule matched. We need to log in again and use the new token.

- Make sure you can read both the "custom-sa/" and "default/" KV paths.

Success!

This is because our "poc-ubuntu-custom-sa" pod matches both the "custom-sa" and "default-ns" binding rules.
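The overlay behaviour boils down to "every binding rule whose selector matches contributes its role, evaluated once at login time". The toy model below makes that concrete. It is not Consul's selector engine, which supports a richer expression language; it only understands the single `field=="value"` form used in this article, with rules given one per line as `<selector> <role>`.

```bash
#!/usr/bin/env bash
# Toy model of binding-rule evaluation (NOT Consul's implementation).
# stdin: one rule per line, "<selector> <role>", selector like field=="value"
# $1, $2: the caller's serviceaccount.namespace and serviceaccount.name
matching_roles() {
  local ns="$1" name="$2" selector role field value actual
  while read -r selector role; do
    field="${selector%%==*}"
    value="${selector#*==}"
    value="${value//\"/}"            # drop the quotes around the value
    case "$field" in
      serviceaccount.namespace) actual="$ns" ;;
      serviceaccount.name)      actual="$name" ;;
      *) continue ;;                 # unsupported field: never matches here
    esac
    if [ "$actual" = "$value" ]; then
      printf '%s\n' "$role"          # every matching rule contributes its role
    fi
  done
}
```

Feeding it the three rules from this article with namespace "default" and name "custom-sa" yields both "custom-sa-role" and "default-ns-role", matching what the fresh token shows. Because the evaluation happens only at login, adding a rule never changes tokens that already exist.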

Conclusion

Token TTL management?

At the time of this writing, there is no built-in way to set a TTL for tokens generated by this auth method. Such a feature would be a great opportunity to make Consul's authorization automation more secure.

There is an option to manually create a token with TTL:

ExpirationTime: "The time this token will be revoked. (Optional; added in Consul 1.5.0)" This field is only available when creating or updating tokens manually.

Hopefully, we will soon be able to control how tokens are generated (per binding rule or per auth method) and set a TTL.

Until then, I suggest calling the logout endpoint in your application logic.
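A sketch of that suggestion: do the work with the login token, then call `/v1/acl/logout` whether or not the work succeeded, so the token is destroyed instead of lingering without a TTL. The logout endpoint is meant for tokens created by the login endpoint and takes the token to destroy from the `X-Consul-Token` header. The address default matches the in-cluster service used in the tests; the function names are mine.

```bash
#!/usr/bin/env bash
CONSUL_ADDR="${CONSUL_ADDR:-http://consul-ds-client.default.svc.cluster.local}"

# Destroy a token previously obtained from /v1/acl/login.
consul_logout() {
  curl --silent --fail --request POST \
    --header "X-Consul-Token: $1" \
    "$CONSUL_ADDR/v1/acl/logout"
}

# Run a command with a login token exported, then log out.
# $1: a token obtained from the login endpoint; remaining args: the command.
with_login_token() {
  local token="$1"; shift
  CONSUL_HTTP_TOKEN="$token" "$@"
  local rc=$?                      # exit status of the wrapped command
  consul_logout "$token"
  return "$rc"
}
```

For example, `with_login_token "$SECRET_ID" consul kv get custom-sa/test_key`, where SECRET_ID holds the SecretID returned by the login request. This does not survive the process being killed mid-command, so it narrows the window rather than replacing a real TTL.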


Source: habr.com