Microsoft has announced the open source release of the OpenHCL paravirtualization layer and of OpenVMM, a virtual machine monitor developed specifically to support OpenHCL. The OpenVMM and OpenHCL code is written in Rust and distributed under the MIT license. OpenVMM is a second-level (type 2) hypervisor that operates in the same protection ring as the operating system kernel, similar to products such as VirtualBox and VMware Workstation. It can run on host systems based on Linux (x86_64), Windows (x86_64, Aarch64) and macOS (x86_64, Aarch64), using the virtualization APIs KVM, MSHV (Microsoft Hypervisor), WHP (Windows Hypervisor Platform) and Hypervisor.framework.

Among the features supported in OpenVMM are:

- Booting in UEFI and BIOS modes, and direct boot of the Linux kernel;

- Support for paravirtualization based on Virtio drivers (virtio-fs, virtio-9p, virtio-net, virtio-pmem);

- Support for paravirtualization based on VMBus (storvsp, netvsp, vpci, framebuffer);

- Emulation of vTPM, NVMe, UART, i440BX + PIIX4 chipset, IDE HDD, PCI and VGA;

- Backends for passing graphics, input devices, console, storage and network access;

- Management via command line interface, interactive console, gRPC and ttrpc.

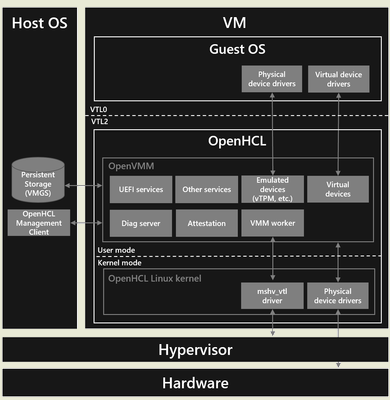

OpenHCL is positioned as an environment of paravirtualization components (a paravisor) that runs on top of the OpenVMM hypervisor. The key feature of virtualization systems based on OpenVMM and OpenHCL is that the paravirtualization components run not on the host system side, but inside the same virtual machine as the guest system. The second-level hypervisor OpenVMM isolates the paravirtualization layer from the guest operating system. In this role, OpenHCL can be regarded as virtual firmware that runs at a higher privilege level than the operating system launched in the guest environment.

The guest system and the OpenHCL components are separated using the concept of virtual trust levels (VTLs), which can be implemented either with software mechanisms or with hardware technologies such as Intel TDX (Trust Domain Extensions), AMD SEV-SNP (Secure Encrypted Virtualization-Secure Nested Paging), and ARM CCA (Confidential Compute Architecture). The OpenHCL components run on a stripped-down build of the Linux kernel that includes only the minimum set of components required for OpenVMM to run.
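The idea of trust levels can be illustrated with a toy model. The sketch below is not OpenVMM or OpenHCL code: the `Vtl`, `Page`, `AccessError` and `read_page` names are hypothetical, and the assignment of the guest to VTL0 and the paravisor to VTL2 is an assumption based on the usual Hyper-V convention rather than on anything stated in this article. It only shows the ordering rule: a more privileged level may access resources of a less privileged one, but not the other way around.

```rust
/// Virtual trust levels, declared from least to most privileged.
/// Assumption: guest OS in VTL0, paravisor in VTL2 (Hyper-V convention).
#[derive(Debug, Clone, Copy, PartialEq, Eq, PartialOrd, Ord)]
enum Vtl {
    Vtl0, // guest operating system
    Vtl2, // paravisor (OpenHCL in this article)
}

/// Hypothetical memory page tagged with the lowest VTL allowed to read it.
struct Page {
    min_vtl: Vtl,
    data: u8,
}

/// Hypothetical error returned when a lower VTL touches a protected page.
#[derive(Debug, PartialEq)]
enum AccessError {
    Denied { requester: Vtl, required: Vtl },
}

/// Allow the read only if the requester is at least as privileged as
/// the page requires. The derived `Ord` follows declaration order,
/// so `Vtl0 < Vtl2`.
fn read_page(requester: Vtl, page: &Page) -> Result<u8, AccessError> {
    if requester >= page.min_vtl {
        Ok(page.data)
    } else {
        Err(AccessError::Denied {
            requester,
            required: page.min_vtl,
        })
    }
}
```

In this model, `read_page(Vtl::Vtl2, &guest_page)` succeeds for a page tagged `Vtl0`, while `read_page(Vtl::Vtl0, &paravisor_page)` returns `AccessError::Denied` for a page tagged `Vtl2`. Real implementations enforce this rule in the hypervisor or in hardware, not in guest-visible code.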

OpenHCL can run on x86-64 and ARM64 platforms, and supports Intel TDX, AMD SEV-SNP, and ARM CCA extensions for additional isolation. OpenHCL includes a set of services, drivers, and emulators used to provide access to hardware, provide virtual devices on the guest system side, and emulate hardware devices (for example, a chip for storing cryptographic keys, vTPM, can be emulated).

To translate hardware access on the guest system side, existing paravirtualization-enabled drivers are used, or devices can be bound directly to the virtual machine, which allows existing guest systems to be migrated to an OpenHCL-based environment without modification. OpenHCL also includes components for diagnosing and debugging virtual machines that run with confidential computing extensions.

The existing open source project COCONUT-SVSM (Secure VM Service Module) also provides services and emulated devices for guest systems running in confidential virtual machines (CVMs). Unlike COCONUT-SVSM, which requires a dedicated interface for interacting with the SVSM, changes to the guest system and separate drivers, OpenHCL allows guest systems to use standard interfaces.

Use cases for the OpenHCL paravisor include migrating existing systems to use Azure Boost hardware accelerators without requiring changes to the guest disk image; running existing guests in virtual machines that provide confidential computing (e.g., based on Intel TDX and AMD SEV-SNP); and providing verified boot for virtual machines using UEFI Secure Boot and vTPM.

The announcement notes separately that the OpenVMM project is focused on use with OpenHCL and is not yet ready for stand-alone production deployment on host systems by end users. Among the problems that prevent OpenVMM from being used in host environments in the traditional way, outside of the OpenHCL bundle, the following are mentioned: poor documentation of the management interface; storage, network and graphics backends that have not been properly optimized for performance; missing support for some drivers (for example, IDE disks and PS/2 mouse); and no guarantee of API stability and functionality. At the same time, the combination of OpenVMM and OpenHCL has already reached production use: Microsoft uses it in the Azure platform (Azure Boost SKU) to run more than 1.5 million virtual machines.

Source: opennet.ru